philosophy

Yondr CEO Graham Dugoni unpacks the technological zeitgeist in this exclusive Big Think interview covering media ecology, leadership, AI, human connection, and much more.

“I think it’s about time we stop allowing every male generation bang their frontal lobe through its most developmental stages.”

When Star Trek’s Captain Picard and The Office’s Dwight Schrute channel philosopher Karl Jaspers, we can all benefit.

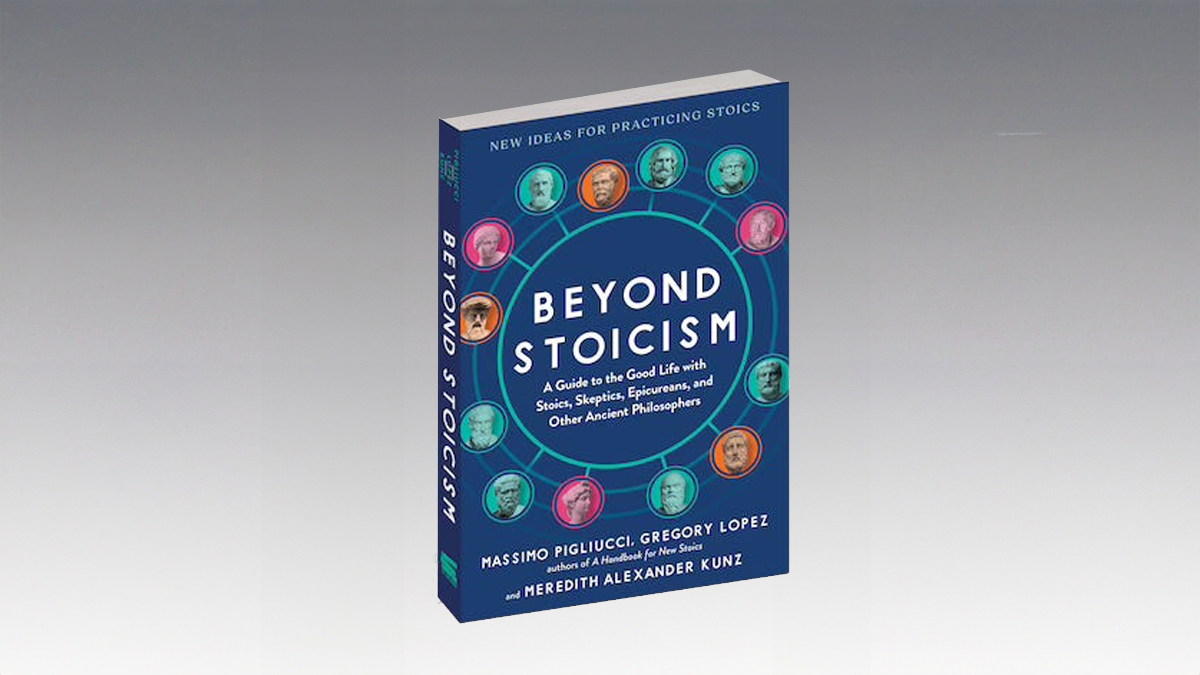

Pleasure, virtue, and doubt are necessary, but each is insufficient on its own.

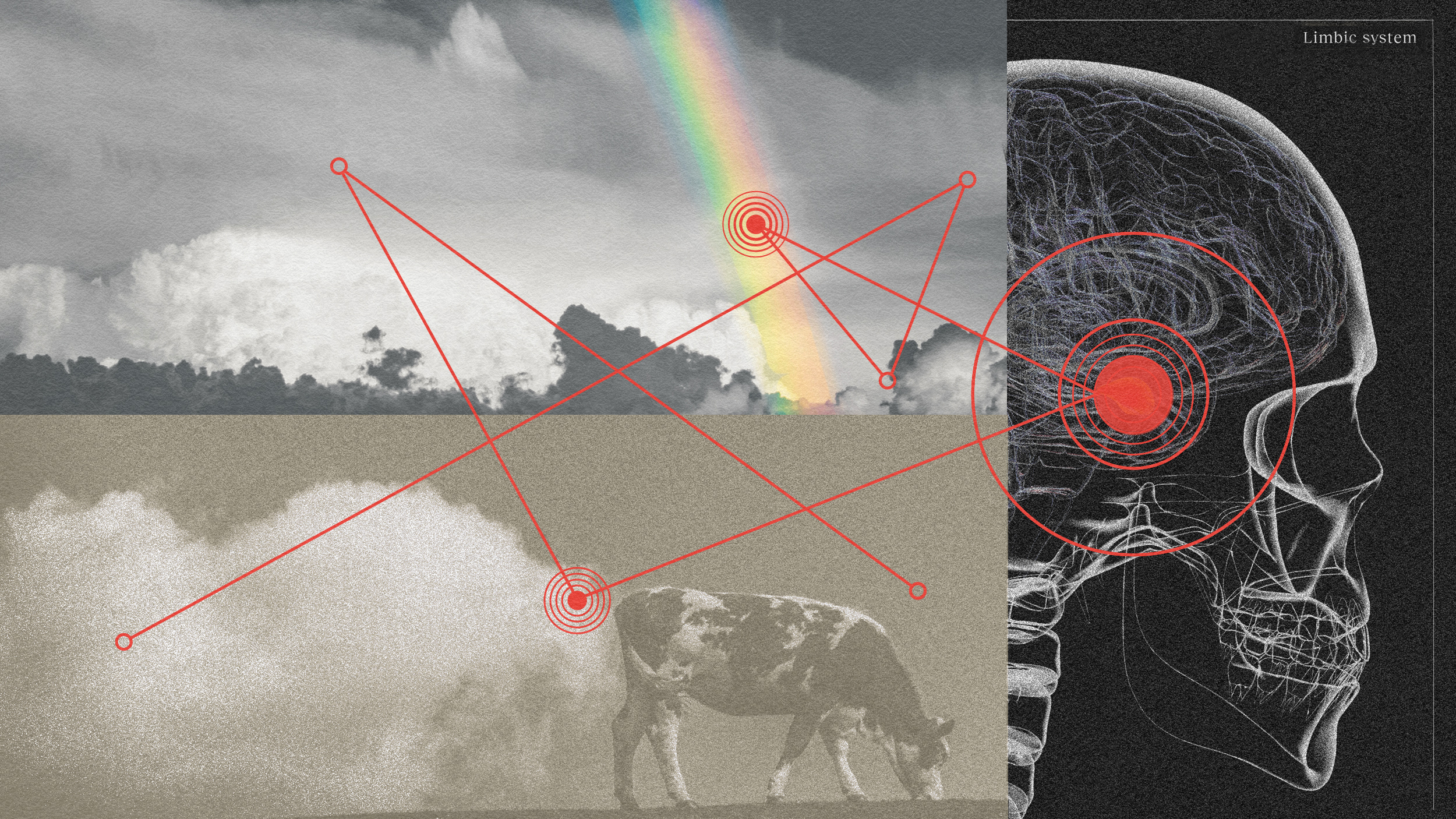

“Okay, dad, but GMOs help feed the world.”

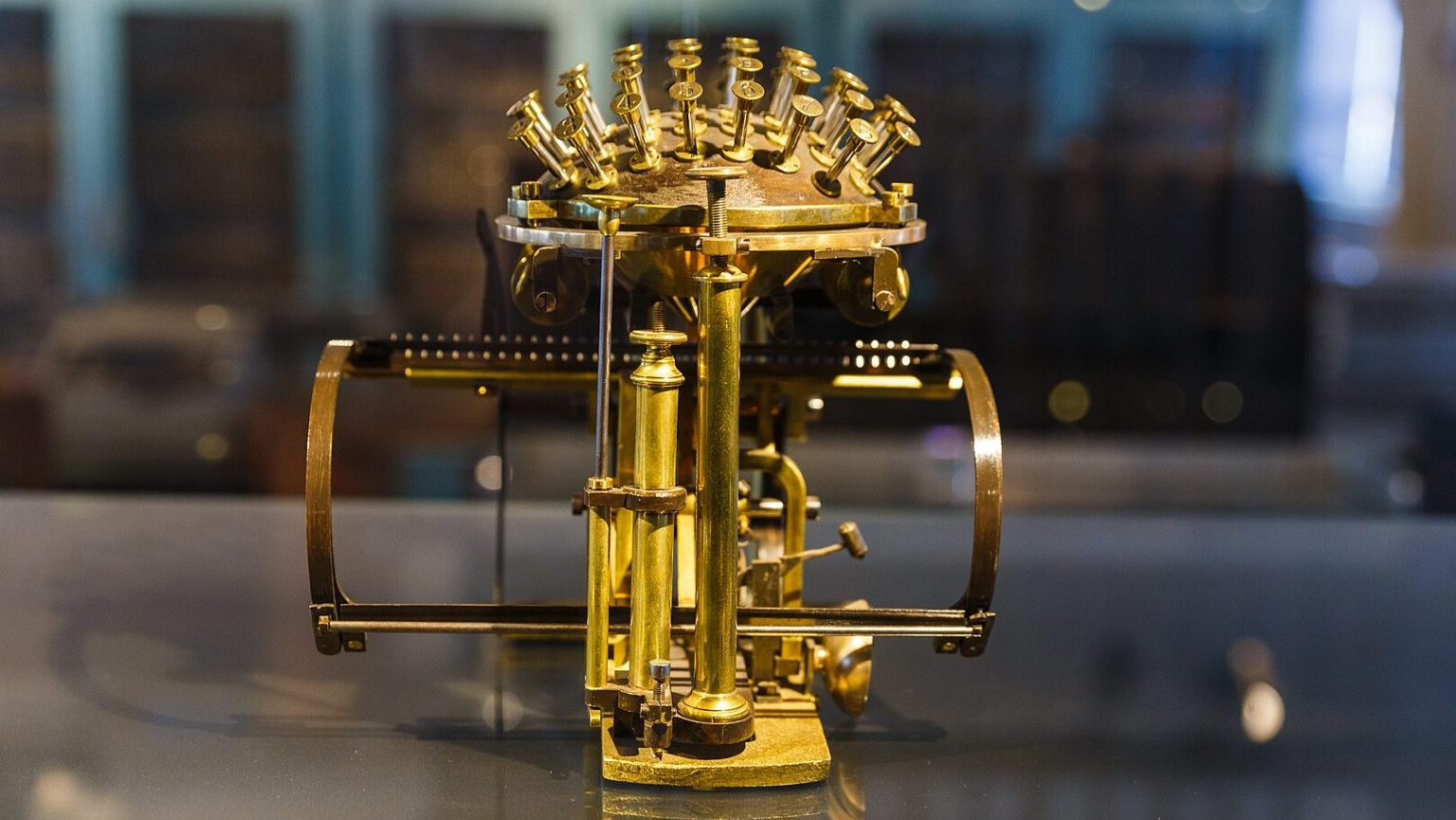

The Malling-Hansen writing ball, with its potential and limitations, redefined Nietzsche’s philosophical and creative expression.

You’re a moody person. You have to be, because understanding moods philosophically can be crucial to your working life.

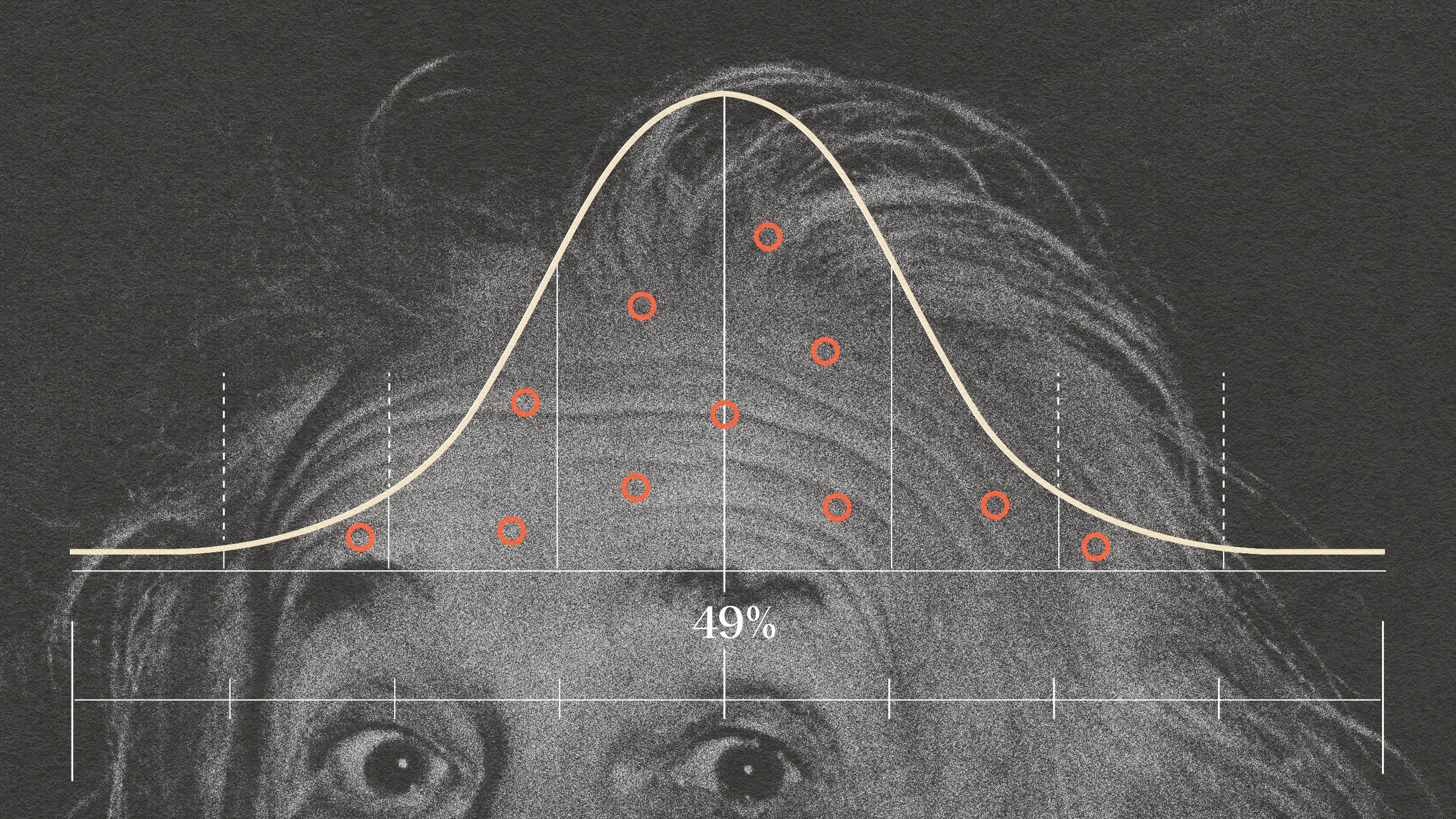

How black and white is your thinking?

Self-help often distills philosophical ideas for the modern ear. Sometimes, it's better to go back to the source.

“We do not experience primarily because we have brains; we experience because we are alive.”

Just because you can’t experience it doesn’t mean it’s not real.

We have it in our power to forgive a debt — and learning to use this power in the workplace can be golden.

What if the barrier to a fulfilled life isn’t technology but culture?

“Could you create a god?” Nietzsche’s titular character asks in “Thus Spoke Zarathustra.”

Plato’s cave metaphor illustrates the cognitive trap of ignorance, where we may be unaware of the limitations of our understanding.

Will “Sausage Party” survive the test of time?

How many scientists does it take to ruin a good conspiracy?

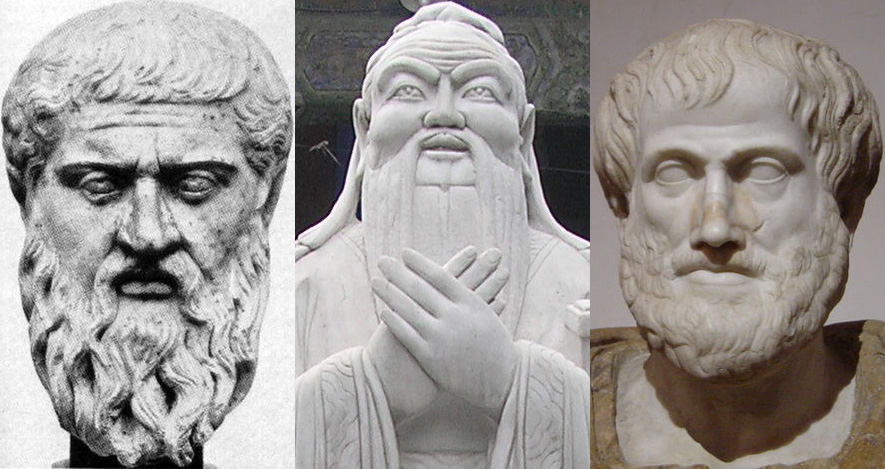

Two great philosophies — but do they work better together?

If you’re an atheist with a vocation, who laid that path for you?

While we’re busy wondering whether machines will ever become conscious, we rarely stop to ask: What happens to us?

“I have a friend who thinks vaccines cause autism,” writes Nina. “What can I do?”

In the 18th century, David Hume argued that we are only motivated to do good when our passions direct us to do so. Was he right?

There’s little more infuriating in the world than being told to “calm down” when you’re in the midst of a simmering grump.

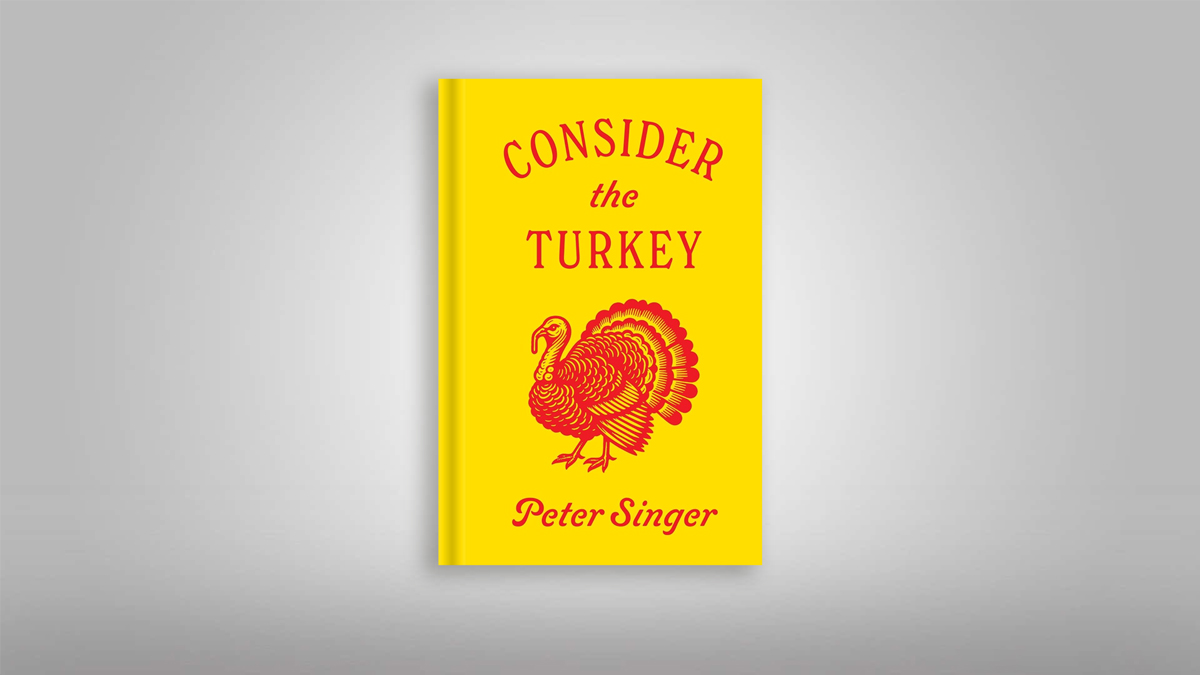

Philosopher Peter Singer argues it’s time to examine a morally dubious practice.

How can “you” move on when the old “you” is gone?

In his latest book, Malcolm Gladwell explores a strange phenomenon of group dynamics.

Reading this article would be such a millennial thing to do.

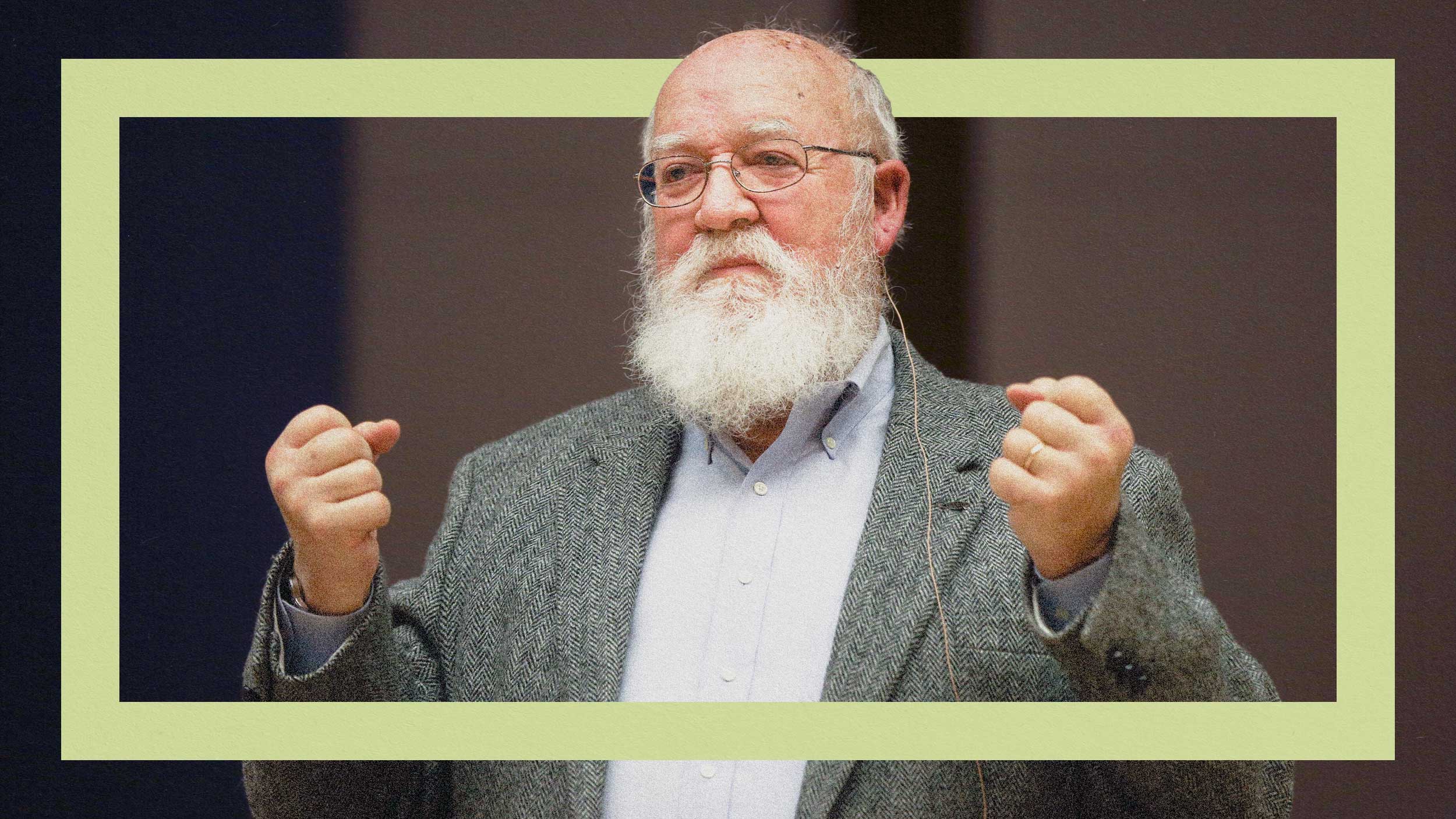

The late philosopher suggested adding a couple of “Occam’s heuristics” to your critical thinking toolbox.

The secret sauce is the real world.

Would you be upset if I called you an eggplant?