Neural network

A neural network was trained to create its own cookbook recipes, resulting in some strange and unappetizing concoctions.

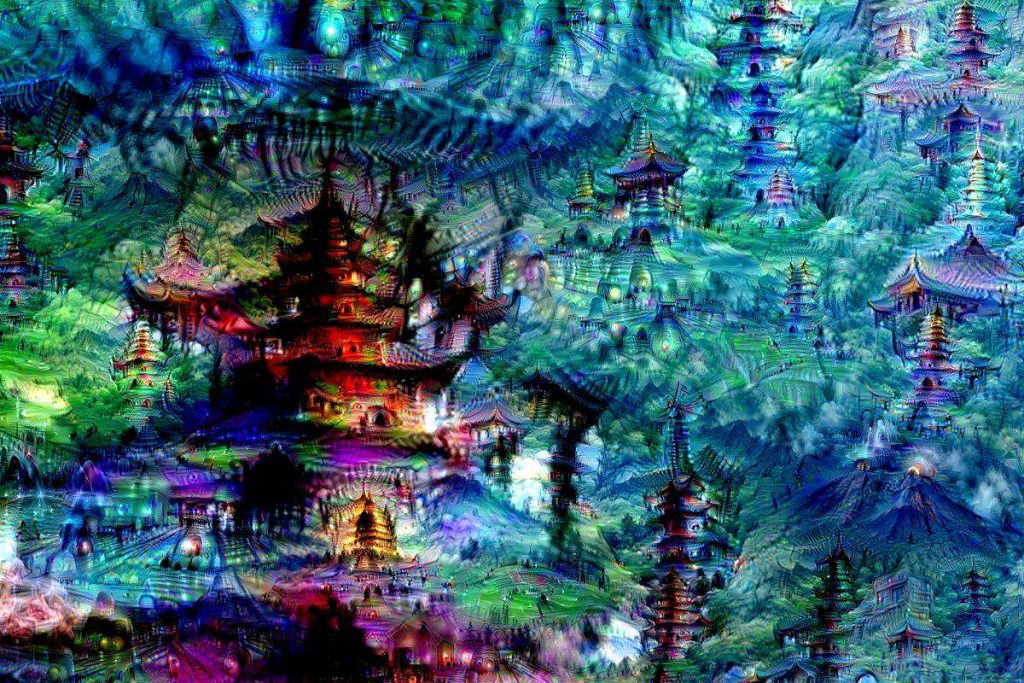

They may look odd, but it’s all part of Google’s plan to solve a major challenge in machine learning: recognizing objects in images.