innovation

Yondr CEO Graham Dugoni unpacks the technological zeitgeist in this exclusive Big Think interview covering media ecology, leadership, AI, human connection, and much more.

Other plans for the tech: organ banking and deep space travel.

Chetan Dube, founder and CEO of Quant, tells Big Think why a pivotal year for agentic AI has just begun.

“You’ll be able to fly twice as fast as a Boeing or Airbus, and it’ll be like the cost of flying business today.”

Why the advertising legend — and author of Alchemy — believes that inefficiency can be genius and insects can unlock innovation.

“Neurotech is not just about the brain,” says Synchron CTO Riki Banerjee, explaining how their tech can help with paralysis, brain diseases, and beyond.

Playing the long game in Japan is about creating something so enduring that it becomes timeless.

And can we run the grid of the future without AI?

Welcome to The Nightcrawler — a weekly newsletter from Eric Markowitz covering tech, innovation, and long-term thinking.

From tulips to Bitcoin, bubbles have been given a bad rap as destroyers of dreams — but they’re essential for our brightest future. Here’s why.

It’s been 65 years since Richard Feynman saw “plenty of room” in the nano-world. Are we finally getting down there?

The Wharton School professor — and author of Co-Intelligence — outlines ways we can tap into the AI advantage safely and effectively.

By looking back at future dreams we can see our current hopes and visions in a whole new light.

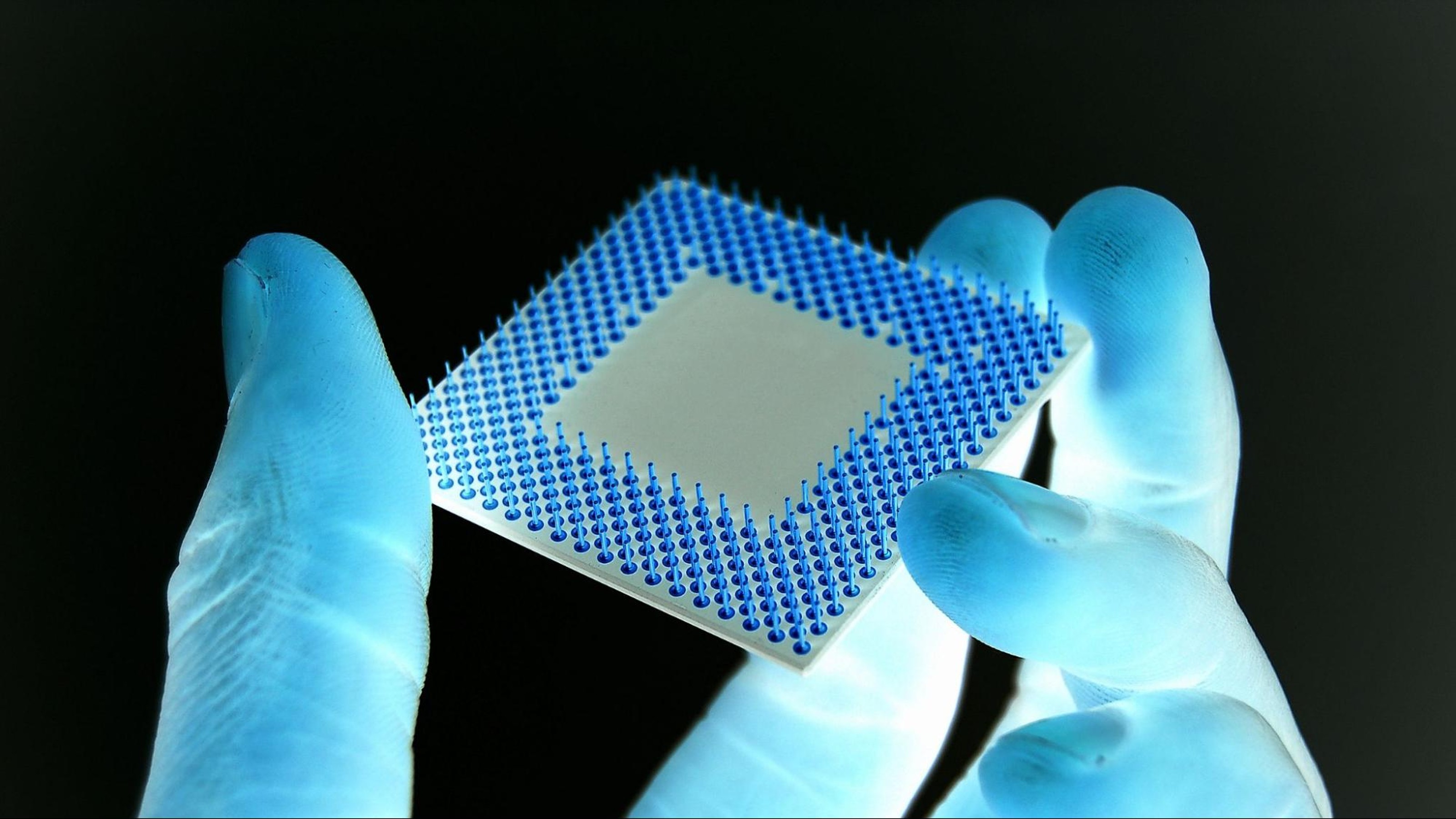

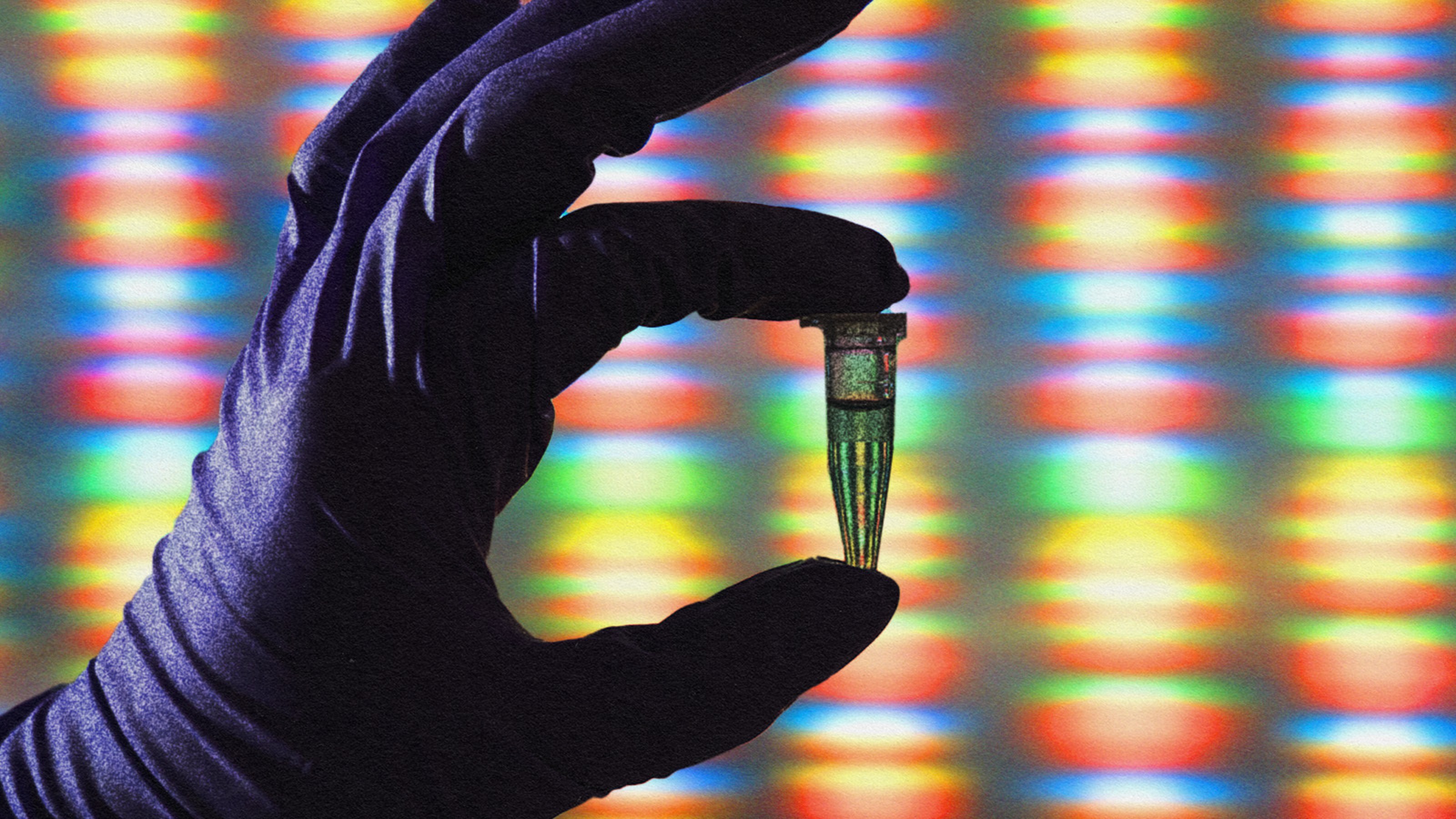

Behind America’s hunt for a superior semiconductor.

“We can build AI scientists that are better than we are… these systems can be superhuman,” says the FutureHouse co-founder.

Magnificent time-tested buildings are filled with lessons in resilience and stability — and the benefits for investment strategy can be huge.

AI software is rapidly accelerating chip design, potentially leveling up the speed of innovation across the economy.

Oxford professor of ethics John Tasioulas argues that we should weigh the lost opportunities for “striving and succeeding” that AI is likely to bring.

The successful tactics of big-name leaders — including Bob Iger, Mary Barra, and Satya Nadella — reveal key approaches to innovation.

Reusable rockets, moon landers, civilian astronauts, and more.

The best autonomous car may be one you don’t even need to own.

“The promise of the Human Genome Project has finally arrived.”

Semyon Dukach — founding partner of VC firm One Way Ventures — adds balance to the founder mode debate.

The US needs 28 million EV chargers by 2030. Here’s how it can get there.