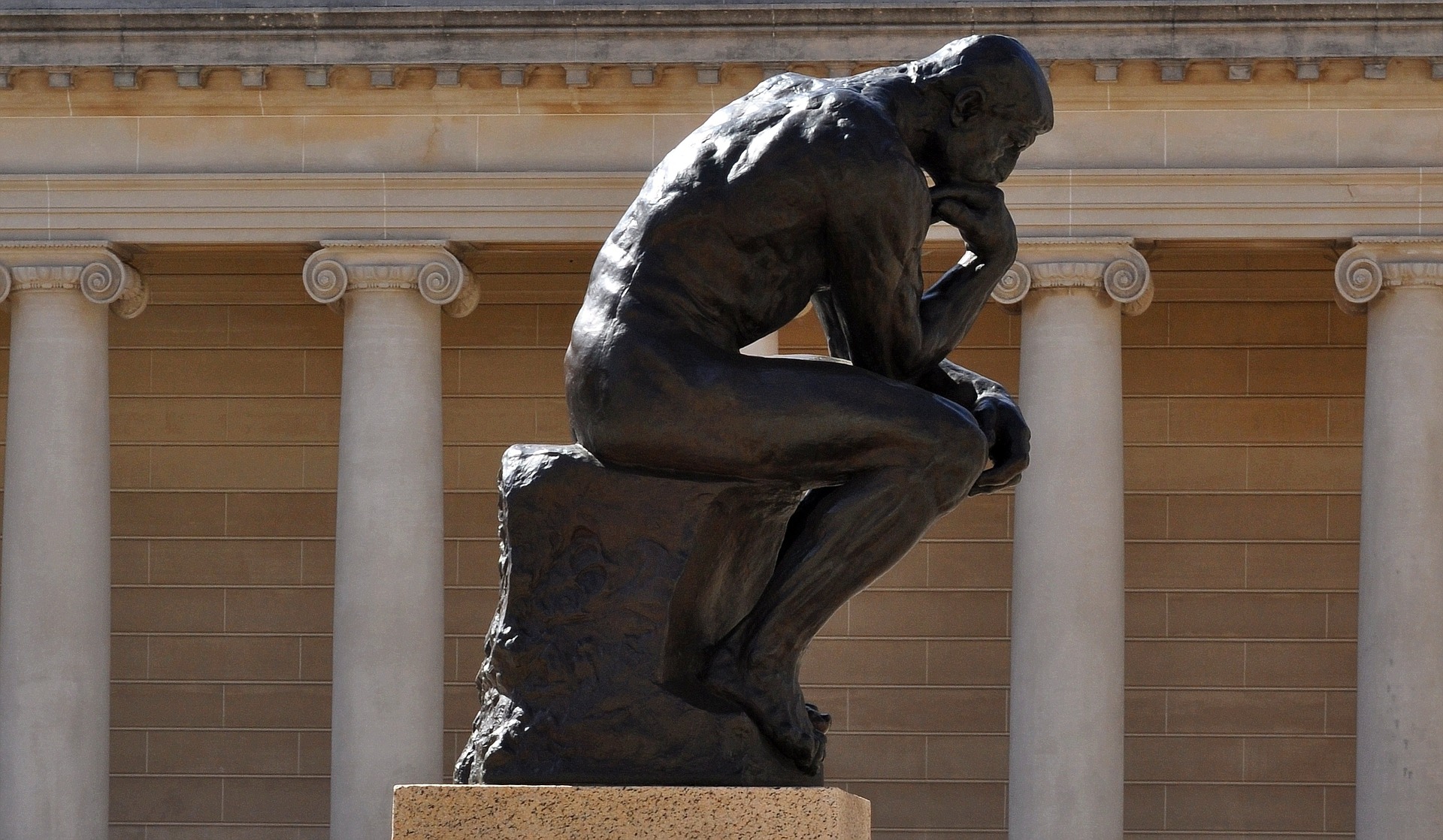

Daniel Dennett

Sure, the old Greek guys from 2,400 years ago get all the glory. But these living philosophers have a ton to say about life, the universe, and everything as it relates to right now.

Philosophers David Chalmers and Daniel Dennett argue over “philosophical zombies,” created to question the nature of human consciousness.

‘Deep learning’ AI should be able to explain its automated decision-making, but it can’t. And even its creators are at a loss as to where to begin.

Philosopher Daniel Dennett believes AI should never become conscious — and no, it’s not because of the robopocalypse.

The state of nature isn’t a “war of all against all.” Even brainless bacteria “know” that sometimes the game is “Survival of the Friendliest.”

Evolution exists and exerts itself in a different way than gravity does… because natural selection is an “algorithmic force.”

We are what we are because of genes; we are who we are because of memes. Philosopher Daniel Dennett muses on an idea put forward by Richard Dawkins in 1976.

The human mind is like a Turing machine, says Daniel Dennett. It’s made up of unthinking cogs, but when those cogs are combined in the right order, their motion gives rise to consciousness.

An old fight between philosophy and science has flared up again. Fortunately we have Rebecca Newberger Goldstein to help us sort out what’s going on.