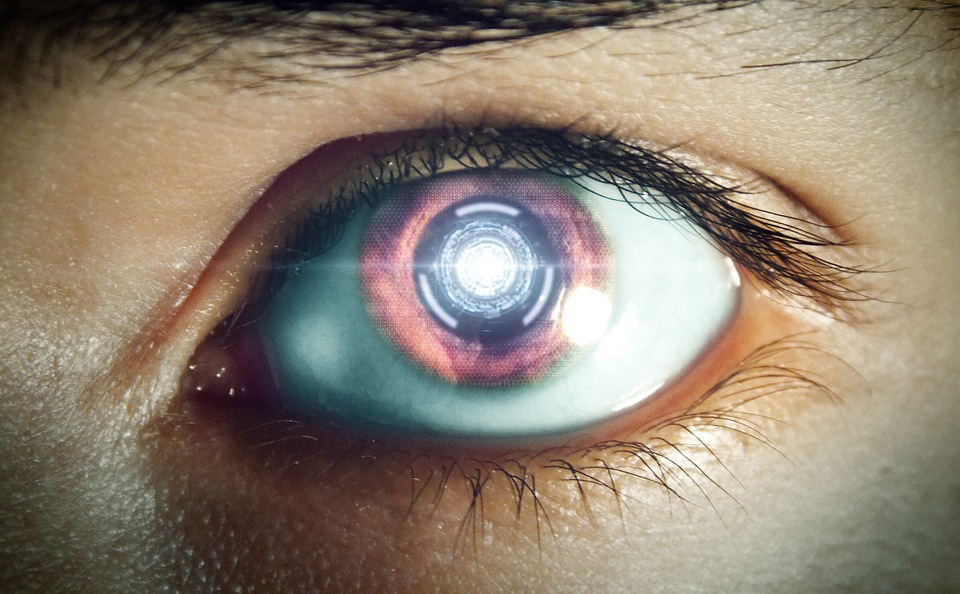

cyborg

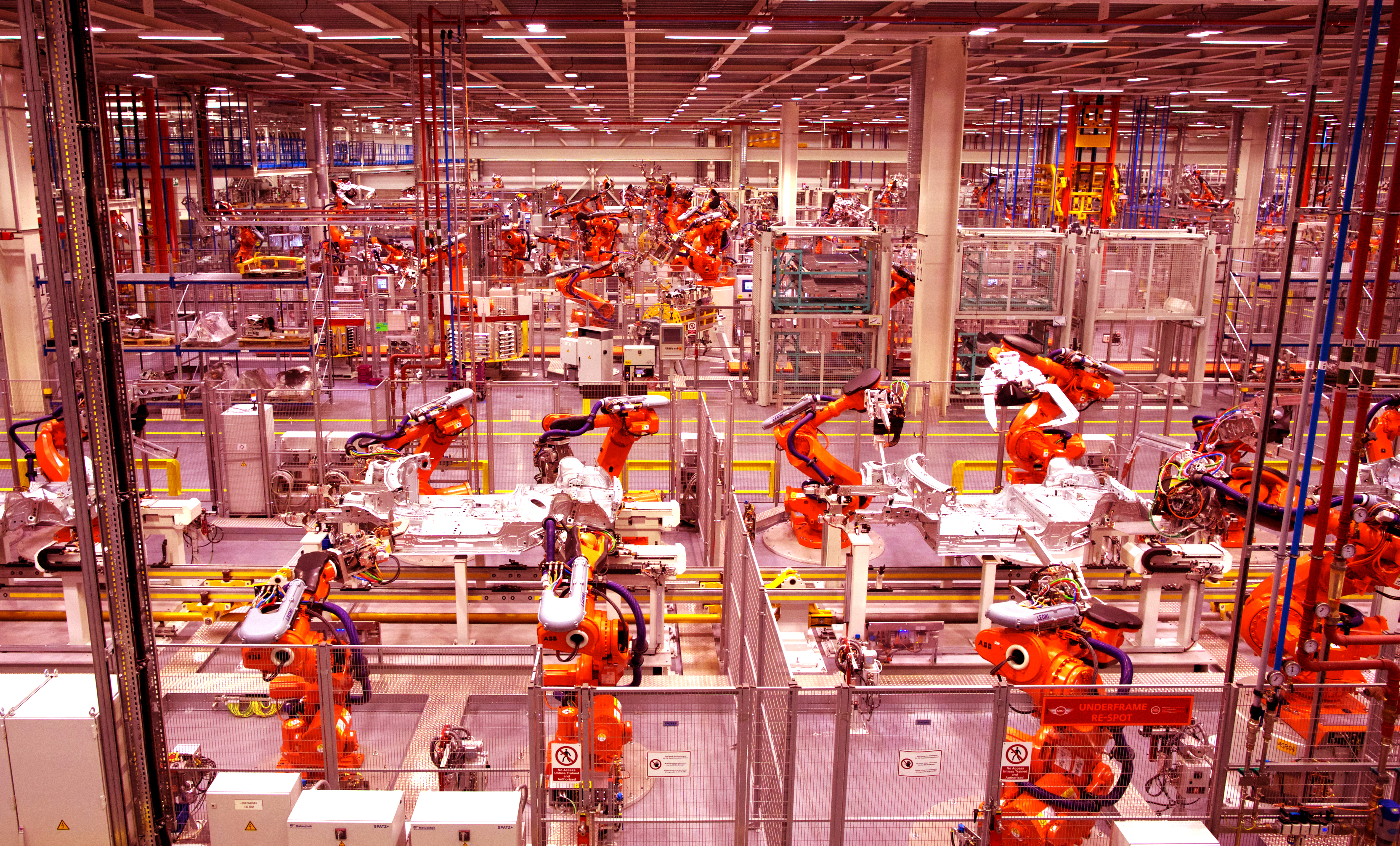

New book explores a future populated with robot helpers.

Would you ever have sex with a robot?

If A.I.s are as smart as mice or dogs, do they deserve the same rights?

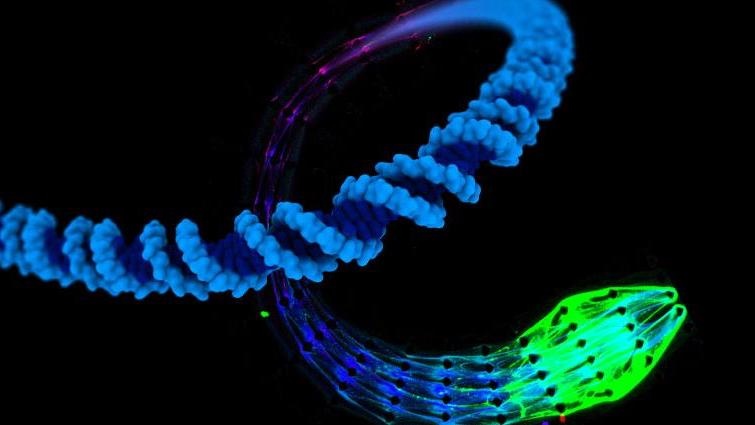

An innovation may lead to lifelike evolving machines.

Upload your mind? Here’s a reality check on the Singularity.

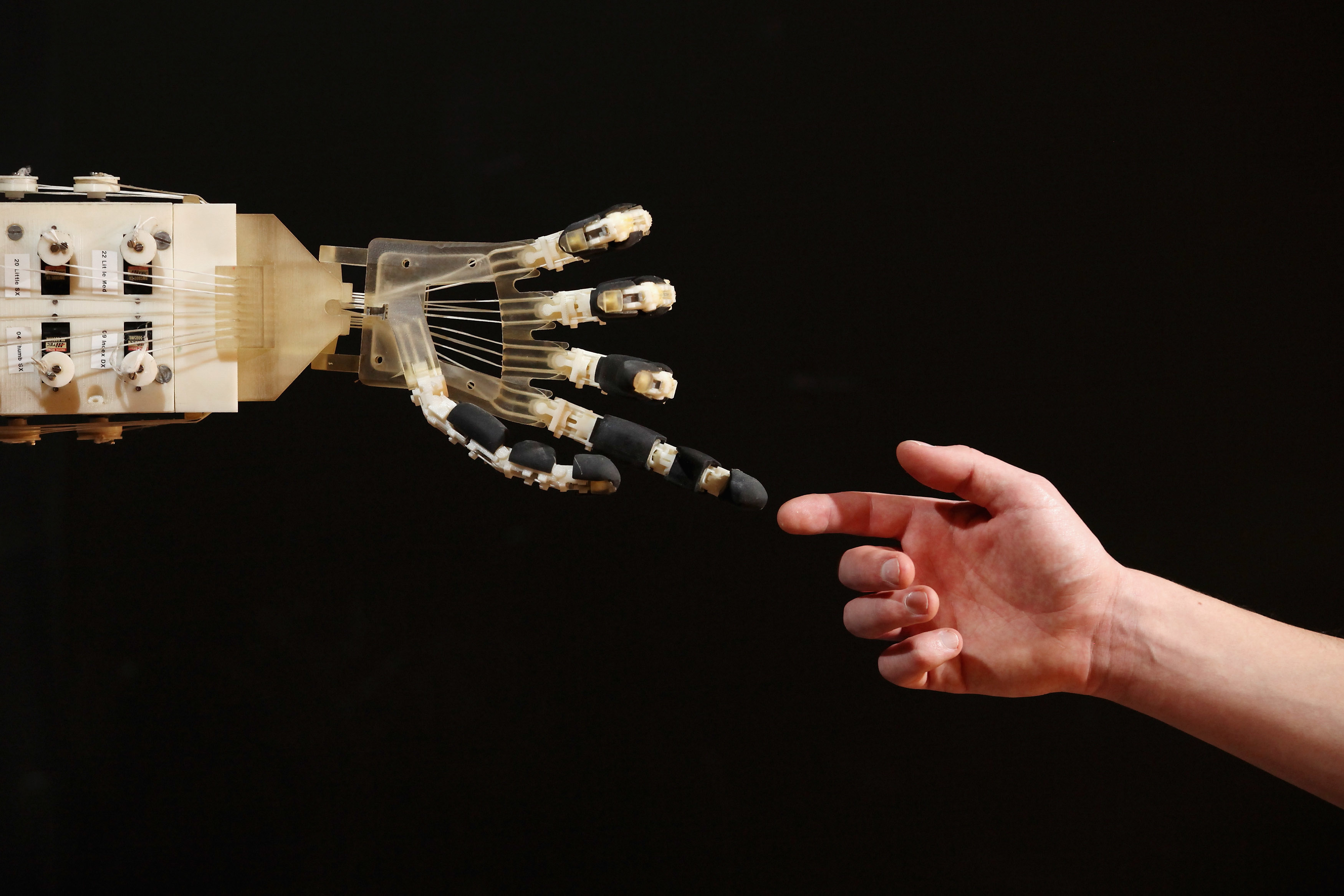

Even our most imaginative expectations of AI are only primitive — but as neuroscience understands the brain more deeply, it will unlock the full potential of hybrid intelligence.

You are already a cyborg! Here are 10 ways you could merge even more with technology in the coming decade.

At a recent conference on artificial intelligence that also included Elon Musk, Ray Kurzweil, Sam Harris, Demis Hassabis and others, philosopher and cognitive scientist David Chalmers warns of a future world dominated by AI but devoid of consciousness.

A recent conference on the future of artificial intelligence features a visionary debate among Elon Musk, Ray Kurzweil, Sam Harris, Nick Bostrom, David Chalmers, Jaan Tallinn and others.