computer science

A black swan event is rare but disruptive — and might be predictable.

A new computing theory allows artificial intelligences to store memories.

A new AI-produced commercial from Lexus shows how AI might be particularly suited for the advertising industry.

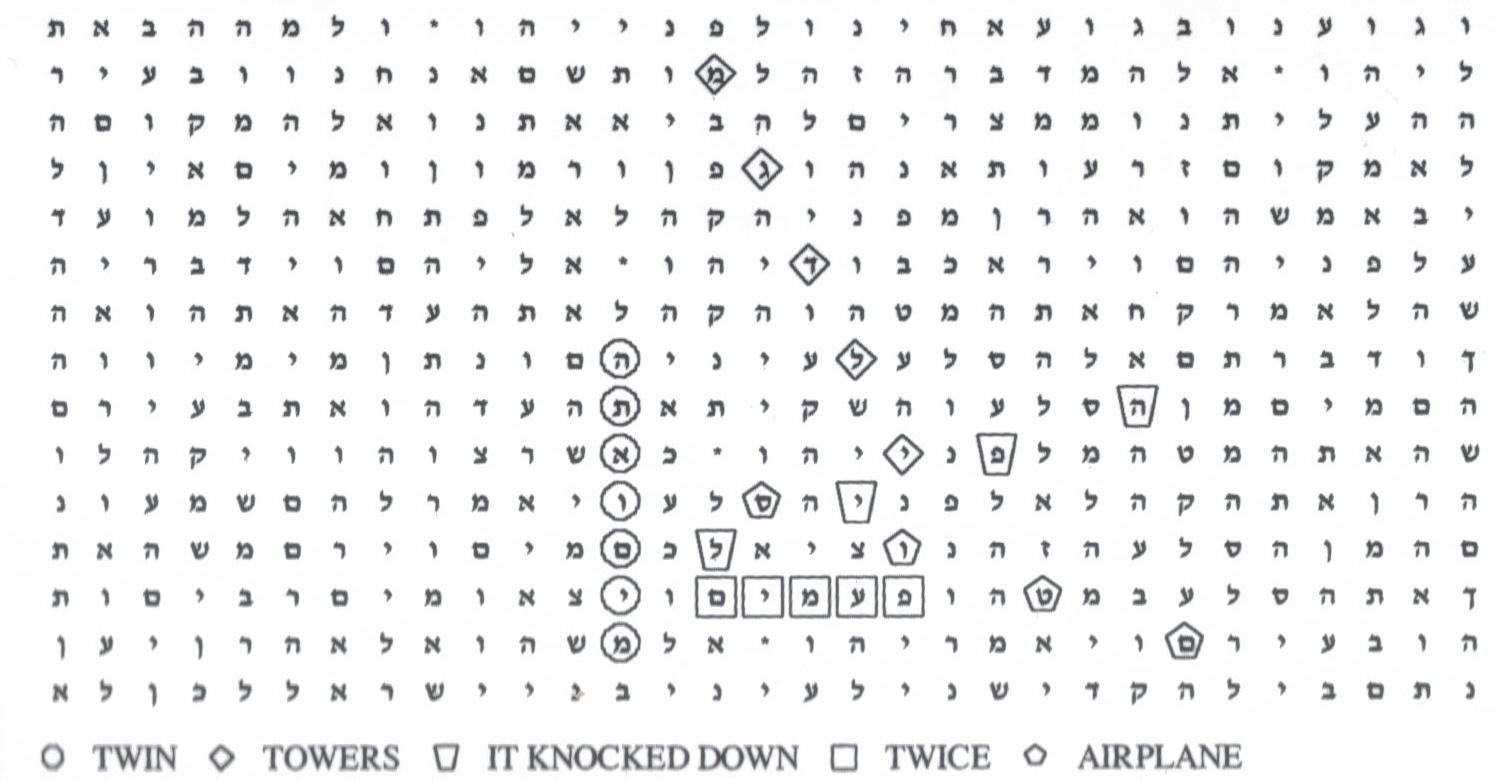

The controversy around the Torah codes gets new life.

An Ivy League education without the Ivy League price tag.

Elon Musk, Sam Harris, Ray Kurzweil and other visionaries discuss AI superintelligence at a recent conference.