causality

We can “read” genes with ease now, but still can’t say what most of them “mean.” To show why we need clearer “causology” and fitter metaphors, let’s scrutinize cars and their parts like we do bodies and genes.

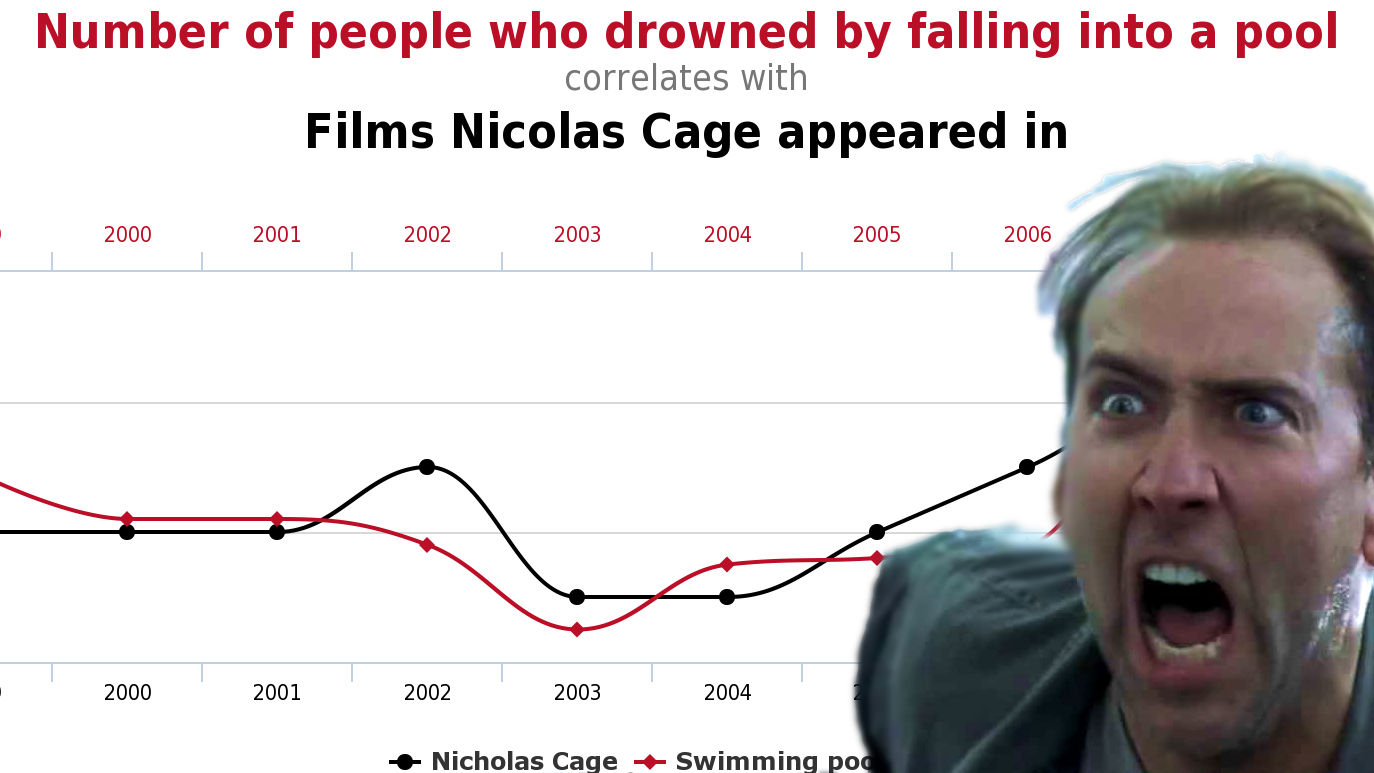

Hilarious examples that show correlation does not equal causality.