Algorithms

Algorithms dictate a lot more than your social media feeds. Here’s how to win back your agency.

6 min

Jonathan Rauch explains why the internet is so hostile to the truth, and what we can do to change that.

5 min

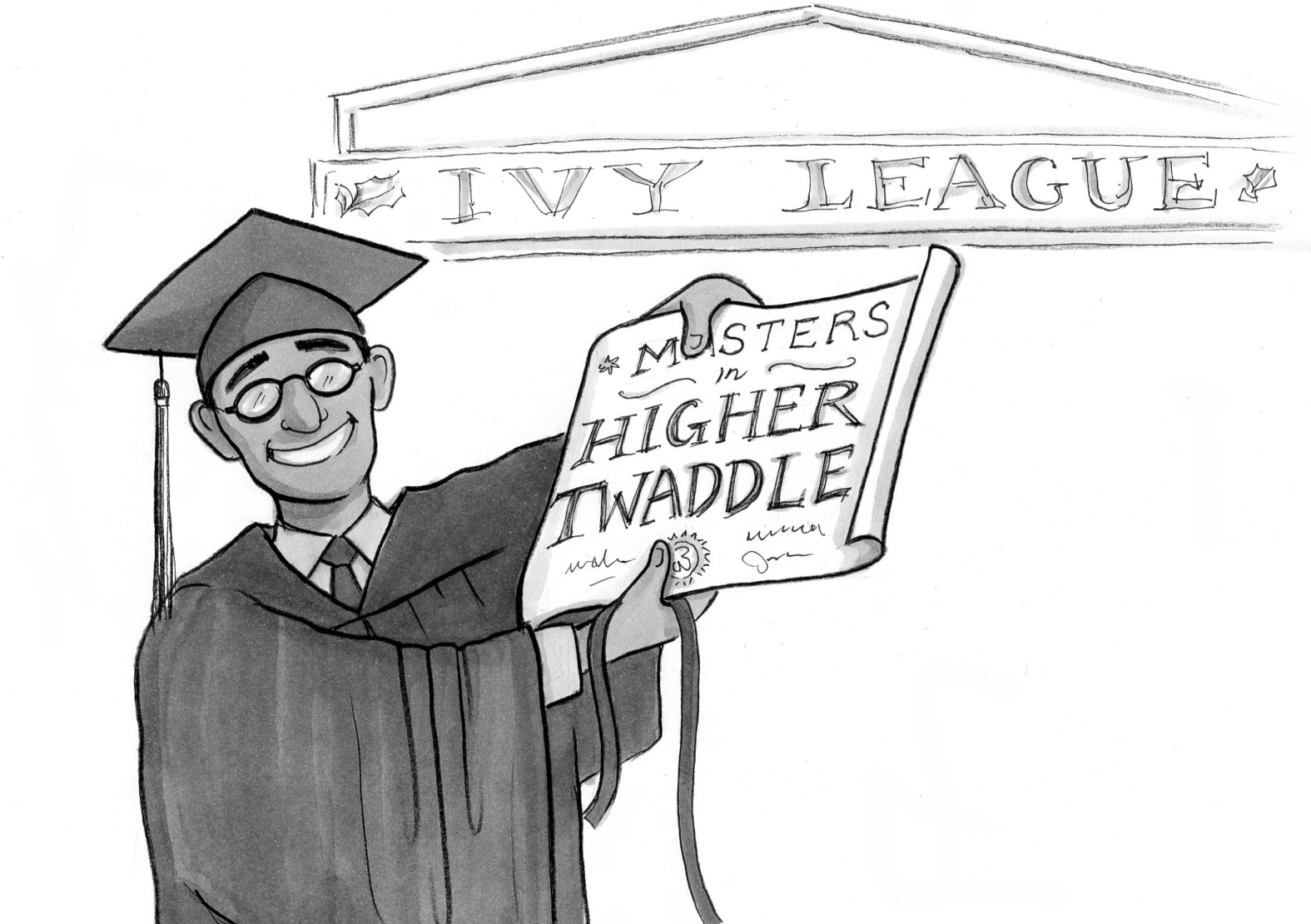

Why do some smart folk spout such bad ideas? Marilynne Robinson says it’s because we teach them “higher twaddle.” She’s right, but the situation is worse than she fears.

You mad, bro? The way that Facebook (and Twitter) manipulates our brains should be the very thing that outrages us the most.

7 min

Users don’t need better media literacy to beat fake news. We need social media to be frank about its commercial interests.

5 min

Here’s one use for all that harvested personal data that you might not object to. Algorithms and big data are no longer just for profit; they can bring us self-awareness and growth.

4 min