Big Think recently spoke with Nick Bostrom about how humans might find fulfillment in a post-scarcity world.

Jonny Thomson taught philosophy in Oxford for more than a decade before turning to writing full-time. He’s a staff writer at Big Think, where he writes about philosophy, theology, psychology,[…]

You’ve got to know when to fight and when to laugh.

Big ideas in 10 minutes or less

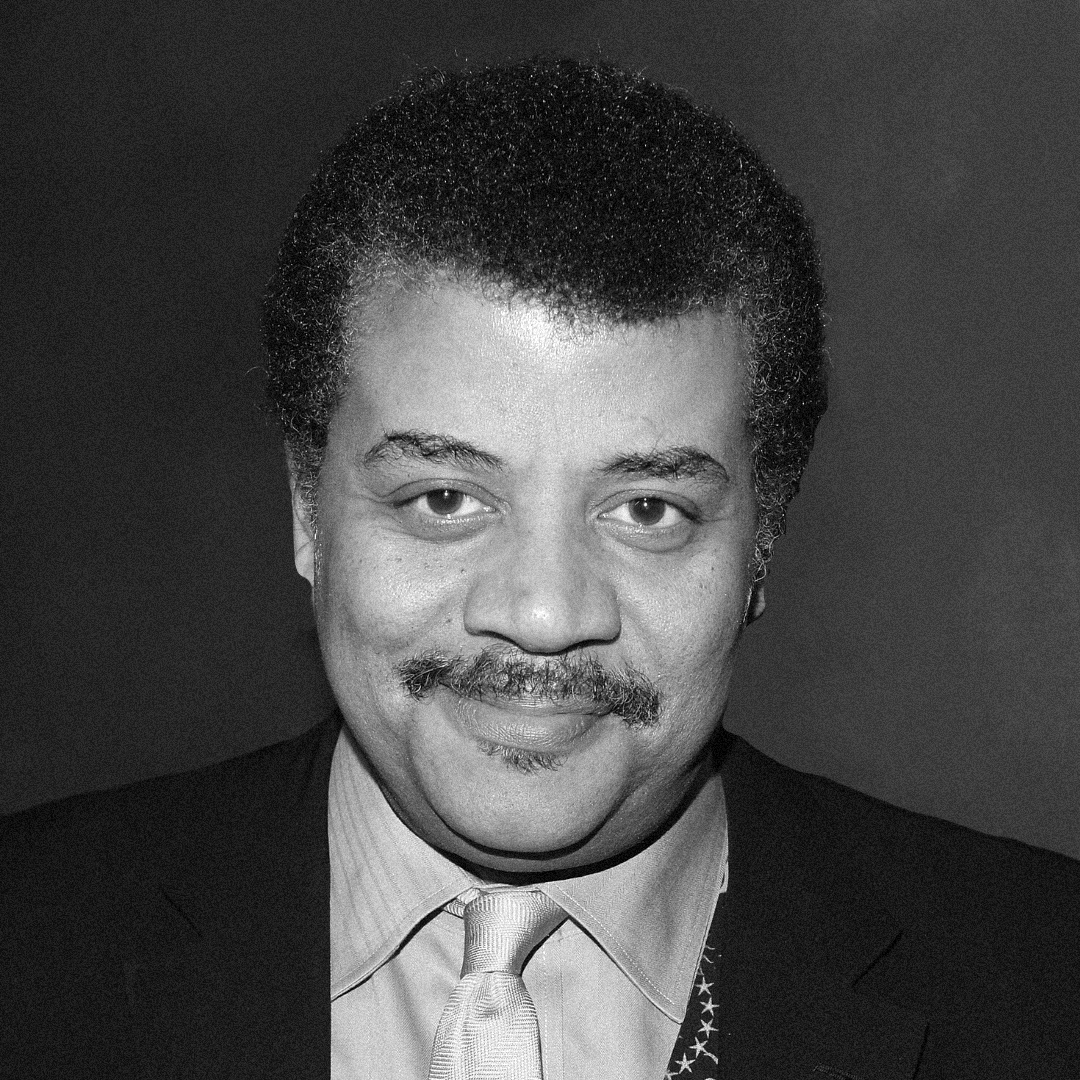

Explore our library of more than 2,000 interviews with the world’s biggest thinkers.

Learn from the world’s biggest thinkers

Get insights from thought leaders across all fields and industries.

Explore the world’s biggest questions

Dive in and think big with us.

Is free will a phantom of brain chemistry, or are we truly in control of our lives? It is a question great minds have debated for millennia.

Explore our series

Original video series from the biggest thinkers in the world.

Even the smartest people benefit from more context. These experts are here to provide just that.

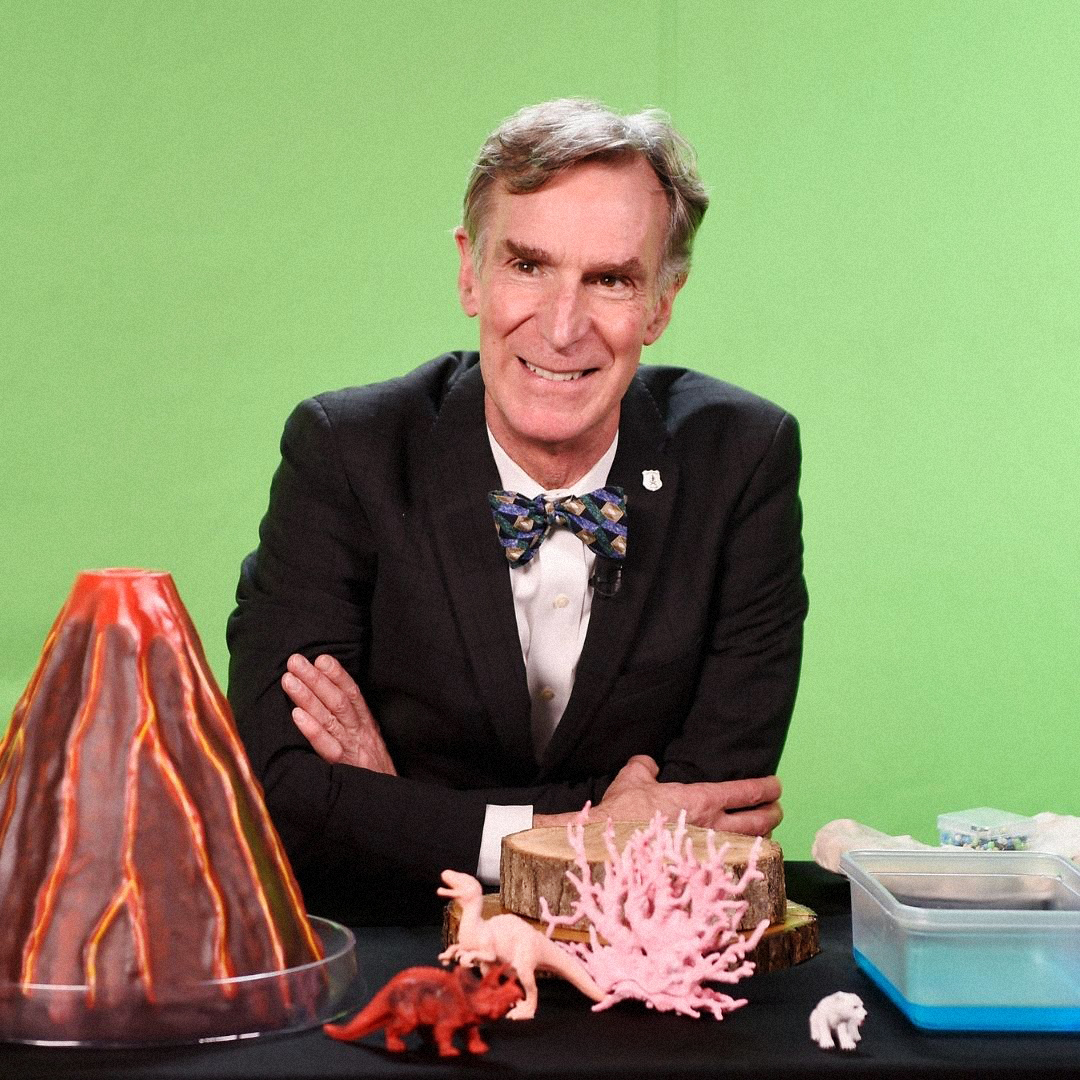

Big Thinkers join us to ruminate on a great question that they may — or may not — have pondered before.

Get Smarter, Faster.

Get Big Think+ for Business

Enable transformation at your company with Big Think+.

In an increasingly remote world, it’s more important than ever that you have a scalable, digital resource to build culture and enable transformation. Unlock potential across your organization by building the capabilities that are relevant today and will continue to be relevant in the future with Big Think+.

From leadership with Simon Sinek to design thinking with Sara Blakely, our learning content features expert insights from the world’s biggest thinkers. That’s why brands like Ford, PwC, and BMO trust Big Think to help employees learn and grow.