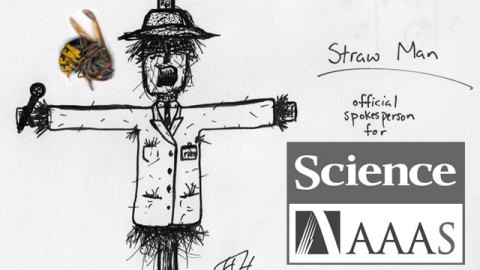

Science’s Straw Man Sting

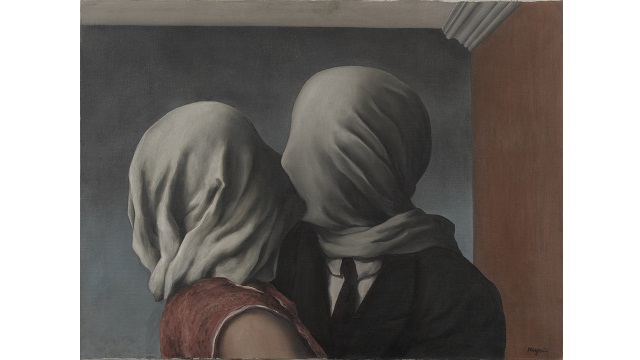

Last week Science published a “sting operation” that risks tarnishing the whole of open access publishing, even though its sample is representative of only a tiny and very specific portion of it. The sting involved submitting 304 versions of the same bogus paper to different open access journals – essentially an industrial-scale version of the Sokal affair, except that instead of targeting postmodern cultural studies, the target was open access publishing. In this post we’ll look at some of the main reasons why the sting has been broadly slated as bad science.

1. No control group – In the words of Michael Eisen, co-founder of PLoS (whose journal PLoS ONE rejected the bogus paper):

“It’s nuts to construe this as a problem unique to open access publishing, if for no other reason than the study, didn’t do the control of submitting the same paper to subscription-based publishers”

2. Bias in selection – The selection criteria meant that only 304 journals were included. Only journals with fees (2054 of the 8250 listed, a minority of open access journals) were considered, amongst a litany of other criteria, as Jeroen Bosman points out:

“Bohannan’s selection of journals to submit his paper to is based on the Directory of Open Access Journals. Even if you consider this list as complete, which it is not, the selection Bohannon made is very skewed. Only journals with fees (article processing charges) were considered, thereby throwing out 75% (leaving only 2054 of 8250 titles). This introduced a bias, because all publishers who are just in it to make easy money are concentrated in this group and not in the 75% that does not charge. Then the author threw out all journals that were not aiming at general, biological, chemical or medical science. This meant another reduction with 85% (from 2054 to 304), introducing another bias, because the pressure to publish is highest in these fields, making them prime targets of ‘predatory’ publishers. In short, by making the selections, chances are high that (unintentionally) Bohannon focussed on a group with a relatively many rotten apples.”

3. Inclusion of many known outright “criminal organisations”

121 of the papers included in the sting were sent to journals discovered through Beall’s list of known predatory publishers, described in the paper as “a single page on the Internet that names and shames what he calls ‘predatory’ publishers”. It is perhaps surprising that 35 of the journals on Beall’s blacklist actually passed the sting. In any case, the subset taken from Beall’s list, which makes up nearly half of the sample, is clearly in no way representative of open access publishing as a whole.
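To make the scale of the filtering concrete, here is a quick back-of-the-envelope sketch in Python. It uses only the figures quoted in this post (8250, 2054, 304 and 121) and takes them at face value; the variable and function names are purely illustrative and come from neither Bohannon’s paper nor Bosman’s post.

```python
# Back-of-the-envelope check of the selection funnel described above.
# All four numbers are the ones quoted in this post; the names are
# illustrative assumptions, not taken from the Science paper.
doaj_total = 8250        # titles in the Directory of Open Access Journals
fee_charging = 2054      # titles left after dropping journals without fees
targeted = 304           # journals that were actually sent the bogus paper
from_bealls_list = 121   # targeted journals found through Beall's list


def share(part, whole):
    """Return part as a percentage of whole, rounded to one decimal place."""
    return round(100 * part / whole, 1)


excluded_no_fee = share(doaj_total - fee_charging, doaj_total)
excluded_by_subject = share(fee_charging - targeted, fee_charging)
targeted_share_of_doaj = share(targeted, doaj_total)
bealls_share_of_sample = share(from_bealls_list, targeted)

print(f"Dropped for charging no fee:        {excluded_no_fee}%")
print(f"Dropped by the subject filter:      {excluded_by_subject}%")
print(f"Targeted, as a share of the list:   {targeted_share_of_doaj}%")
print(f"Beall's list, as a share of sample: {bealls_share_of_sample}%")
```

On these figures the two filters remove roughly 75% and then 85% of the journals, which matches Bosman’s numbers. The 304 targeted journals amount to under 4% of the size of the directory, and the 121 journals found through Beall’s list come to about 40% of the sample.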

4. Not peer reviewed

Ironically, the paper – about peer review – was not peer reviewed. Instead it was published in a magazine as journalism dressed up as a scientific paper. Had the paper been reviewed, a reviewer could have pointed out that something as basic as a control group was necessary, and the unsupported conclusion could have been toned down.

5. Unsupported conclusion

The argument made in the paper that “most of the players (in the realm of open access scientific journals) are murky” is simply not demonstrated by this study, because “most of the players” (75%) were excluded for not having processing charges. This fact alone demonstrates that most open access journals are not simply out for a buck, unlike many publishers, not least Science, who use mile-high paywalls to prevent you from accessing science. Of course there are a great many downright fraudulent publishers at the bottom of the barrel of open access publishing. We knew that already; we’ve covered some of the very same ones explicitly on this blog before. Nor is it as if dodgy papers aren’t published in the subscription publishing world; they seem to take centre stage on this blog. It’s noteworthy, however, that one of the open access journals that accepted the dodgy paper was owned by Sage (for profit) and another was published by Elsevier, who are known for crucifying institutions with a subscription model that deprives scientists at institutions around the world of science. They also run this gem:

Mike Taylor sums it up well:

“It’s a maze of preordained outcomes, multiple levels of biased selection, cherry-picked data and spin-ridden conclusions. What it shows is: predatory journals are predatory. That’s not news.”

The Science paper was a wonderful idea and has indeed cast a bright and important light on many known and unknown downright fraudulent publishers, but it could have been so much more. The apparatus was there: the papers were set up to be generated automatically with random names and institutions, and the automated mailer was built. The paper could have shaken the foundations of bad science where it matters; instead the author chose to state the obvious. Worst of all, the findings are stated as if they were somehow representative of the whole of open access publishing, when in fact the subset represents a very specific minority. The majority who weren’t targeted don’t deserve to be tarred with the same brush.

Read part 2 of this post here.

Image Credit: Adapted from artwork by Seth Thomas Rasmussen and Woodleywonderworks.

To keep up to date with this blog you can follow Neurobonkers on Twitter, Facebook, RSS or join the mailing list.