The birth of childhood: A brief history of the European child

Jan Steen - A School for Boys and Girls [c.1670]

It took several thousand years for our culture to realize that a child is not an object. Learning how to treat children as humans continues to this day.

“Nature wants children to be children before they are men,” wrote Jean-Jacques Rousseau in the book Emile, or On Education (1762). While Rousseau did not see children as humans, he appealed to parents to look after their offspring. “If we consider childhood itself, is there anything so weak and wretched as a child, anything so utterly at the mercy of those about it, so dependent on their pity, their care, and their affection?” he asked. At a time when children were regularly entrusted to others during adolescence or left in shelters, Rousseau’s demands seemed revolutionary. They paved the way for the breakthrough discovery that indeed, a child is also a human being, capable of feelings, having their own needs and, above all, suffering. But the philosopher himself did not take these ideas to heart. Whenever his lover and later wife, Thérèse Levasseur, gave birth to a child, Rousseau immediately gave the baby to an orphanage, where just one in a hundred newborns had a chance to live to adulthood.

Ancient cruelty

Double standards in people’s approach to children were not unusual in the past. In ancient Greece, no one condemned parents for leaving a baby by the road or in the garbage. Usually, the infant was torn apart by animals. Less often, a passer-by would take the child – not necessarily guided by mercy. After raising the orphan, the ‘Good Samaritan’ could sell the child at a slave market, recovering the money invested in their maintenance with interest. This kind of practice did not shock, because in the world of ancient Greece a child had the status of private property, and therefore the public and authorities were indifferent to their fate.

The exception was Sparta, but this did not mean anything good for minors. While in other poleis infanticide was left to parents, in Sparta it was managed by a council of tribal elders. In Life of Lycurgus, Plutarch wrote about how the child was inspected by the elders of the tribes (phylai) forming the council: “If they found it stout and well made, they gave order for its rearing, and allotted to it one of the nine thousand shares of land above mentioned for its maintenance, but, if they found it puny and ill-shaped, ordered it to be taken to what was called the Apothetae, a sort of chasm under Taygetus; as thinking it neither for the good of the child itself, nor for the public interest, that it should be brought up, if it did not, from the very outset, appear made to be healthy and vigorous.” The boys who passed the selection faced a rather short childhood – when they were seven, they were taken to the barracks, where they were trained to be excellent soldiers until they came of age.

Greek standards for dealing with children were modified slightly by the Romans. Until the second century BCE, citizens of the Eternal City followed the custom of placing each newborn baby on the ground right after delivery. If the father picked the baby up, the mother could care for it. If not, the newborn landed in the trash – someone could take the baby away or wild dogs would consume it. It was not until the end of the republic that this custom was considered barbaric and gradually began to fade. However, the tradition requiring that a young man or woman remain under the absolute authority of their father was still observed. The head of the family could even kill his offspring with impunity, although he had to consult the rest of the family about the decision beforehand.

Discovering childhood

When the Greeks and Romans did decide to look after their offspring, they showed them love and attention. In wealthier homes, special emphasis was placed on education and upbringing, so that the descendant “would desire to become an exemplary citizen, who would be able to govern as well as obey orders in accordance with the laws of justice,” as Plato explained in The Laws. According to the philosopher, children should be carefully looked after, and parents have the duty to care for their physical and mental development. Plato considered outdoor games, combined with reading fairy tales and poetry and listening to music, to be the best way to achieve this goal. Interestingly, Plato did not approve of corporal punishment as an educational measure.

The great Greek historian and philosopher Plutarch was of a similar opinion. He praised the Roman senator Cato the Elder for helping his wife to bathe their son, and for not shying away from changing the baby. When the offspring grew up, the senator spent a lot of time with the boy, studied literary works with him, and taught him history, as well as horse riding and the use of weapons. Cato also condemned the beating of children, considering it to be unworthy of a Roman citizen. As prosperity grew, this revolutionary idea became increasingly popular in the republic. The educator Marcus Fabius Quintilianus (Quintilian), in his Institutes of Oratory, described corporal punishment as “humiliating”.

Another consequence of the liberalization of customs in the first century CE was taking care of girls’ education and gradually equalizing their rights with those of boys. However, only Christians condemned the practice of abandonment of newborns. The new religion, garnering new followers in the Roman Empire from the third century onwards, ordered followers to care unconditionally for every being bestowed with an immortal soul.

This new trend turned out to be so strong that it survived even the fall of the Empire and the conquest of its lands by the Germanic peoples. Unwanted children began to end up in shelters, eagerly opened by monasteries. Moral pressure and the opportunity to give a child to the monks led to infanticide becoming a marginal phenomenon. Legal provisions prohibiting parents from killing, mutilating and selling children began to emerge. In Poland, these practices were banned in 1347 by Casimir the Great in his Wiślica Statutes.

However, as Philippe Ariès notes in Centuries of Childhood: A Social History of Family Life: “Childhood was a period of transition which passed quickly, and which was just as quickly forgotten.” As few children survived into adulthood, parents usually did not develop deeper emotional ties with their offspring. During the Middle Ages, most European languages did not even have a word for ‘child’.

Departure from violence

During the Middle Ages, a child became a young man at the age of eight or nine. According to the canon law of the Catholic Church, the bride had to be at least 12 years old, and the groom, 14. This fact greatly hindered the lives of the most powerful families. Immediately after the child’s birth, the father, wanting to increase the resources and prestige of the family, began looking for a daughter-in-law or son-in-law. While the families decided their fate, the children subject to the transaction had no say. When the King of Poland and Hungary, Louis the Hungarian, matched his daughter Jadwiga with Wilhelm Habsburg, she was only four years old. The husband chosen for her was four years older. To avoid conflicts with the church, the contract between the families was called an ‘engagement for the future’ (in Latin: sponsalia de futuro). The advantage of these arrangements was that if political priorities changed, they were easier to break than a sacramental union. This was the case with the engagement of Jadwiga, who, for the benefit of the Polish raison d’état, at the age of 13 married Władysław II Jagiełło instead of Habsburg.

Interest in children as independent beings was revived in Europe when antiquity was rediscovered. Thanks to the writings of ancient philosophers, the fashion for caring about children’s education returned. Initially, corporal punishment was the main tool in the education process. Regular beating of pupils was considered so necessary that in the monastery schools a custom arose of a spring trip to the birch grove. There, the students themselves collected a supply of sticks for their teacher for the entire year.

A change in this way of thinking came with Ignatius of Loyola’s Society of Jesus, founded in 1540. The Jesuits used violence only in extraordinary situations, and corporal punishment could only be imposed by a servant, never a teacher. The pan-European network of free schools for young people built by the order enjoyed an excellent reputation. “They were the best teachers of all,” the English philosopher Francis Bacon admitted reluctantly. The successes of the order made empiricists aware of the importance of non-violent education. One of the greatest philosophers of the 17th century, John Locke, urged parents to try to stimulate children to learn and behave well, using praise above all other measures.

The aforementioned Rousseau went even further, and criticized all the then-prevailing patterns of treating children. According to the fashion of the time, noble and rich people did not look after their children themselves, because that was what the plebs did. The newborn was fed by a wet-nurse, and then was passed on to grandparents or poor relatives who were paid a salary. The child would return home when they were at least five years old. The toddler suddenly lost their loved ones. Later, their upbringing and education was supervised by their strict biological mother. They saw the father sporadically. Instead of love, they received daily lessons in showing respect and obedience. Rousseau condemned all of this. “His accusations and demands shook public opinion, women read them with tears in their eyes. And just like it was once fashionable, among the upper classes, to pass on the baby to the wet-nurse, after Emile it became fashionable for the mother to breastfeed her child,” wrote Stanisław Kot in Historia wychowania [The History of Education]. Still, a fashion with no backing in law, one that merely alerted society to the fate of children, could not change reality.

Shelter and factory

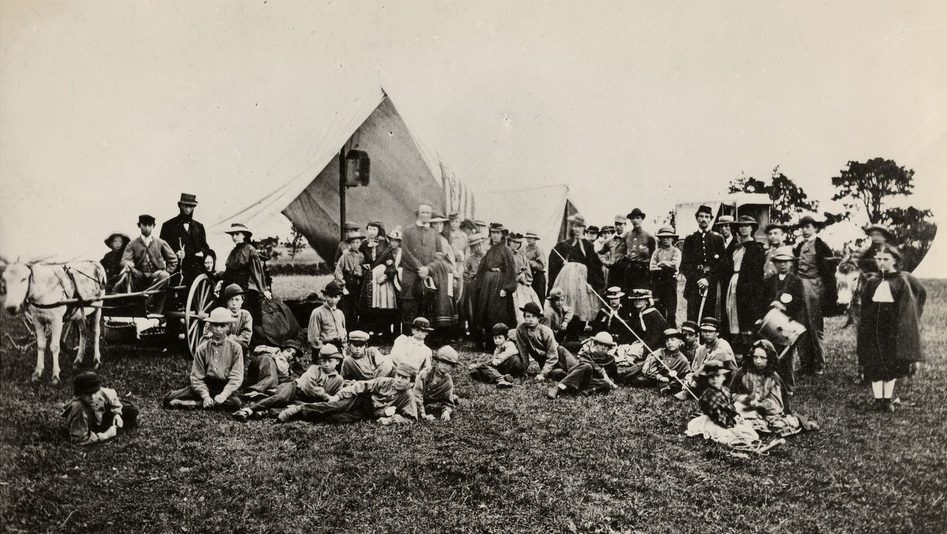

“In many villages and towns, newborn babies were kept for twelve to fifteen days, until there were enough of them. Then they were transported, often in a state of extreme exhaustion, to the shelter,” writes Marian Surdacki in Dzieci porzucone w społeczeństwach dawnej Europy i Polski [Children Abandoned in the Societies of Old Europe and Poland]. While the Old Continent elites discovered the humanity of children, less affluent residents began reproducing entirely different ancient patterns on a massive scale. In the 18th century, abandoning unwanted children again became the norm. They usually went to care facilities maintained by local communes. In London, shelters took in around 15,000 children each year. Few managed to survive into adulthood. Across Europe, the number of abandoned children in the 18th century is estimated at around 10 million. Moral condemnation by the Catholic and Protestant churches did not do much.

Paradoxically, the industrial revolution turned out to be more effective, although initially it seemed to have the opposite effect. In Great Britain, peasants migrating to the cities routinely rid themselves of bothersome progeny. London shelters were under siege, and around 120,000 homeless, abandoned children wandered the streets of the metropolis. Although most did not survive a year, those who did required food and clothes. The financing of shelters placed a heavy burden on municipal budgets. “To the parish authorities, encumbered with great masses of unwanted children, the new cotton mills in Lancashire, Derby, and Notts were a godsend,” write Barbara and John Lawrence Hammond in The Town Labourer.

At the beginning of the 19th century, English shelters became a source of cheap labour for the emerging factories. Orphans had to earn a living to receive shelter and food. Soon, their peers from poor families met the same fate. “In the manufacturing districts it is common for parents to send their children of both sexes at seven or eight years of age, in winter as well as summer, at six o’clock in the morning, sometimes of course in the dark, and occasionally amidst frost and snow, to enter the manufactories, which are often heated to a high temperature, and contain an atmosphere far from being the most favourable to human life,” wrote Robert Owen in 1813. This extraordinary manager of the New Lanark spinning mill built a workers’ estate complete with a kindergarten. It offered care, but also taught the children of workers how to read and write.

However, Owen remained a notable exception. Following his appeal, in 1816 the British parliament set up a special commission, which soon established that as many as 20% of workers in the textile industry were under 13 years old. There were also spinning mills where children constituted 70% of the labour force. As a standard, they worked 12 hours a day, and their only day of rest was Sunday. Their supervisors maintained discipline with truncheons. Such daily existence, combined with the tuberculosis epidemic, did not give the young workers a chance to live for too long. Owen and his supporters’ protests, however, hardly changed anything for many years. “Industry as such is seeking new, less skilled but cheaper, workers. Small children are most welcome,” noted the French socialist Eugène Buret two decades later.

Emerging morality

Among the documents available in the British National Archives is the report of a government factory inspector from August 1859. He briefly described the case of a 13-year-old worker, Martha Appleton, from a Wigan spinning mill. Working in unhealthy, inhumane conditions, the girl fainted on the job, most likely from fatigue, as the inspector suspected. Her hand became caught in an unguarded machine and all her fingers on that hand were severed. Since her job required both hands to be fast and efficient, the factory owner decided the next day that such a defective child would be useless and dismissed her, noted the inspector.

Where a single man once worked, one now finds several children or women doing similar jobs for poor salaries, warned Eugène Buret. This state of affairs began to alarm an increasing number of people. The activities of the German educator Friedrich Fröbel had a significant impact on this: he visited many cities and gave lectures on returning children to their childhoods, encouraging adults to provide children with care and free education. Fröbel’s ideas contrasted dramatically with press reports about the terrible conditions endured by children in factories.

The Prussian government reacted first, and as early as 1839 banned the employment of minors. In France, a similar ban came into force two years later. In Britain, however, Prime Minister Robert Peel had to fight the parliament before peers agreed to adopt the Factory Act in 1844. The new legislation banned children below 13 from working in factories for more than six hours per day. Simultaneously, employers were required to provide child workers with education in factory schools. Soon, European states discovered that their strength was determined by citizens able to work efficiently and fight effectively on the battlefields. Children mutilated at work were completely unfit for military service. At the end of the 19th century, underage workers finally disappeared from European factories.

In defence of the child

“Mamma has been in the habit of whipping and beating me almost every day. She used to whip me with a twisted whip – a rawhide. The whip always left a black and blue mark on my body,” 10-year-old Mary Ellen Wilson told a New York court in April 1874. Social activist Etta Wheeler stood in defence of the girl battered by her guardians (her biological parents were dead). When her requests for intervention were repeatedly refused by the police, the courts, and even the mayor of New York, the woman turned to the American Society for the Prevention of Cruelty to Animals (ASPCA) for help. Its president Henry Bergh first agreed with Miss Wheeler that the child was not her guardians’ property. Using his experience fighting for animal rights, he began a press and legal battle for little Wilson. The girl’s testimony published in the press shocked the public. The court took the child from her guardians, and sentenced her sadistic foster mother to a year of hard labour. Mary Ellen Wilson came under the care of Etta Wheeler. In 1877, her story inspired animal rights activists to establish American Humane, an NGO fighting for the protection of every harmed creature, including children.

In Europe, this idea found more and more supporters. Even more so than among the aristocrats, the bourgeois hardly used corporal punishment, as it was met with more and more condemnation, note Philippe Ariès and Georges Duby in A History of Private Life: From the Fires of Revolution to the Great War. At the same time, the custom of entrusting the care of offspring to strangers fell into oblivion. Towards the end of the 19th century, ‘good mothers’ began to look after their own babies.

In 1900, Ellen Key’s bestselling book The Century of the Child was published. The Swedish teacher urged parents to provide their offspring with love and a sense of security, and to limit themselves to patiently observing how nature takes its course. However, her idealism collided with another pioneering work by Karl Marx and Friedrich Engels. The authors postulated that we ought to “replace home education by social”. The indoctrination of children was to be dealt with by school and youth organizations, whose aim was to prepare young people to fight the conservative generation of parents for a new world.

Did the 20th century bring a breakthrough in how children are treated? In 1924, the League of Nations adopted a Declaration of the Rights of the Child. Its preamble stated that “mankind owes to the child the best that it has to give.” This is an important postulate, but sadly it is still not implemented in many places around the world.

Translated from the Polish by Joanna Figiel

Reprinted with permission of Przekrój.