9 folks who were way ahead of their time

- Sometimes, people are so far ahead of the curve that it takes everybody else hundreds of years to catch up to their ideas.

- While many people are content to quietly sit back and flow with popular opinion, these nine thinkers let the world know what it was doing wrong, often with major consequences.

- These great thinkers remind us that taking an unpopular, bold stance might not be madness.

It’s been said that being one step ahead of the crowd makes you a genius, but being two steps ahead makes you a crackpot. In some cases, people were so far ahead of their time that they would seem progressive even today, despite hundreds of years of history slowly working to catch up to them.

Here, we have nine scientific and social visionaries who were well ahead of everybody else. The names of others like them have been lost to history, buried under the weight of popular opinion. These bold few are the ones we know about.

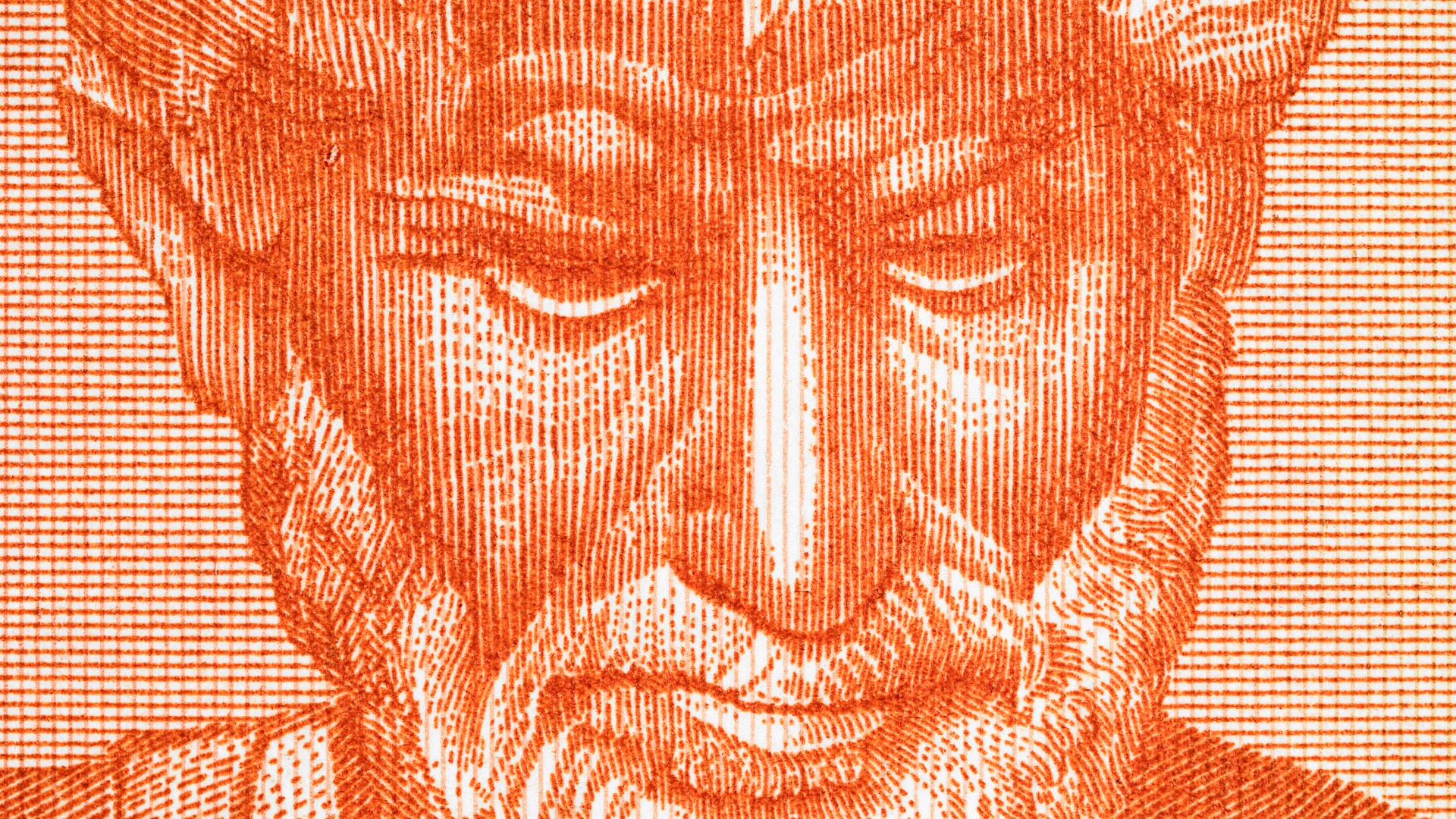

Dante, 1450, painted by Andrea del Castagno.

(Photo by: Picturenow/UIG via Getty Images)

Dante Alighieri

The author of the Divine Comedy, Dante had more than his share of ideas that were well ahead of the 14th century.

The first and most famous part of the comedy, Inferno, slips a serious jab at Catholic teachings past the radar. In the story, sodomites are placed in the same circle of hell as murderers, in line with church teachings. Dante, however, expresses sympathy for the damned here that is absent in other chapters.

The sequel to Inferno, which features purgatory, also depicts homosexual characters in a favorable light, implying that Dante didn’t consider it a sin to be gay. Historian John Boswell called Dante’s treatment of the subject “revolutionary” in comparison to theological consensus at the time.

Dante also wrote books on political philosophy which were a few centuries early. In De Monarchia he argued for separating secular government from religious authority and called for a universal monarchy to unite all secular governments in the interests of peace.

Hero’s Engine.

(Public Domain/Wikimedia commons)

Hero of Alexandria

An inventor who nearly touched off the industrial revolution two thousand years early, Hero has several fantastic credits to his name. He invented the windmill, the vending machine, and the automatic door.

He is best known for his description of an aeolipile, an early steam engine. It is a simple device consisting of a boiler with two jets. When the boiler is heated, steam escapes through the jets and causes the whole thing to spin. The device, often called ‘Hero’s Engine’, was described by him in the 1st century C.E., but may date back earlier.

The aeolipile was first used to demonstrate the power of the weather but was later used as a temple curiosity. While some historians argue Hero understood its possible uses, this is controversial. It wasn’t until 1543 that we can confirm that anybody came up with the idea to attach the engine to something and do work with it.

Woodhull presidential campaign.

(Photo by Hulton Archive/Getty Images)

Victoria Claflin Woodhull

Victoria Woodhull was the first woman to run for the office of President of the United States, and her platform would seem radical even today. She ran before any woman could have voted for her, though Susan B. Anthony famously tried to cast a ballot.

Running for the Equal Rights Party, Woodhull campaigned for labor rights, progressive taxation, equal rights for men and women, free love, an international system of preventing war by arbitration of disputes, total employment through public works projects, and the end of the death penalty.

The Equal Rights Party also nominated Frederick Douglass for vice president; he never acknowledged it and campaigned for President Grant. Woodhull received a negligible number of votes and was too young to take office anyway, but still has the distinction of being the first woman to run.

Her progressive stances didn’t end there; her personal life shocked the Victorian moralists of her day. She and her sister were the first women to be stockbrokers on Wall Street. They ran a newspaper that discussed sexual double standards, how long a skirt needed to be, vegetarianism, and other social issues. It also featured the first English printing of Marx’s Communist Manifesto in the United States. She was also a supporter of free love during her more radical years, though she later walked that position back.

Madam de Pizan giving a lecture.

(Public Domain)

Christine de Pizan

An Italian poet writing in France during the 14th century, Christine de Pizan was a celebrity with big ideas in her own time. Simone de Beauvoir called her works “the first time we see a woman take up her pen in defense of her sex.” She was the first professional woman of letters in European history.

Left without an income source after the death of her husband and father, she embarked on a writing career at a time when nearly all other female writers wrote under pseudonyms. She wrote love poems, biographies, and prose works.

Most noteworthy is The Book of the City of Ladies, a story of Christine using the achievements of famous women in history to build a city. In the book, she argues by allegory that men and women were both equally capable of goodness, a radical notion at the time. She also claimed that women should be educated and wrote an accompanying manual for it, another stunning departure from medieval practice. Her books remained in print for two centuries.

Ada Lovelace as depicted by Alfred Edward Chalon.

(Photo by Hulton Archive/Getty Images)

Ada Lovelace

The daughter of Lord Byron, Lovelace was directed towards math and science by her mother out of fear that she would otherwise turn out like her father. While science didn’t save her from an early death, it did allow her to become the first computer programmer in history.

In 1842, she translated into English an article about an incomplete mechanical computer devised by Charles Babbage. At the end of the article, she added a series of notes which included the algorithm necessary for the machine to compute Bernoulli numbers, the first published computer program. In the same section, she argued that artificial intelligence was impossible, explaining that the device could only act as ordered.

In addition to being the first person to write computer code, she was the first person to realize how much computers could do. Computer historian Doron Swade argues that she was the earliest person to understand that the numbers a computer was crunching could represent anything, not just quantities. This jump, which nobody else at the time made, predicted our current use of computers as more than mere calculators.

Descartes.

(Hulton Archive/Getty Images)

Rene Descartes

A famous French scientist and philosopher, Descartes was also a few hundred years early on one of his inventions.

After reviewing an idea for improving vision pitched by Leonardo da Vinci, Descartes invented the contact lens. Consisting of a glass tube filled with liquid and placed directly on the eye, it was able to correct for vision problems. However, it was so large that it made blinking impossible. The first practical contact lenses would not be invented for another 250 years.

This was on top of Descartes’s successful career inventing modern philosophy, fusing algebra and geometry, and laying the foundations for the invention of calculus, which happened shortly after his death.

Marcus Aurelius

The last of the Five Good Emperors of Rome, Marcus Aurelius was a Stoic philosopher whose ideas on life and governance make for great reading.

His excellent rule was progressive on many fronts. His dedication to free speech was particularly noteworthy. He wrote in Meditations of the nobility of “the idea of a polity in which there is the same law for all, a polity administered with regard to equal rights and equal freedom of speech, and the idea of a kingly government which respects most of all the freedom of the governed.”

He practiced what he preached and ignored satirical depictions of him when he could just as easily have killed the people making fun of him. While he wasn’t the only person holding this stance, he was one of the few people to allow such liberties before the modern era. His statement is held as one of the ancient origins of liberal political philosophy.

(Edward Gooch/Edward Gooch/Getty Images)

Jeremy Bentham

The founder of utilitarian philosophy, Bentham was an avid reformer during his lifetime, and his philosophy has inspired many people who worked for social justice long after his mummification.

One of his first reform efforts was the creation of a better prison, the Panopticon. The design featured a single watchtower surrounded by cells, which were arranged in a circle. Bentham proposed that since every prisoner could be seen at any time, all prisoners would behave themselves. The building was never constructed, though Michel Foucault remarked that the core concept spread throughout the criminal justice system and every other part of our society.

Bentham, convinced that the rejection of the Panopticon was caused by a conspiracy against the public, set his sights on reforming everything else. During his lifetime he argued for animal rights, women’s rights, and law reform. A paper arguing against the criminalization of homosexual acts was published after his death, making him the first person in England to write an essay in support of gay rights.

He is still ahead of the UK on the issue of no-fault divorce, which he supported and they still haven’t gotten around to.

Chanakya

Chanakya was an Indian statesman, philosopher, and economist in the 4th century BCE who was one of the architects of the Mauryan Empire.

His treatise, the Arthashastra, was thought to be lost until the 20th century and has been favorably compared to Machiavelli’s The Prince. Unlike the European work, the Arthashastra encourages a king to rule justly and empower the people he rules.

Several points in the book would be considered progressive today. He argues for giving welfare to those who cannot work, giving out land to the peasants if the landed elite aren’t using it, a mixed economy, conservation, and giving animals that have worked their entire lives a comfortable retirement.