It’s the age of automation and the robots are coming. But for what?

Photo credit: Hugo Amaral/SOPA Images/LightRocket via Getty Images

- Journalist Andrés Oppenheimer, a columnist and member of a Pulitzer Prize-winning team, explores the cutting edge of automation.

- From South Korean robot schools to Silicon Valley futurists’ predictions and automated Japanese restaurants, this book shows that the future of work is almost here.

- Automation is already replacing a growing number of workers while also creating new roles, making the concept of employment more dynamic than ever.

Alarmed and somewhat intrigued by a University of Oxford study estimating that 47 percent of U.S. jobs were at high risk of being replaced by robots or intelligent computers, journalist Andrés Oppenheimer set out to discover what the future of work held for the potential casualties and beneficiaries of this new era.

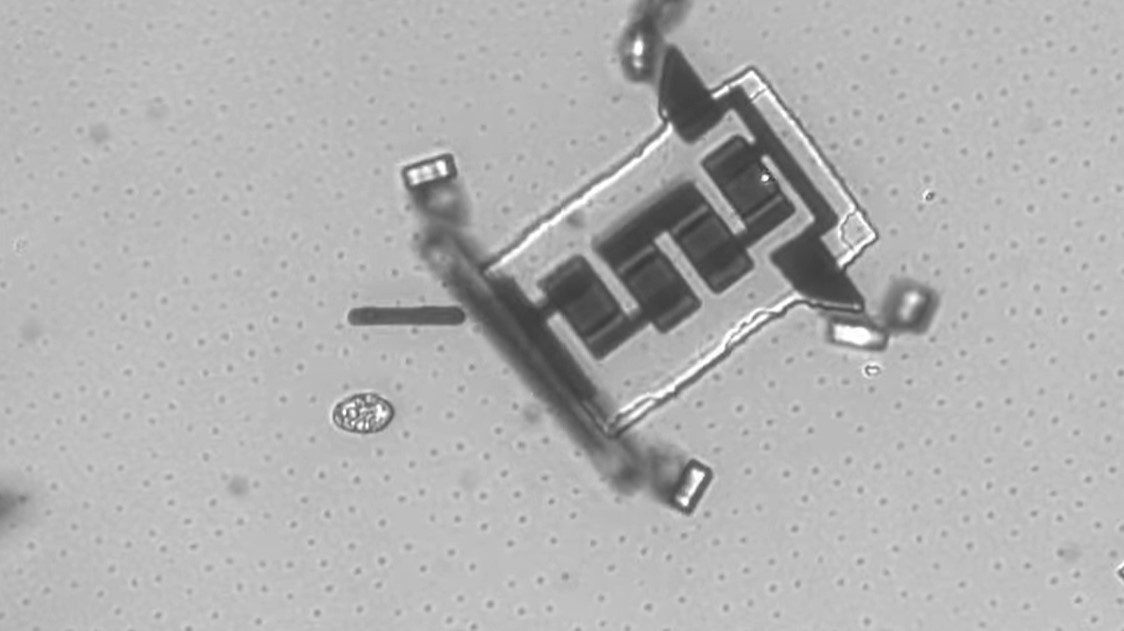

Robotics and other automated processes are already radically changing the nature of what we consider work and employment. In past eras of paradigm-shifting technology, entire workforces recovered quickly and moved into burgeoning new fields. The coming age of automation won’t be as seamless a transition.

In *The Robots Are Coming! The Future of Jobs in the Age of Automation*, Oppenheimer casts a wide net in a broad, multidisciplinary search for what’s to come. The result of years of tenacious research, experiences and thrilling conversations, this book leaves no tech stone unturned.

Without devolving into a buzzword-laden jargon fest, Oppenheimer sketches out and name-drops nearly every technology that experts and pundits alike think will usher in the new age.

Whether it’s machine learning, A.I., augmented and virtual reality or the need for a universal basic income, this book name-checks each topic and then tackles it from all fronts. Is it hype? Where are we in terms of implementation? What do the experts say, and what do the detractors think? How will this affect the job market and notions of employment?

The Robots Are Coming!

What’re they coming for? Everything.

Timeframes, statistics and opinions tended to vary depending on whom the author was talking to at the time. The book cites many instances that run counter to common fears of automation displacing jobs. For example, in 2016 Amazon increased its fleet of transport robots from 30,000 to 45,000. Observers at the time expected this to cost jobs. Instead, more than 100,000 new jobs were added over the following 18 months.

Today, employment increases like these are relatively common. But they are matched by a host of jobs lost to automation across all industries, and those losses aren’t confined to low-level labor and service jobs. They affect every level of work.

Head up to the high towers of Wall Street and you’ll even see financial professionals replaced by robo-analysts using big data. These aren’t displacing the lowest rungs of the workforce; they’re knocking out big-time financial advisors who used to make an average of $350,000 to $500,000 a year.

Even tasks in professions such as journalism and law aren’t safe from being handed over to automation. Oppenheimer remarked that in just the past few years, the stunning speed of automated transcription services had completely changed the way he conducted interviews. The book’s own interviews were transcribed and largely translated using A.I. tools.

Bots are also writing a growing number of articles, thanks to technologies such as Heliograf, the Washington Post’s in-house automated storytelling tool. Covering local elections would once have taken hundreds of journalists; instead, the job was done by a single template-driven bot. In 2016, the Washington Post was able to cover more than 500 local elections with this technology.

If one thing is perfectly clear, it’s that automation and intelligent computers are leaving nothing untouched and popping up in the least expected places. Understandably, this has a lot of people worried.

Anders Sandberg of Oxford put it humorously, but nonetheless sincerely, this way:

It’s quite simple: if your job can be easily explained, it can be automated; if it can’t, it won’t.

The future of work is going to require a massive shift in skills, mindset and know-how. Soft skills, the ability to work with a steady flow of interactive data, and the ability to draw actionable insights from a data-driven world are just some of the traits the future workforce will need.

Those who don’t make the cut will need to rethink the psychological and cultural notion of work and employment in the first place. Many futurists, serious economists and, at times, the author himself firmly believe that a universal basic income will need to be implemented.

A new mindset for the future

In an interview with philosopher Nick Bostrom, Oppenheimer discusses the sense of importance and self-worth that so many people derive from their jobs. Bostrom believes this is a new phenomenon and one of the major social problems we’ll have to face.

Bostrom points out that the aristocratic classes of old were able to live worthwhile lives without working, by pursuing pleasurable and fulfilling experiences. The implication of his remarks is that a similar shift in mindset will need to take place across a much larger segment of the population. With the prospect of a future in which much of humanity isn’t needed for work, we need to seriously reconsider the human enterprise and the notion of self-worth tied to employment.

Utopian futurist ideals aside, the nature of schooling, vocational work and employment seems to be following an age-old trend: relentless progress always rears its head and usurps the status quo. Work will change with the times in absurdly novel ways that neither this book nor anyone else alive today will be able to predict.

Oppenheimer notes that jobs such as iPhone developer and cloud data analyst emerged from our most recent inventions and innovations. Less than two decades ago, these job titles would have been gibberish to anyone hearing them. The same will hold true for the jobs of the next few decades.

There are a number of things that no foreseeable robotic intelligence will ever be able to compete with. Forget fantasy notions of the singularity and end-of-days scenarios brought on by superintelligence; those are an entirely different worry. The reality of the situation is that new jobs are coming, and a whole lot of jobs we’ve had for years are never going to return.

Dealing with our inability to reskill a large share of the population will be a major problem in the coming years.

The author sees himself as a techno-optimist in the long run but a techno-pessimist in the short term.

If there’s one final takeaway from this book, it’s that the threat, or rather the promise, of automation is real and inevitable. There’s no use fighting against it. The only thing we can do is evolve alongside it.