The Singularity and Its Discontents

Bioethicist Paul Root Wolpe questions the basic premise of the Singularity concept, arguing that it “misunderstands the complex nature of biological and physical life.”

In February 2011, a powerful IBM computer called Watson defeated Jeopardy! champions Ken Jennings and Brad Rutter. Immediately, the media went crazy with headlines about machines obliterating mankind. As a media professional, I am not at all surprised by this – the more powerful our machines become, the more our attitudes toward them polarize between paranoia on the one hand and weak-kneed submission on the other. Playing upon those fears and desires is a guaranteed attention-getter – the stuff of multi-digit pageviews and retweets.

What’s the Big Idea?

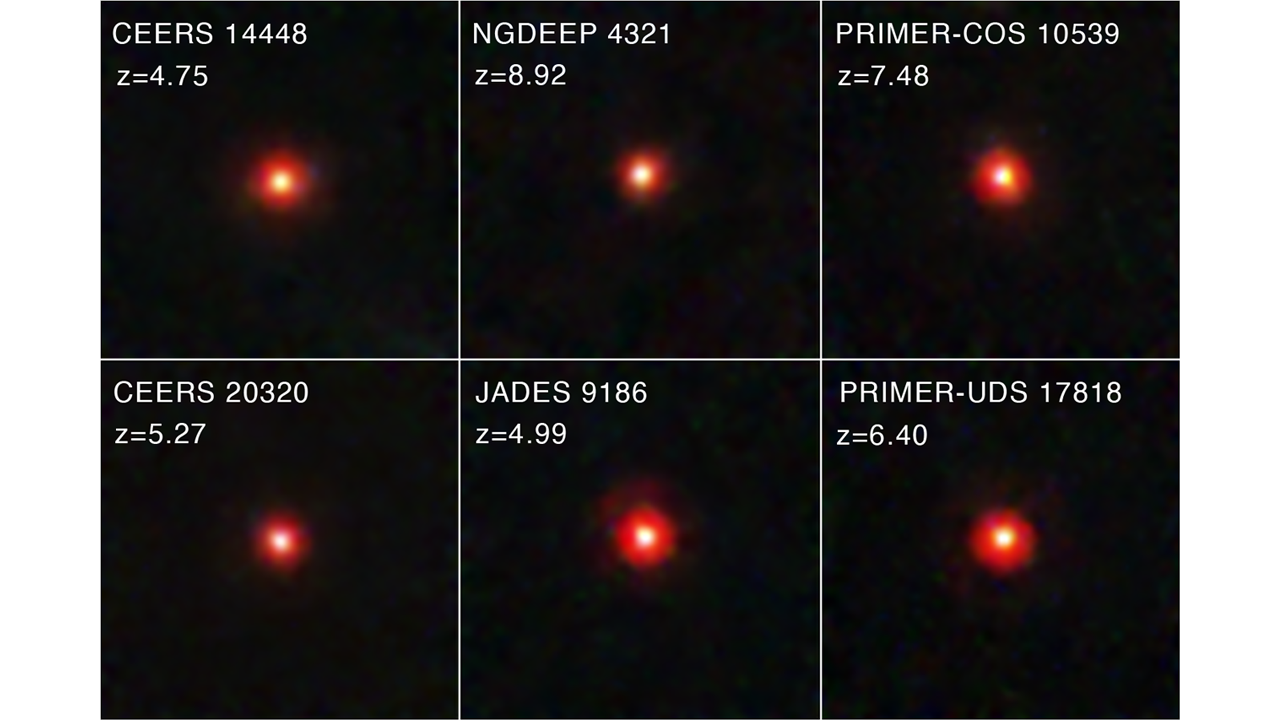

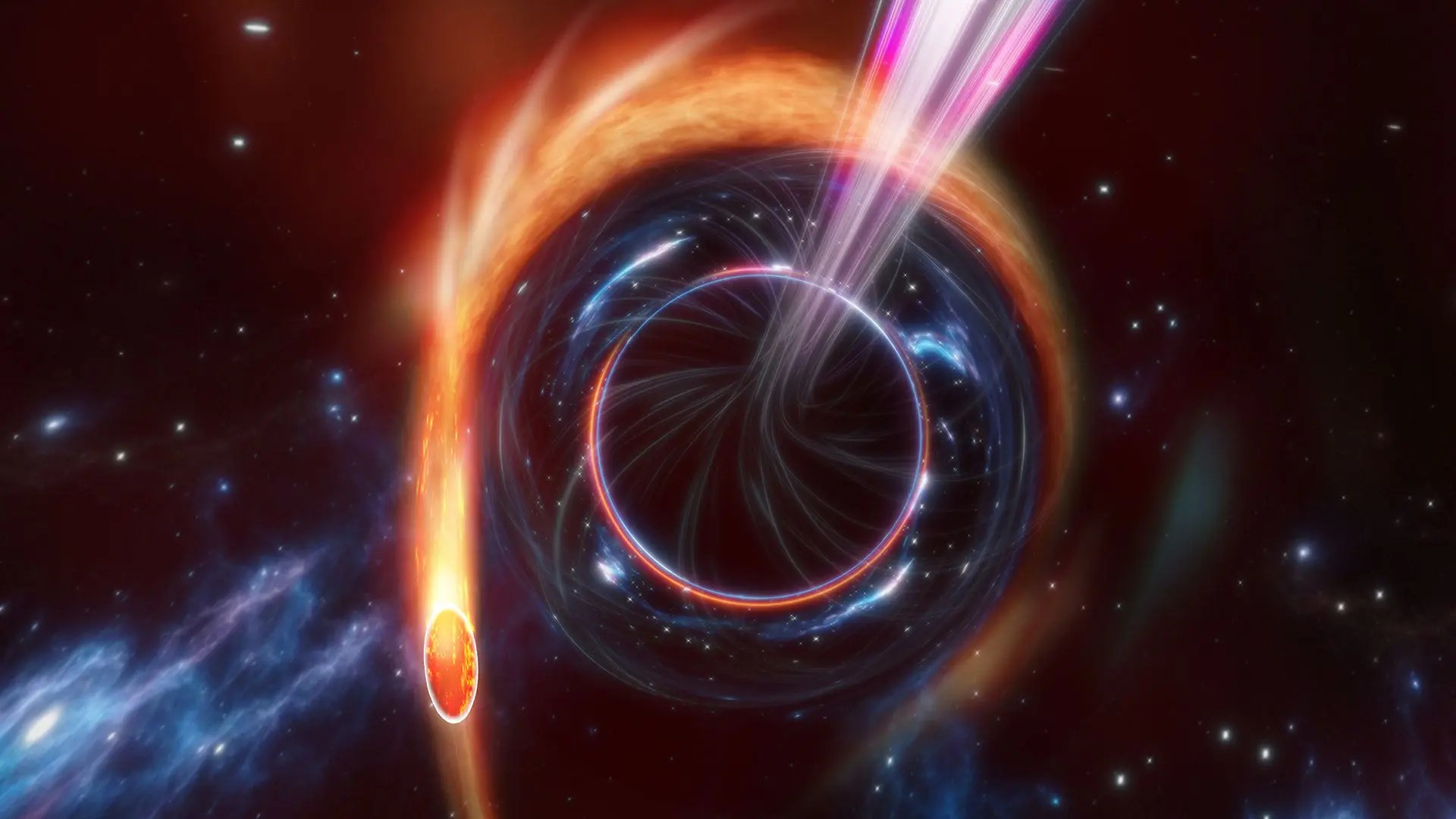

The concept of “the Singularity,” a moment in the not-too-distant future when we will manage to create superhuman intelligence, either in machine form or by augmenting our own brains with biotechnology, is particularly effective at inspiring this kind of technophobia or technophilic zealotry. Mathematician and science fiction author Vernor Vinge popularized the term in a 1993 essay, likening our inability to envision a post-A.I. world to modern physics’ inability to explain what happens at the center of a black hole. In the hands of futurist Ray Kurzweil and friends, the Singularity has evolved into an inspirational movement with its own institute and university, both dedicated to hastening the coming of the big event and ensuring that its outcomes are beneficial – rather than disastrous – for mankind.

The movement has its fervent detractors. Jaron Lanier, an early Internet pioneer, the author of You Are Not a Gadget, and a partner architect at Microsoft Research, calls the Singularity an “ultramodern religion . . . in which people are told to wait politely as their very souls are made obsolete.” Lanier objects to what he sees as an increasingly widespread desire to cede human responsibility to technology – to let Netflix, for example, decide what movie we should watch next, or to let expensive data-crunching systems stand in for the reality of public-school classrooms – even though machines haven’t yet achieved anything approaching our own (admittedly imperfect) sentience or complexity. He cautions against Silicon Valley hype that prematurely paints technology as redemption from human suffering.

Bioethicist Paul Root Wolpe, a recent Big Think guest, is not overly disturbed by visions of a future in which people have robotic arms and silicon brain implants. Still, he questions the basic premise of the Singularity concept, arguing that it “misunderstands the complex nature of biological and physical life.”

Every branch of science, Wolpe points out, periodically reaches major thresholds that are expected – by the media, at least – to redefine and clarify everything, forever. But for each threshold of understanding we cross, unforeseen complexities arise, and these become the frontiers for the next generation of the science. Wolpe finds the Singularity’s focus on a single, transformative event misleading and dangerously simplistic.

Paul Root Wolpe: Physics also thought it was going to find its grand unified theory a long time ago. And now we’re just beginning to discover that maybe the universe isn’t exactly organized the way we thought it was, with dark matter and string theory and all of that, which we still don’t really understand the nature of and can’t agree about.

I think what we’re going to find over time is that rather than convergence leading us to some sort of unified idea, there will constantly be this kind of complexity fallout. As we learn about things more and more deeply, we will discover that in fact, there’s all kinds of peripheral work to be done that we couldn’t have even imagined looking forward. And what that means is you’re not going to have a convergence towards a singularity, but you’re going to have a very complex set of moments where things will change in a lot of different ways.

What’s the Significance?

The danger of the Singularity concept lies in its assumption that an inevitable, singular transformation of life as we know it is fast approaching. In some, this inspires paranoid, Terminator-style visions of mankind enslaved by machines. In others, beatific fantasies of a world free of messy human imperfection. In either fiction, involuntarily or by choice, we are annihilated by our own creations. The first is a primal death-wish. The second, a religious vision of redemption. Neither approach faces squarely the real challenges and possibilities before us.

In reality, the future may be much closer in some respects to William Gibson’s cyberpunk classic Neuromancer, in which biotechnology and artificial intelligence solve some of our problems, only to introduce a myriad of new ones. What do you do, for example, when you’re surfing some infinitely distant spiral arm of the Internet via 4-D holovision and a glitch in the damn program suddenly leaves you stuck halfway through the wall of a giant, obsidian cube that represents a block of encrypted data you’re trying to access?

Close your eyes and wait for the update?

The series Re-envision is sponsored by Toyota.