DeepMind

Here’s why coding skills alone won’t save you from job automation.

Artificial intelligence is already outsmarting us at ’80s computer games, finding ways to win that the developers didn’t even know were there. Just wait until it figures out how to beat us in ways that matter.

Google’s DeepMind artificial intelligence learns what it takes to win, making human-like choices in competitive situations.

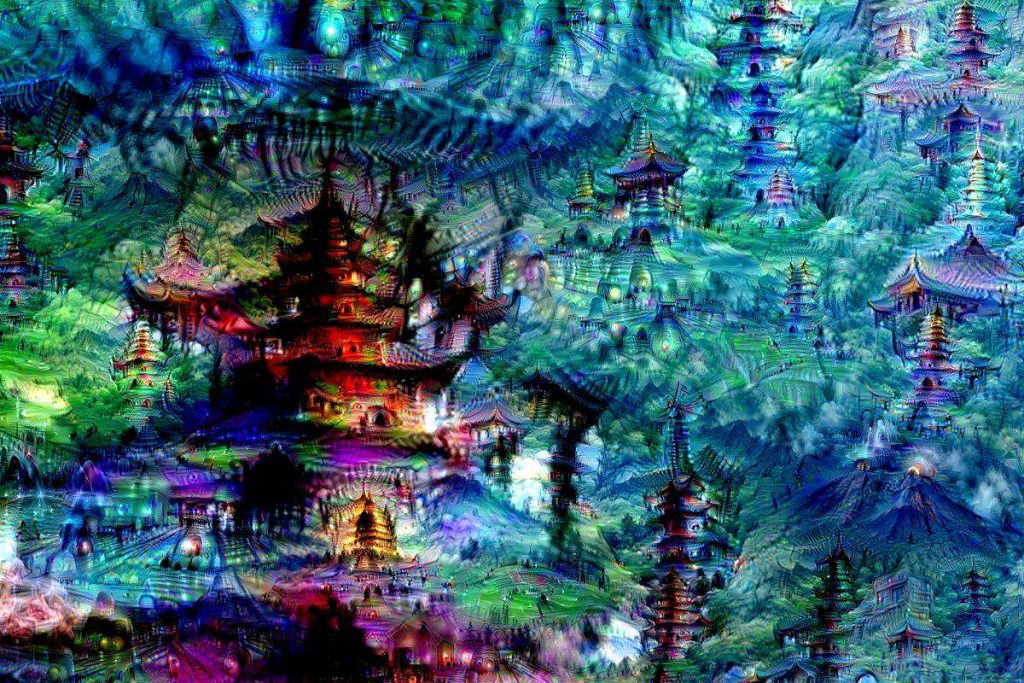

They may look odd, but it’s all part of Google’s plan to solve a huge issue in machine learning: recognizing objects in images.

Google’s DeepMind creates AI that blows away existing speech synthesizers.