Is it ethical for AI to have a human voice?

On May 8th, Google unveiled Duplex, a new AI technology that can hold human-sounding conversations. The demonstration astonished much of the internet: the AI interacted with an unsuspecting human while making an appointment for a haircut. Duplex adjusted to the information it was given and even inserted fillers like “uh” and “hmm” into its speech, coming across as quite realistic. Better still, the AI accomplished its task and booked the appointment.

While undeniably impressive, Google’s demonstration also raised a host of concerns about what it means for an AI to sound like a human. Is it ethical for an AI to have a human voice and impersonate a human? Or should humans always have a way to know they are conversing with an AI?

Duplex is built so that talking to the AI sounds like talking to a regular person. It is trained to do well in narrow domains and is directed towards specific tasks, such as scheduling appointments. That is to say, Duplex can’t talk to you about random things just yet, but as part of the Google Assistant, it could be quite useful.

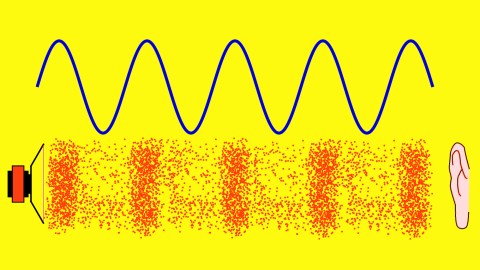

It achieves this naturalness by synthesizing speech modeled after the imperfect speech of humans, full of corrections, omissions, or undue verbosity. As Google explains on its blog, Duplex is at its core a recurrent neural network (RNN) built using the TensorFlow Extended (TFX) machine learning platform.

The network was trained on anonymous phone conversation data. It can look at such factors as features from the audio, the goal, and the history of the conversation. The speech it generates can control intonation depending on the circumstance, as described on Google’s blog.

The AI also varies the speed of its response to the other speaker based on the words used and the overall situation. Most of the tasks are completed fully autonomously, without any human interaction.

In the demonstration, Google CEO Sundar Pichai presented the AI’s call to the hair salon. Google also showed Duplex calling a restaurant.

Such technological advances in creating fake humans understandably provoked a number of concerns among observers.

Tech reporter Bridget Carey called out the tech for its ability to deceive humans, especially in an age known for “fake news”:

“I am genuinely bothered and disturbed at how morally wrong it is for the Google Assistant voice to act like a human and deceive other humans on the other line of a phone call, using upspeak and other quirks of language,” wrote Carey.

She also pointed out that we need to look at when “it’s right for a human to know when they are speaking to a robot.” Indeed, wouldn’t you want to know whether the voice on the other end is a human being? Or does that not matter?

Data science research analyst Travis Korte suggested that the key may be finding a way to make sure machines sound different from us:

“We should make AI sound different from humans for the same reason we put a smelly additive in normally odorless natural gas,” wrote Korte.

Comments on his thread suggested that machines have synthesized voices and as such should sound “synthetic”.

Oren Etzioni and Carissa Schoenick, experts from the Allen Institute for AI, wrote a think piece in which they were unequivocal about how creepy and ethically dangerous machines that sound like humans are:

“From a technology perspective this is impressive, but from an ethical and regulatory perspective it’s more than a little concerning,” elaborated Etzioni and Schoenick. “In response, we need to insist on mandatory full disclosure. Any AI should declare itself to be so, and any AI-based content should be clearly labeled as such. We have a right to know whether we are sharing our thoughts, feelings, time, and energy with another person or with a bot.”

The experts also compared listening to the otherwise innocuous Google Duplex to “the sound of a Pandora’s box that is opening rapidly.” Let’s hope we can keep this technology contained and serving us, rather than further devaluing the veracity of the information we encounter through the media and in our daily lives.
