‘Information Theory’ Is Misinforming Us (Because The Meaning Isn’t In The Message).

“Information theory” is misnamed. And information operates differently in physics versus biology.

1. Gregory Chaitin applies “information theory” to biology by casting DNA as “a universal programming language found in every cell.”

2. But information theory is misnamed — it covers only symbolically encoded messages, not information generally. It quantifies information by explicitly ignoring meaning. It can do this because usually the meaning of a message isn’t in the message. It’s in what the message refers to (or causes).

3. To a signaling engineer, the messages “set” and “ant” have equal amounts of information, three symbols each. Yet the Oxford English Dictionary needs 60,000 words to describe the meanings of “set” versus fewer than 60 for “ant.”
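The engineer's count can be sketched in a few lines. This is a minimal illustration, assuming a 26-letter alphabet in which every letter is equally likely (a simplification the text does not state); the function name `fixed_length_bits` is hypothetical.

```python
import math

def fixed_length_bits(message: str, alphabet_size: int = 26) -> float:
    """Bits needed to encode a message if every symbol is equally likely.

    Assumption for illustration: a 26-letter alphabet, uniform probabilities.
    """
    return len(message) * math.log2(alphabet_size)

set_bits = fixed_length_bits("set")
ant_bits = fixed_length_bits("ant")

# Both messages have three symbols, so they score the same,
# regardless of how many meanings each word carries.
print(f"set: {set_bits:.1f} bits, ant: {ant_bits:.1f} bits")
```

Nothing in this calculation looks at a dictionary: the measure depends only on symbol count and alphabet size.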

4. Information theory’s engineering mindset presumes simple semantics — clean 1:1 mappings from message components to looked-up meaning — but evolved systems like language have more complex semantics — e.g., the 430 verb senses of “set.” Hence the amount of information in a message often doesn’t relate simply to its meaning (or the complexity of its effects).

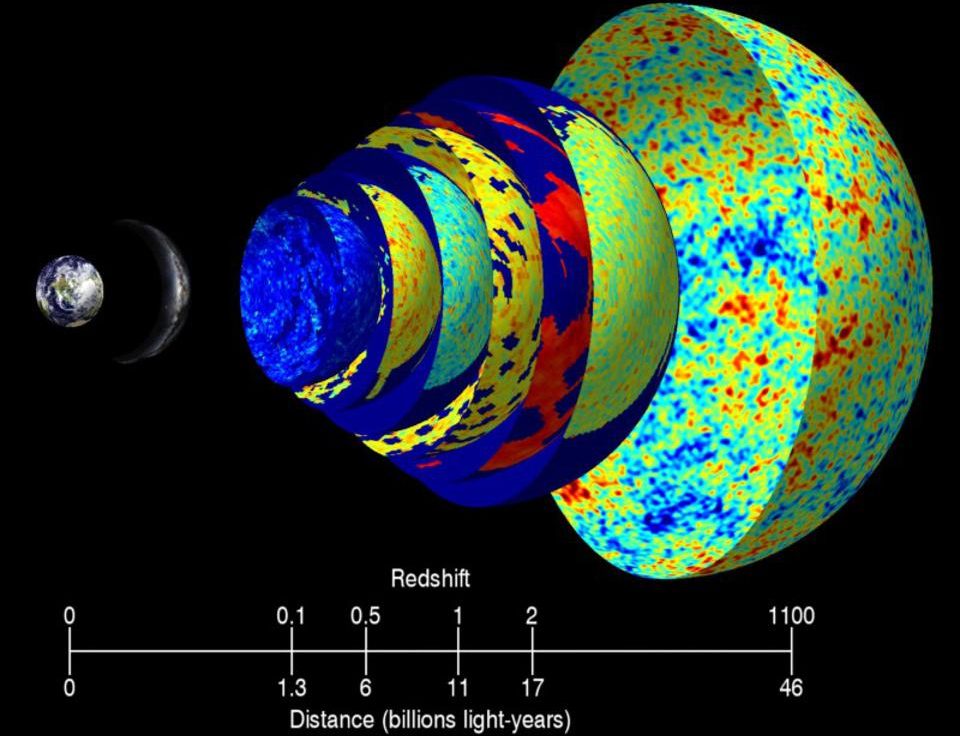

5. Saying the human genome’s 3 billion base pairs contain ~770 MB of information isn’t the whole story. Much else (including other information) is needed for DNA’s messages to be effective. As Richard Lewontin notes, DNA isn’t “self-replicating”; it needs a vast molecular machinery and a very particular environment (context) to work (express its meanings).
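The arithmetic behind a figure like this can be sketched as follows, assuming 2 bits per base (four possible bases), decimal megabytes, and no compression; these are illustrative assumptions, and `genome_megabytes` is a hypothetical helper. Under them, exactly 3 billion base pairs come to 750 MB, and a figure near 770 MB corresponds to roughly 3.1 billion base pairs.

```python
import math

# Four possible bases (A, C, G, T) -> log2(4) = 2 bits per base.
BITS_PER_BASE = math.log2(4)

def genome_megabytes(base_pairs: float) -> float:
    """Raw storage in decimal megabytes, assuming no compression."""
    return base_pairs * BITS_PER_BASE / 8 / 1e6

print(genome_megabytes(3.0e9))  # 750.0
print(genome_megabytes(3.1e9))  # 775.0
```

The number says how much storage the sequence needs, not what the sequence does once a cell reads it.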

6. Much quality is lost when loosely defined categories are quantified. Comparing genes numerically, as in “humans and chimps share ~99 percent of their DNA,” effectively ignores gene semantics. Texts with 99 percent of the same words can have very different meanings (word combinations matter more than word counts).
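A toy version of the word-count comparison, as a rough sketch: the two sentences and the helper `shared_word_fraction` are hypothetical, chosen only to show that an order-blind count can report complete overlap for texts that mean different things.

```python
from collections import Counter

def shared_word_fraction(a: str, b: str) -> float:
    """Fraction of word occurrences two texts share, ignoring word order."""
    counts_a = Counter(a.lower().split())
    counts_b = Counter(b.lower().split())
    # Counter intersection keeps the minimum count of each shared word.
    shared = sum((counts_a & counts_b).values())
    return shared / max(sum(counts_a.values()), sum(counts_b.values()))

overlap = shared_word_fraction("dog bites man", "man bites dog")
print(overlap)  # 1.0: identical word counts, opposite meanings
```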

7. DNA’s “language of life” has even higher complexity. Its meanings (its sentences) aren’t all statically predefined — they’re built dynamically. After DNA completes its parental message transmission and developmental “recipe” roles, its genes enjoy an active social life. Their modular functions (protein-making “subroutines”) are orchestrated by environmental messages.

8. Despite some laxity, statements like “Our bodies are full of software” and “All biological events are programmed” highlight how processes in biology can handle information differently from those of physics.

9. Do objects in physics process information? A thrown ball’s path is somehow computed/followed, so at least metaphorically, that’s information processing. And in that sense, information and causality are related.

10. Physics never needs what programmers call exception handling. Its patterns don’t have exceptions. There’s a 1:1 directness to physics’ cause-effect structure. Or, where a cause has many effects, they have a stable, or predictable, distribution. So probability and statistics apply.

11. Biology’s information processing is more complex (more software-like, more algorithmic). Somewhere between its low-level biochemical cause-effect interactions and life’s higher-level decision processes, different kinds of logic emerge. In some contexts, cause and effect aren’t direct; they’re mediated by semantics (see Charles Darwin’s “Hindoo,” on belief and biochemistry interacting).

Logic structures and information processing appear to vary by domain.