Dr. Michio Kaku says, “If I were to put money on the table, I would say that in the next ten years, as Moore’s Law slows down, we will tweak it.”

Michio Kaku: Years ago, we physicists predicted the end of Moore’s Law, which says that computer power doubles every 18 months. But we also, on the other hand, proposed a positive program. Perhaps molecular computers and quantum computers can take over when silicon power is exhausted. But then the question is, what’s the timeframe? What is a realistic scenario for the coming years?
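An 18-month doubling is an exponential: P(t) = P₀ · 2^(t / 1.5), with t in years. A minimal Python sketch of that arithmetic, taking the 18-month figure quoted here at face value (the doubling period is the only input, and it is an assumption carried over from the talk):

```python
# Moore's Law as quoted in the talk: computing power doubles every 18 months.
DOUBLING_PERIOD_YEARS = 1.5


def growth_factor(years: float, doubling_period: float = DOUBLING_PERIOD_YEARS) -> float:
    """Return how many times computing power multiplies after `years` of steady doubling."""
    return 2 ** (years / doubling_period)


for years in (1.5, 3, 10, 15):
    print(f"{years:>4} years -> x{growth_factor(years):,.1f}")
```

Ten years of 18-month doublings is roughly a hundredfold increase, and fifteen years is 2^10 = 1024-fold, which is why even a modest slowdown in the doubling period adds up quickly.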

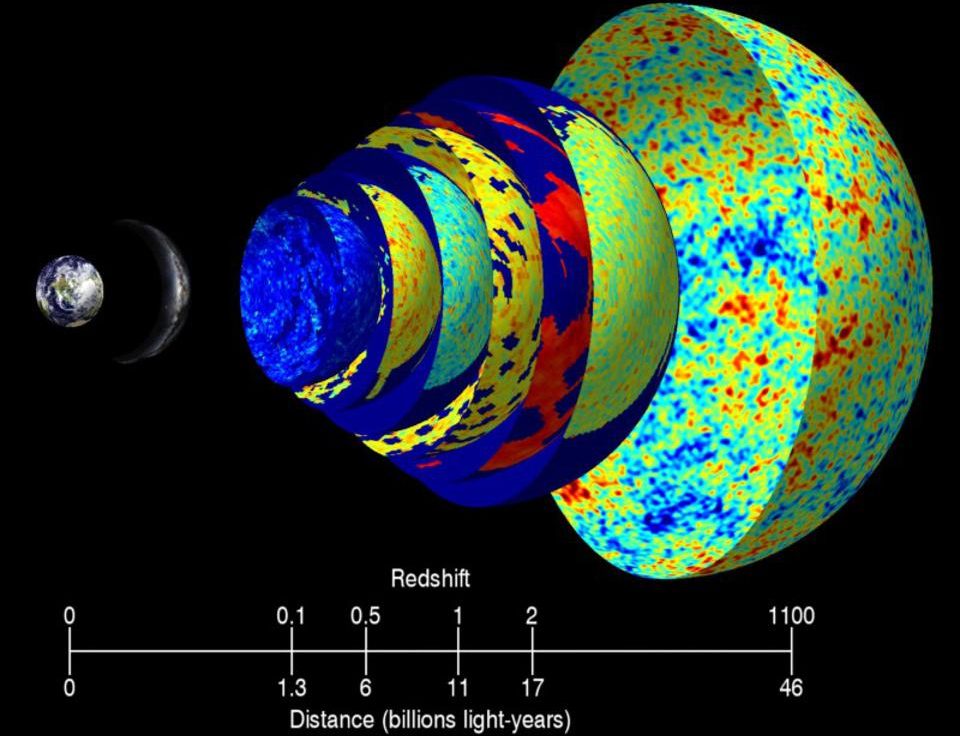

Well, first of all, in about ten years or so, we will see the collapse of Moore’s Law. In fact, we already see a slowing down of Moore’s Law. Computer power simply cannot maintain its rapid exponential rise using standard silicon technology. Intel Corporation has admitted this. In fact, Intel Corporation is now going to three-dimensional chips, chips that compute not just flatly in two dimensions but in the third dimension. But there are problems with that. The two basic problems are heat and leakage. That’s the reason why the age of silicon will eventually come to a close. No one knows when, but as I mentioned, we can already see the slowing down of Moore’s Law, and in ten years it could flatten out completely.

So what is the problem? The problem is that a Pentium chip today has a layer almost down to 20 atoms across. When that layer gets down to about 5 atoms across, it’s all over. You have two effects. First, heat: the heat generated will be so intense that the chip will melt. You can literally fry an egg on top of the chip, and the chip itself begins to disintegrate. And second, leakage: you don’t know where the electron is anymore. The quantum theory takes over. The Heisenberg Uncertainty Principle says you don’t know where that electron is anymore, meaning it could be outside the wire, outside the Pentium chip, or inside the Pentium chip. So there is an ultimate limit, set by the laws of thermodynamics and by the laws of quantum mechanics, on how much computing power you can get out of silicon.
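The “leakage” described here is electrons tunneling quantum-mechanically through a layer that would classically block them, and the tunneling probability grows exponentially as the layer thins. A rough Python sketch using the standard thick-barrier estimate T ≈ exp(−2κd), with κ = √(2m(V − E))/ħ. The 1 eV barrier height and 0.25 nm atom spacing are hypothetical round numbers chosen for illustration, not figures from the talk, and a real transistor is far more complicated than one rectangular barrier:

```python
import math

# Physical constants (SI units, CODATA 2018 values).
ELECTRON_MASS = 9.1093837015e-31  # kg
HBAR = 1.054571817e-34            # J*s
EV = 1.602176634e-19              # joules per electron-volt

# Hypothetical, illustrative parameters (assumptions, not from the talk).
BARRIER_HEIGHT_EV = 1.0  # barrier height above the electron's energy
ATOM_SPACING_NM = 0.25   # rough spacing between atoms in the layer


def tunneling_probability(layers: int,
                          barrier_ev: float = BARRIER_HEIGHT_EV,
                          spacing_nm: float = ATOM_SPACING_NM) -> float:
    """Approximate chance that an electron tunnels through a layer `layers` atoms thick.

    Uses the thick-barrier estimate T ~ exp(-2 * kappa * d) for a rectangular
    barrier, where kappa = sqrt(2 * m * (V - E)) / hbar.
    """
    kappa = math.sqrt(2 * ELECTRON_MASS * barrier_ev * EV) / HBAR  # decay rate, 1/m
    width_m = layers * spacing_nm * 1e-9                           # layer thickness, m
    return math.exp(-2 * kappa * width_m)


for layers in (20, 10, 5):
    print(f"{layers:>2} atoms thick: T ~ {tunneling_probability(layers):.1e}")
```

Under these assumed numbers, thinning the layer from 20 atoms to 5 raises the tunneling probability by more than fifteen orders of magnitude; the exact values depend entirely on the assumed barrier, but the exponential sensitivity to thickness does not.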

So, what’s beyond silicon? There have been a number of proposals: protein computers, DNA computers, optical computers, quantum computers, molecular computers. If I were to put money on the table, I would say that in the next ten years, as Moore’s Law slows down, we will tweak it. We will tweak it with three-dimensional chips, maybe optical chips; tweak it with known technology, pushing the limits, squeezing out what we can. Microsoft, for example, a software company, wants to go to parallel processing. Instead of having one chip here, spread them out horizontally. Take a problem, cut it into many pieces, and have it run simultaneously on many standard chips. That’s another possible solution. But hey, Moore’s Law is exponential. Sooner or later even three-dimensional chips, even parallel processing, will be exhausted, and we’ll have to go to the post-silicon era.
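“Cut it into many pieces and have it run simultaneously” is ordinary data parallelism. A minimal Python sketch, assuming a problem that splits cleanly into independent chunks (here a hypothetical sum of squares); the standard library’s `ProcessPoolExecutor` stands in for “many standard chips” by running each chunk in its own process, ideally on a separate core:

```python
from concurrent.futures import ProcessPoolExecutor


def sum_of_squares(chunk: range) -> int:
    """Work done independently on one piece of the problem."""
    return sum(n * n for n in chunk)


def split(n: int, pieces: int) -> list[range]:
    """Cut 0..n-1 into at most `pieces` contiguous, nearly equal chunks."""
    step = max(1, -(-n // pieces))  # ceiling division, never zero
    return [range(start, min(start + step, n)) for start in range(0, n, step)]


def parallel_sum_of_squares(n: int, workers: int = 4) -> int:
    """Run each chunk in a separate process, then combine the partial results."""
    chunks = split(n, workers)
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(sum_of_squares, chunks))


if __name__ == "__main__":
    n = 1_000_000
    # The parallel answer must match the single-chip answer.
    assert parallel_sum_of_squares(n) == sum_of_squares(range(n))
    print(parallel_sum_of_squares(n))
```

The speedup is limited by how much of a problem can actually be split this way and by the cost of combining the pieces, which is one reason parallelism tweaks the trend rather than escaping it.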

So, what are the candidates? The first candidate is molecular computers; that is, molecular transistors. They already exist. We already have molecules that are in the shape of a valve. You turn the valve one way and the electricity through that molecule stops. You turn it the other way and electricity flows through that molecule, just like a pipe and a valve, because that’s what a transistor is: a switch, except this switch is molecular rather than made out of piping. The problem is mass production and wiring them up. Molecules are very small; we forget that. How do you wire up tiny little molecules and create fantastic circuits with them? With silicon transistors, we do it with photolithography, just like you make a t-shirt. How do you make a t-shirt? You get a stencil, shine light through the stencil, and it leaves an image on the t-shirt where you can spray chemicals. We do the same thing, layer upon layer, to build up the circuitry on a silicon chip.
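Whatever the switch is made of, a valve, silicon, or a single molecule, the logic is the same: an on/off element controlled by an input. A minimal Python sketch of that abstraction, assuming idealized switches (no leakage, no delay) wired into a NAND gate, from which the other gates and a one-bit adder follow. It says nothing about how molecular switches would actually be wired together, which is the hard part the talk points to:

```python
def conducts(gate: bool) -> bool:
    """Idealized switch (silicon or molecular): closed whenever its control input is on."""
    return gate


def nand(a: bool, b: bool) -> bool:
    # Two switches in series connect the output to ground only when both are closed;
    # otherwise the output stays pulled high.
    path_to_ground = conducts(a) and conducts(b)
    return not path_to_ground


def not_(a: bool) -> bool:
    return nand(a, a)


def and_(a: bool, b: bool) -> bool:
    return not_(nand(a, b))


def or_(a: bool, b: bool) -> bool:
    return nand(not_(a), not_(b))


def xor(a: bool, b: bool) -> bool:
    n = nand(a, b)
    return nand(nand(a, n), nand(b, n))


def half_adder(a: bool, b: bool) -> tuple[bool, bool]:
    """Add two one-bit numbers built purely from switches: returns (sum, carry)."""
    return xor(a, b), and_(a, b)


for a in (False, True):
    for b in (False, True):
        print(int(a), "+", int(b), "->", [int(bit) for bit in half_adder(a, b)])
```

Because every gate here reduces to `nand`, swapping silicon switches for molecular ones would change only `conducts`, not the circuit logic built on top of it.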

Now, the quantum computer is, in some sense, the ultimate computer, but there are enormous problems with quantum computing. The main problem is decoherence. Let’s say I have two atoms and they vibrate in unison. If they vibrate in unison, I can shine a light wave, flip one over, and do a calculation, but they first have to start out vibrating in unison. Eventually an airplane goes over. Eventually a child walks in front of your apparatus. Eventually somebody coughs, and then all of a sudden they’re no longer in synchronization. The system gets contaminated by disturbances from the outside world. Once you lose the coherence, the computer is useless. So what is the world’s record for a quantum computing calculation? Ta-da: three times five is fifteen. That is the world’s record for a quantum computing calculation. That doesn’t sound like much until you realize that it was done on five atoms. So here is a homework problem for you: take five atoms and prove that three times five is fifteen, and then you realize, oh my God, that was a nontrivial feat that IBM pulled off.
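Multiplying three by five is trivial; the hard direction is factoring, splitting 15 into 3 × 5. Assuming the demonstration referred to here implemented Shor’s factoring algorithm (the talk does not name the method), the quantum hardware handles only one step, finding the period r of aˣ mod N, and the rest is classical number theory. A minimal Python sketch with that period-finding step done by brute force, which is fine for N = 15 and hopeless for large N:

```python
from math import gcd
from typing import Optional


def order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1 (requires gcd(a, n) == 1).

    This period-finding step is the part a quantum computer speeds up;
    here it is done by brute force, which only works for tiny n.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def shor_classical(n: int, a: int) -> Optional[tuple[int, int]]:
    """Try to split n using base a, following the classical steps of Shor's algorithm."""
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared  # lucky guess: a already shares a factor with n
    r = order(a, n)
    if r % 2 == 1:
        return None  # odd period: try a different a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1: try a different a
    p = gcd(half - 1, n)
    return p, n // p


print(shor_classical(15, 7))
```

For a = 7, the powers of 7 mod 15 cycle as 7, 4, 13, 1, so r = 4, 7² mod 15 = 4, and gcd(4 − 1, 15) = 3 gives the factors 3 and 5.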

So, in conclusion, it is awfully hard to compute on quantum computers. The basic architecture, the basic apparatus, has still not gelled. If I were to put money on the table, I would say that in the next ten years we’ll simply tweak Moore’s Law a bit with chip-like computers in three dimensions, but beyond that we may have to go to molecular computers and perhaps, late in the 21st century, quantum computers.

Directed / Produced by

Jonathan Fowler & Elizabeth Rodd