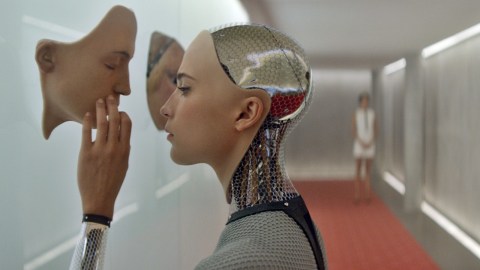

Could We Ever Train A.I. To Become Master Storytellers?

Supercomputers might be able to beat humans at games like chess or Go, but they’ve shown little aptitude for one of mankind’s distinguishing abilities: storytelling.

Mark Riedl, an associate professor at the Georgia Tech School of Interactive Computing and director of the Entertainment Intelligence Lab, has spent years researching this fundamental difference between A.I. and humans, and how it might be possible to train neural networks to write good stories.

I spoke with Riedl about his research on neural networks and how automated story generation might be used in the future.

Could you give a bit of background about your research in automated story generation?

In the last few years, we’ve looked at the question of how can [neural networks] tell stories about the real world? So, [how do we get] a computer to tell a story about something that could plausibly happen in the real world, which is a very complicated world. To do that, we turn to machine learning, neural networks – things where we might just be able to feed in lots of stories and try to get the neural network to learn the model for us.

The big vision is I would like to be able to feed a large number of stories into a computer program, have it learn how the world works – or, at least, how story worlds work – and then be able to produce new stories that are distinct from the stories it’s read that are plausible.

What’s the process a neural network undergoes to learn about story worlds?

I would say that any sort of machine learning – and neural networks are just one flavor of that – is all about pattern finding. So when you see two things happen again and again and again, you start to hypothesize that a pattern is happening.

So what a neural network is doing is going over the stories over and over and over again, looking to say, “Well, do certain sentences follow other sentences more often than not?” Or certain words, letters, or characters – or whatever your level of abstraction is that you want to start with.

Then the patterns become more and more sophisticated. So [the network] will notice that this pattern is followed by that pattern, and then you can think of it as a hierarchical growth of that pattern.

So a neural network is basically learning these statistical patterns, and by the time it’s all done we have this thing called a model.

And then, if the model is any good, I should be able to reverse the process, and if I ask for something, the model should be able to tell me what comes next, and after that, and after that. So it starts to lay down the patterns it likes the best, and if it’s text, we can call that a story.
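As a rough illustration of the "learn which thing follows which, then reverse the process" idea described above, here is a minimal sketch using word-pair (bigram) counts. This is a deliberately simple stand-in, not a neural network and not Riedl's method; the function names, the three example sentences, and the fixed random seed are all assumptions made for the example.

```python
import random
from collections import Counter, defaultdict


def train_bigram_model(stories):
    """Count how often each word is followed by each other word."""
    model = defaultdict(Counter)
    for story in stories:
        words = story.split()
        for current, following in zip(words, words[1:]):
            model[current][following] += 1
    return model


def generate(model, start, max_words=10, seed=0):
    """Reverse the process: repeatedly ask the model what comes next."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    words = [start]
    for _ in range(max_words - 1):
        followers = model.get(words[-1])
        if not followers:
            break  # the model never saw anything follow this word
        choices, weights = zip(*followers.items())
        # Words that followed more often in training are more likely to be picked.
        words.append(rng.choices(choices, weights=weights, k=1)[0])
    return " ".join(words)


# Hypothetical training "stories", invented for this sketch.
stories = [
    "the knight rode to the castle",
    "the wolf ran to the forest",
    "the knight fought the wolf",
]
model = train_bigram_model(stories)
story = generate(model, "the")
print(story)
```

A model this small only captures which word tends to follow which; the hierarchical patterns Riedl describes would need far more data and a far richer model.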

On your Twitter, you mentioned that you want to train neural networks to learn about story generation, and eventually about sociocultural conventions and morals. Can you elaborate on that?

These are actually two aspects of the same thing, because to learn how to tell stories is to learn, basically, about the patterns of life. So think of a simple situation, like going to a restaurant.

Restaurants happen in stories a lot. They also happen in the real world a lot. So, when we talk about learning how to tell stories, in some sense what we’re doing is trying to learn how the world works. What we’re also learning is social conventions.

A question like “What should I do when I enter a restaurant?” would be the sort of question a neural network should be able to answer.

Apply that to robotics. So we want robots to be able to do things humans can also do. We’re asking the question whether robots can learn to behave like humans in social settings.

In that sense, I think of it as learning the rules of society. And I think this is important for multiple reasons. One is: societies have different sets of rules. So think about going to a restaurant in Europe versus America. The protocol is a little bit different. But also, if you think about why these social protocols exist, they often exist to prevent human to human conflict.

Another example is: Why do we stand in line at the post office? Because someone will get upset if we don’t, and that could lead to a fight. So we just kind of automatically follow the convention without really thinking about why we do it. So we’re also, in that sense, potentially teaching robots and A.I. systems how to avoid conflict.

What about story components like theme, motif and symbolism – would stories generated by A.I. include these nuanced ideas?

That’s definitely further down the road, in that they are higher-level patterns. And while, in theory, [neural networks] should be able to learn patterns of higher and higher complexities, we’re obviously starting at the most basic level.

But, to the larger point, I do think that these patterns of storytelling are emergent from the lower level cultural conventions. So we have ideas like “good defeats evil” because of [mankind’s] underlying desire to exemplify good values and bad values, which stems from daily life.

I think of it as kind of life climbing a ladder of greater and greater sophistication. These patterns were not created from a top-down sort of way, but emergent from a bottom-up sort of way. So we need to also learn them from a bottom-up way.

Where can we expect to see the next major innovation in automated story generation, on the consumer side?

The computer game industry has not been terribly fast to adopt artificial intelligence techniques that can work at the story level. So they’re not particularly interested in A.I.-developed plot lines. And there’s lots of reasons for that. One is that it does kind of present a certain level of risk. We really don’t know how to generate really good stories yet with computers.

Do you mean risk as in the story might turn out to be garbage?

Yeah, exactly. Or it will screw something up and then people will hate the game. Or there will be just sort of an unreliable experience coming out of the game.

There are certain applications on the horizon for which story generation is more amenable. I think more open-ended virtual realities, where there’s not necessarily a strong game element, but more of being in a world populated by lots of other individuals, some of which are humans and some of which are A.I. systems. So think about populating very, very large worlds with synthetic characters who have a little bit of backstory so they’re not just cardboard cookie-cutter sorts of things that you currently see in computer games.

One of the areas I think is most promising is improvisational storytelling. In the same sense that we have theatrical improvisation, a human and a computer would be able to just sit down and start bullshitting and making up stories.

What would that look like?

Do you know the show Whose Line Is It Anyway?

Yeah

Sometimes those games, right, that are kind of storytelling games, like “save the cat from the tree,” sound very simple until one of the participants says “Oh no, the ladder is broken!” And then someone else says “I’ll get a catapult!” So, you’re constantly trying to make the story more and more absurd.

Now, imagine doing that not just between two humans, but with a computer that tries to throw interesting wrinkles into the story and can also respond to your interesting twists.

That kind of hits on something about the comedy behind the products of neural networks. Are you familiar with Janelle Shane’s blog?

Yeah, I know her well – on Twitter.

She’s had some pretty hilarious results from training neural networks to do things like invent cookbook recipes. Do you find those funny, and if so, why?

For me, it’s both funny and a little bit sad. It’s an unintentional type of humor. It’s literally because there’s error in the model that the neural network learns. Right, it has choices – “I put A, and I can follow an A with an N, or a C, or an L, or a Q” – right? And then it kind of just doesn’t know what the difference is, so it picks one randomly.

So when it’s putting these things together and it comes out quite hilarious, it’s because it’s literally just confused. But it hits upon something that we recognize.

As in, the title kind of makes sense?

Right. It makes sense to us because we have all this semantic understanding and we understand these two words, and we try to fill in the blanks as to why those two things would go together. The neural network’s not thinking about that. It’s just thinking, “Statistically, I could put this word after that word. It’s just as good as any other guess.” So it doesn’t realize it’s being hilarious.

Speaking about the differences between artificial neural networks and the human brain, does studying neural networks shed light on the human brain or human life in general, or are they apples and oranges?

Neural networks are inspired by the human brain, but the similarities end pretty quickly. So I don’t think there’s necessarily a lot that we’ve learned from neural networks about how the human mind works.

What I do think is kind of interesting is really more about the data. The data is really an abstraction of things that happen in the real world. So when any sort of machine learning system learns a pattern, you can kind of reflect back and say, “Well, is that true of human behavior?” Because otherwise that pattern would not have existed. So, it does illuminate some things, and you can actually learn some things about human behavior by running the data through pattern finders and looking for statistical correlations.

Do you think we’ll ever see a video game in which every player experiences a unique, automatically generated story that’s narratively satisfying?

That’s something to aspire to. I think it depends on the complexity of the story. We have techniques that can tell pretty decent stories, at least from a computer game perspective – they don’t have really complicated stories here. And we can tell lots of variations on those stories.

So, I think in the near future it’s not implausible that we can have fully generated computer game experiences that are reasonably good and unique, but with a limited cast of characters and a limited-size world.

It’s kind of like everyone can have their own little version of Little Red Riding Hood. You kind of know what to expect, but things will be different every time.

Right.

The challenge I’m tackling now with WikiPlots and these other things is I want to build models of all possible stories that can happen in the world, or a very large number of stories. That’d be akin to saying, “Today, I want to be a knight. And tomorrow, I want to be a WWII soldier. And the day after that, I want to be in my own sitcom.” And that is a much, much, much exponentially harder problem to solve, to the point where I don’t know if we’d see that in the next 5 to 10 years.

Can you elaborate on that idea of model complexity?

So the way I think of it is “how complicated is the fictional world?” In these very simple worlds, sometimes called microworlds or toy worlds, there’s only so many characters, those characters can do so many things, they can go so many places. So you can kind of enumerate all the different things that can happen. You can basically write down that model by hand.

Because the parameters are so constrained?

Right. So, I know in Little Red Riding Hood, I know there’s four characters, and those characters can go places, they can carry baskets, they can talk to each other, they can eat each other, they can hit each other with knives.

You can realistically write down the “rules” of that story world. And then the algorithm that makes new stories is just the plausible combination of all those things I just wrote down.
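To make the "write down the rules, then combine them" idea concrete, here is a minimal sketch of a hand-written toy world. It is only an illustration under stated assumptions: the interview says there are four characters but names only Little Red Riding Hood and Granny, so the other two names, the two places, the two action types, and the "no event twice in a row" plausibility rule are all invented for the example.

```python
from itertools import permutations, product

# Hypothetical toy world: these names and places are illustrative assumptions.
CHARACTERS = ["Red", "Wolf", "Granny", "Woodcutter"]
PLACES = ["forest", "cottage"]


def possible_events():
    """Enumerate every single event the hand-written rules allow."""
    events = [
        f"{who} goes to the {where}"
        for who, where in product(CHARACTERS, PLACES)
    ]
    events += [
        f"{speaker} talks to {listener}"
        for speaker, listener in permutations(CHARACTERS, 2)
    ]
    return events


def is_plausible(story):
    """Toy plausibility rule (an assumption): no event happens twice in a row."""
    return all(first != second for first, second in zip(story, story[1:]))


def plausible_stories(length):
    """Generate every plausible sequence of events of the given length."""
    return [
        story
        for story in product(possible_events(), repeat=length)
        if is_plausible(story)
    ]


two_event_stories = plausible_stories(2)
print(len(possible_events()), len(two_event_stories))
print(". Then ".join(two_event_stories[0]) + ".")
```

Even this tiny world has 20 possible events and 380 two-event stories under the toy rule, and the count grows quickly with story length, which hints at why hand-written models stop scaling once the world gets richer.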

But in the real world there’s an infinite number of possibilities. Would it be the same in this fictional world?

It can technically be infinite, but infinite in uninteresting ways. So the story in which Little Red Riding Hood goes to Granny’s house, then back to her own house, then back to Granny’s house – a story in which they go back and forth three times.

There’s really more of a finite set of what we’d consider truly different outcomes.

In what applications should we expect to see this type of technology first?

I think you might start to see it in VR, in the sense of these character backstories we’re talking about. I think that’s a relatively safe application, in the sense that if you screw it up it’s not so bad.

I think the most compelling application cases involve education and training. Imagine interactive word puzzles, or word math problems, where the story keeps changing or continues to build on itself. There’s a way of using the story to engage people in the exercises.

Or military training scenarios. If the military training scenarios are constantly being updated, then you’ll have an infinite number of training scenarios you could do before you deploy.

[Continued conversation…]

My research is looking more at that big horizon of “be able to tell me a story about any topic.” So it’s kind of like “Tell me a sitcom, tell me a fairy tale.”

I want [the neural network] to be able to learn as much as it can about all aspects of the real world in one single model, all at once, so it has maximum flexibility when it’s asked to tell a story.

And this feeds into this notion of improv I was talking about, because what happens in improv is “Tell me a story, but change this, or do this. Or, what if the knight was really a lumberjack?” So [the A.I.] has got to understand what it means for a lumberjack to be in that type of situation.

And this is all super aspirational. I’ll probably be working on this for another 5 to 10 years.

Is 5 to 10 years when consumers might expect to have access to these types of applications, or at least a rudimentary version of them?

Yeah, in the sense that we might actually feel comfortable taking this out of a research lab and putting it into an application.