James Gleick ponders the paradox in information theory that since information is based on surprise, it is also chaotic and in many cases devoid of meaning.

James Gleick: I actually first started thinking about information as a possible glimmer of an idea for a subject for a book when I was working on Chaos. That's when I first heard about it. And it was strange. It was strange to hear about information theory as a scientific subject of study from a bunch of physicists who were working on chaos theory. I mean, they were analyzing a physical system. In fact, it was chaos in water dripping from a tap, the chaos of a dripping faucet. And they were analyzing it in terms of this thing called information theory, as invented by Claude Shannon at Bell Labs in 1948. And I remember thinking there's something magical about that, but also something kind of weird about it. Information is so abstract. We know that there is such a thing as information devoid of meaning, information as an abstract concept of great use, more than great use, of fantastic power for engineers and scientists. For Claude Shannon to create his theory of information, he very explicitly had to announce that he was thinking of information not in the everyday way we use the word, not as news or gossip or anything that was particularly useful, but as something abstract.

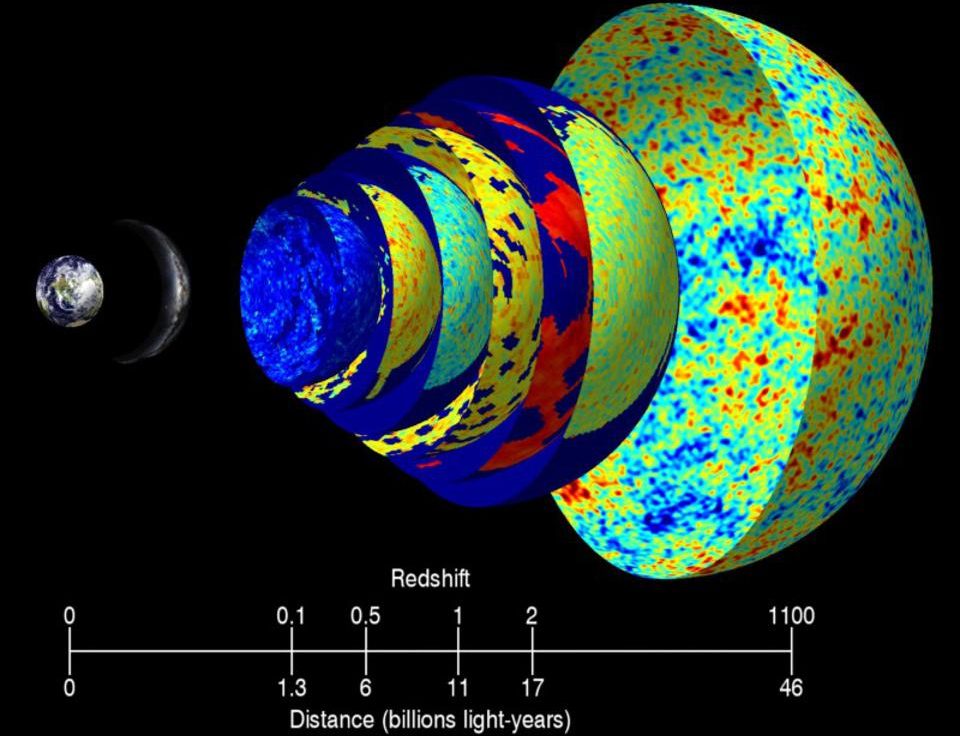

A string of bits is information, and it doesn't matter whether that string of bits represents something true or false, and for that matter it doesn't matter whether the string of bits represents something meaningful or meaningless. In fact, from the point of view of an electrical engineer who believes in information theory, a string of random bits carries more information than an orderly string of bits, because the orderly string of bits, let's say alternating ones and zeroes, or let's say not quite as orderly, a string of English text, has organization in it and the organization allows you to predict what the next bit in a given message is going to be. And because you can predict it, it's not as surprising. And because it has less surprise, it carries less information. That's one of the sort of paradoxes of information theory, that because information is surprise it's associated with disorder and randomness.

All of this is very abstract. It's useful to scientists. It lies in the foundations of the information-based technological world that we all enjoy. But it's also not satisfactory. I think we as humans tend to feel that this view of information as something devoid of meaning is unfriendly. It's hellish. It's scary. It's connected with our sense that we're deluged by a flood of meaningless tweets and blogs and the hall of mirrors sensation of impostors, the false and true intermingling. We may feel that that's what we get when we start to treat information as something that is not necessarily meaningful because as humans what we care about is the meaning.
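
Editor's sketch: the claim that an orderly string of bits carries less information than a random one can be illustrated numerically. The Python below is a minimal sketch and not something from the talk. It assumes one particular way of measuring "surprise", namely the empirical conditional entropy of each bit given the bit before it. The function name `conditional_entropy` and the parameter `context_len` are hypothetical, chosen only for this illustration.

```python
import math
import random
from collections import Counter


def conditional_entropy(symbols: str, context_len: int = 1) -> float:
    """Estimate the average surprise, in bits per symbol, of each symbol
    given the `context_len` symbols that precede it.

    This is an empirical estimate from counts in one string, used here
    only as an illustration of "information as surprise".
    """
    context_counts = Counter()  # how often each context appears
    pair_counts = Counter()     # how often each (context, next symbol) appears
    for i in range(context_len, len(symbols)):
        context = symbols[i - context_len:i]
        context_counts[context] += 1
        pair_counts[(context, symbols[i])] += 1

    total = sum(context_counts.values())
    entropy = 0.0
    for (context, _next_symbol), count in pair_counts.items():
        p_pair = count / total
        p_next_given_context = count / context_counts[context]
        entropy -= p_pair * math.log2(p_next_given_context)
    return entropy


# An orderly string: alternating ones and zeroes.
orderly = "01" * 5000

# A pseudo-random string of the same length (fixed seed for repeatability).
rng = random.Random(0)
random_bits = "".join(rng.choice("01") for _ in range(10000))

# The alternating string is fully predictable, so this prints 0.0.
print(conditional_entropy(orderly))

# The pseudo-random string is close to 1 bit of surprise per bit.
print(conditional_entropy(random_bits))
```

In this sketch the alternating string scores zero because the previous bit always determines the next one, while the pseudo-random string scores close to one bit per bit. Neither number says anything about whether the string means something, which is the gap the speaker is pointing at.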