Hasty generalization: how to escape your biases and be more rational

- "Hasty generalization" is a common fallacy people make, which is born of our natural tendency to make rules of thumb.

- We can only be sure of our own thoughts, emotions, and consciousness, so how can we be truly rational about anything else in life with such a limited sample size?

- The solution is to reach beyond ourselves to find more sources in the hope of diluting or countering our own biases.

It’s Michael’s first time in Borneo. As he gets off the plane, he looks around to see it is pouring with rain. He says, “Oh, it always rains here.”

John picks up a friend’s newborn baby, who is smiling and giggly. “She’s such a happy baby!” he says.

Olivia has never been to the zoo before and so is excited to see a giraffe for the first time. “Giraffes always have such long necks!” she says.

We can all probably picture ourselves in these situations. But all three people are guilty of the same informal fallacy. It’s called hasty generalization, or sometimes secundum quid in Latin. It goes back as far as Aristotle and is explored in a recent Big Think video.

There may be many shortcomings to human rationality, but there are also clear, practical ways to avoid falling into error.

Sui generis

We all make generalizations. In fact, generalizing is one of the most common and useful heuristics we employ to make the mind’s job a bit easier. For instance, when we talk to people, we generally assume they are telling the truth. When we stop at a red light, we assume it will turn green again soon. If we see a dog, we make the generalization that it will be able to bark. It would be impossible to navigate life without certain assumed, or generalized, rules.

But the strength or weakness of these generalizations depends upon the sample size, as well as how representative that sample is. For instance, if we have only ever met two French people in our lives, any general rule we drew about the French would rest on a bad argument, because the sample is far too small. And if we met those two French people in an English-speaking country, the statement, “French people speak really good English,” would also be based upon an unrepresentative sample.
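To put rough numbers on both problems, here is a minimal Python sketch. It assumes the textbook standard-error formula for a sample proportion, and every figure in it (the 50% rate, the 95/5 population split, the 30% and 90% English-proficiency rates) is invented for illustration rather than taken from any survey. The function names are hypothetical too.

```python
import math


def standard_error(p: float, n: int) -> float:
    """Standard error of a proportion p estimated from n independent observations."""
    return math.sqrt(p * (1 - p) / n)


def population_rate(strata):
    """Overall rate across groups given as (share_of_population, rate) pairs."""
    return sum(share * rate for share, rate in strata)


# Problem 1: sample size. ASSUMED_RATE is a made-up value, not real data.
ASSUMED_RATE = 0.5
for n in (2, 20, 200, 2000):
    print(f"n={n:>4}: standard error ~ {standard_error(ASSUMED_RATE, n):.3f}")

# Problem 2: representativeness. Both groups below are invented:
# (share of all French people, share of that group who speak good English).
at_home = (0.95, 0.30)
abroad = (0.05, 0.90)

true_rate = population_rate([at_home, abroad])
biased_estimate = abroad[1]  # what we would conclude from expatriates alone

print(f"whole population: {true_rate:.2f}")
print(f"expatriates only: {biased_estimate:.2f}")
```

With only two observations the standard error is about 0.35, so the estimate could land almost anywhere. And in this made-up population, judging by the expatriates alone would suggest 90% when the overall figure is 33%.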

One of the big problems in philosophy is that there are so many things that are sui generis, or “one of a kind”, which lend themselves to the fallacy of hasty generalization. For instance, in the philosophy of religion, if God is entirely unique, what can we say of Him/Her/It that isn’t anthropomorphic? In aesthetics, I know and can say what beauty means for me, but how can I come to a general working definition for everyone? In moral theory, if I want to suggest “moral facts” exist, how do they relate to or overlap with how we understand other kinds of facts?

The biggest issue we have in this kind of sui generis reasoning applies to our own minds. In the philosophy of mind, we only know our own consciousness, so how can we meaningfully talk about that of anyone or anything else? It is an issue that underpins the “problem of other minds,” as well as all sorts of cognitive biases we employ. We each project our own understanding and experiences onto the world. These are, as Daniel Dennett mentions in our video, the “foibles and blind spots in our thinking.” Knowing this, though, gives us an advantage, and as he goes on to say, “a weakness identified is something that can be avoided, to some degree.”

Less hasty generalization, more rationality

If we know that we have a natural propensity to generalize our own condition as the rule of the universe, we are better placed to avoid it. We can even take steps to overcome it.

One tip, offered by Dan Ariely in the video, is to consult those whom we consider to be competent judges or third-party experts. Ariely gives the example of when you are falling in love with someone. He says, “Good advice is to go to your mother and say ‘Mother, what do you think about the long term compatibility of that person?’” When we are in the first throes of a new relationship, we are so weighed down and blinkered by our own infatuation that everything we see passes through the lens of this love. Ariely’s point is that we should seek out others as a trusted and objective vantage point to counteract our own rationality’s day off.

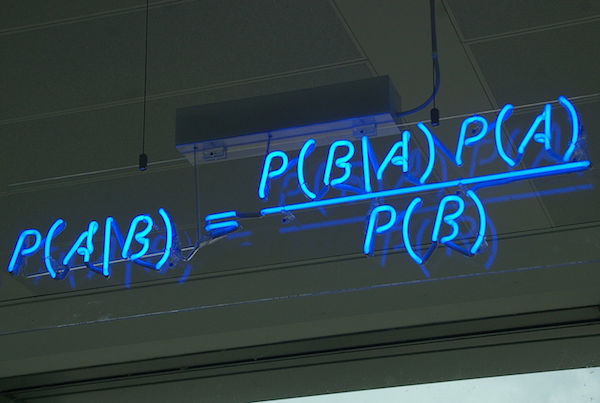

Another suggestion, offered by Julia Galef, is to apply “Bayes’ Rule.” Strictly speaking, Bayes’ Rule is a theorem of probability that tells us how much to revise a belief in light of new evidence; used as a habit of mind, it has much in common with the philosophical school known as coherentism. It asks us to consider what we do when confronted with a major and momentous new piece of information. We really have two options. Either we slot the new datum into our existing frame of how we understand the world, or we have to ask, “Would it be explained better with another theory?” Seeing our belief network in this way can help us avoid the hasty generalizations or emotional responses that are, according to David Ropeik, our default approach to any new information.
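As a rough sketch of the arithmetic behind Bayes’ Rule, the Python below revisits Michael in Borneo. It is an illustration under stated assumptions, not Galef’s own method: the starting belief of 20% that “it rains almost every day here,” and the chances of a rainy day under each theory (90% if that is true, 30% if it is not), are invented numbers, and `bayes_update` is a hypothetical helper name.

```python
def bayes_update(prior: float, p_evidence_if_true: float, p_evidence_if_false: float) -> float:
    """Return P(hypothesis | evidence) using Bayes' Rule."""
    numerator = p_evidence_if_true * prior
    evidence = numerator + p_evidence_if_false * (1 - prior)
    return numerator / evidence


# All three numbers are made up for illustration.
PRIOR = 0.2             # initial belief that it rains almost every day
P_RAIN_IF_TRUE = 0.9    # chance of a rainy day if that theory is right
P_RAIN_IF_FALSE = 0.3   # chance of a rainy day under the rival theory

belief = PRIOR
history = []
for day in range(1, 6):
    # Each rainy day is one more piece of evidence; yesterday's
    # posterior becomes today's prior.
    belief = bayes_update(belief, P_RAIN_IF_TRUE, P_RAIN_IF_FALSE)
    history.append(belief)
    print(f"after {day} rainy day(s): belief = {belief:.2f}")
```

On these numbers, one rainy day lifts the belief from 0.20 to about 0.43, which is nowhere near “always.” It takes five rainy days in a row to reach about 0.98. A single observation should nudge a belief, not settle it.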

Take more time and get more information

Ultimately, the fallacy of hasty generalization points out how narrow our viewpoint actually is. I am one person, in one time, on one planet — yet all of us tend to think that we are the creators or discoverers of universal rules. We want to be as rational as can be, but we are fundamentally limited in that we constitute a sample size of one and see everything through our own lenses.

As Dennett says, acknowledging this can help us overcome it, and as Ropeik concludes, a lot of the problems can be overcome by taking more time to expand our knowledge base. Yes, each of us is only one person, but we have communication and intellect. We can reach beyond ourselves to find more sources in the hope of diluting or countering our own biases.

Jonny Thomson teaches philosophy in Oxford. He runs a popular Instagram account called Mini Philosophy (@philosophyminis). His first book is Mini Philosophy: A Small Book of Big Ideas.