A Computer Walked into a Bar: Take the Turing Test 2.0

Cryptography has long been a powerful security tool. It has been used by nations at war to send messages that might be intercepted but that only the intended recipient can understand. That is why wars have been won, or at least shortened, by codebreakers.

That was the case in the Second World War. The German Enigma machine was broken by the Polish Cipher Bureau prior to the war. This enabled British codebreakers at Bletchley Park, such as Alan Turing, to decipher a vast amount of high-level military intelligence. Winston Churchill and Dwight Eisenhower both said after the war that Allied cryptanalysis, codenamed “Ultra,” was key to victory.

Today cryptography is a tool that is commonly used in cybersecurity. Since the Internet is a public medium, the data you send has to pass through a lot of pipes. And at every twist and turn there are bad guys waiting to steal your data, whether it is credit card information or trade secrets.

You have probably encountered a captcha before, something with text that looks like this:

Instead of being asked to prove you are not a Nazi spy, a captcha asks you to prove you are not a data-snatching robot. Captcha is an acronym for “Completely Automated Public Turing test to tell Computers and Humans Apart.” Of course, since this test is administered by a computer to a human, it is sometimes referred to as a “reverse Turing Test.”

Captchas display distorted versions of the font that most browsers use to display text. Robots are not supposed to be able to identify this distorted pattern of letters. And yet, the Turing Test, which is based on language processing, is seen by many as imperfect.

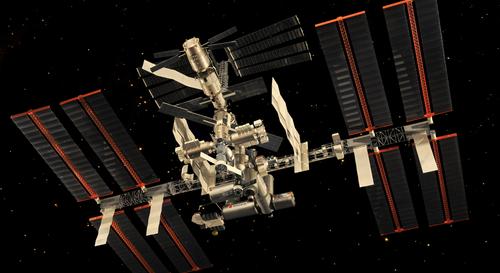

That is why a group of scientists at the University of Exeter in England have proposed a “Turing Test 2.0.” This test is designed as a visual description task that asks machines to mimic certain human visual abilities. One thing that humans are good at is describing where one object is in relation to another. Humans are especially good at judging which objects are relevant when that judgment is subjective. Robots, not so much.

Consider the scenario below. Your response will determine if you are a human or a robot who just walked into this bar.

University of Exeter colleagues Michael Barclay and Antony Galton created 15 other working scenarios, some of which form the basis of a Turing Test 2.0, available at New Scientist.