Collective intelligence out-diagnoses even professionals

- The Human Diagnosis Project can develop medical diagnoses with startling accuracy.

- The platform combines the knowledge of medical professionals and artificial intelligence.

- The goal of the project is to provide open, readily available high-level guidance and training to health care professionals across the globe.

The world-class Mayo Clinic is often the place patients go for a second opinion on a medical diagnosis. It’s a good thing they do. According to a report issued by the clinic in 2017, 88 percent of them return home with either a completely different diagnosis or a significantly altered one. Only 12 percent receive confirmation of their doctors’ original conclusions.

It’s hard to overstate the life-and-death importance of medical misdiagnoses, and with all the artificial intelligence and data collection tools out there, you’d think there might be a way to improve on these statistics. That is the goal of the Human Diagnosis Project, or “Human Dx” (a triple pun, as its site explains): to create the world’s open medical intelligence system, a “collective intelligence” that can produce vastly improved diagnostic accuracy.

In early March, JAMA published the results of an experiment conducted by Human Dx in cooperation with Harvard, and they were impressive. Whereas 54 individual medical specialists correctly diagnosed 156 test cases 66.3 percent of the time, the collective intelligence achieved an 85.5 percent accuracy rate. Nine medical professionals contributed to the collective intelligence conclusions.

Human Dx founder Jayanth Komarneni tells Big Think, “We can get numbers in the 97th, 98th [percentile], and even — if we have sufficiently large numbers of participants — we can get to super intelligent results. That means that it outperforms 100 percent of individual participants.”

About Human Dx

The Human Dx project is a partnership between the social, public, and private sectors; in the U.S., it’s a 501(c)(3) not-for-profit/public-benefit corporation. According to Komarneni, Human Dx’s business model aims to keep the service as free of cost to users as possible while still generating enough income to be self-sustaining. Nearly 20,000 medical professionals in almost 80 countries now contribute. According to the company, its partners include the American Medical Association, the Association of American Medical Colleges, the American Board of Medical Specialties, and the American Board of Internal Medicine. Human Dx is also working in collaboration with researchers at Harvard, Johns Hopkins, the University of California, San Francisco, Berkeley, and MIT.

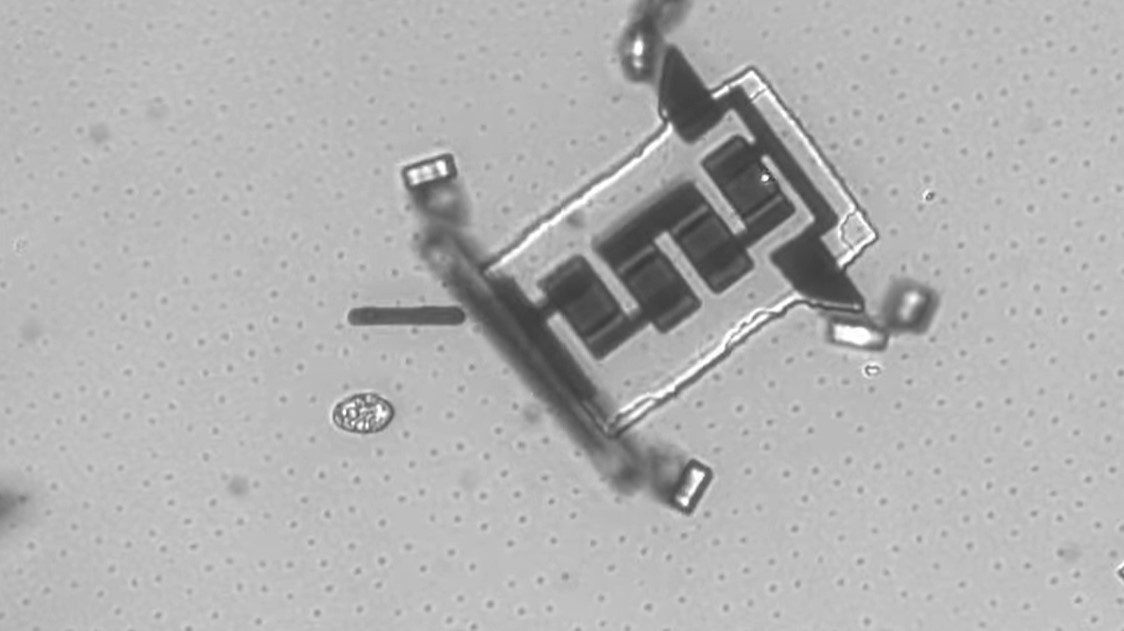

Image source: koya979/Denis Komolov / Shutterstock / Big Think

Open Intelligence

While diagnoses produced by Human Dx do bring together the opinions of multiple medical professionals, it’s far from a simple voting system. It incorporates its own massive data set, machine learning, and artificial intelligence in addition to the input from medical professionals to develop its diagnoses. In designing their collective intelligence, says Komarneni, Human Dx had to first re-think the idea of open intelligence itself.

“We believe that open intelligence is the third form of open knowledge,” he explains. The first was open-source protocols, such as those on which the internet is based, as well as operating systems such as Linux. These protocols enabled the second form, open content: Wikipedia, data libraries, and so on. Open intelligence combines the first two: “And when you think about A.I. in the context of software,” says Komarneni, “it really is code which is smartly delivering content to you based on what you put into the system.”

Open intelligence matters because, unless it is available free or at low cost, A.I. will be so expensive that it’ll “exacerbate, as opposed to close, income, health, and other disparities in society,” warns Komarneni. Nowhere will the ramifications be more serious than in health care, since “there is nothing we care more about than the well-being of the people we love and ourselves.”

How Human Dx collective intelligence works

Collective intelligence in the Human Dx project works much like a panel of participants, which are referred to as “agents.” Some of these are medical professionals, but agents may also include the outputs of other systems. For example, Komarneni mentions that it’s entirely possible IBM’s Watson could be one of these agents, or even a data set from the National Institutes of Health.

Lingua franca

Of course, individual agents, even the human participants, express themselves in their own ways (is a lump “blue” or “blueberry-colored,” for example?), and contributions from some agents, such as A.I. systems or datasets, may take the form of raw data. Before any meaningful synthesis of all these opinions can be performed, they must first be converted into a common language of some sort. As the process’s first step, Human Dx’s A.I. uses natural language processing, text prediction, and medical ontologies to perform this translation.
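
As a rough illustration of this translation step, the Python sketch below maps different wordings onto one shared label. It is a minimal sketch that assumes a tiny hand-written synonym table (`SYNONYMS` and `normalize_term` are hypothetical names) in place of the natural language processing, text prediction, and medical ontologies the article describes; it is not Human Dx’s code.

```python
import re

# Hypothetical synonym table standing in for a real medical ontology.
# Keys are cleaned-up free-text phrases; values are shared canonical labels.
SYNONYMS = {
    "blue": "bluish discoloration",
    "blueberry-colored": "bluish discoloration",
    "bluish": "bluish discoloration",
    "heart attack": "myocardial infarction",
    "mi": "myocardial infarction",
}


def normalize_term(raw: str) -> str:
    """Translate one agent's wording into the shared vocabulary."""
    # Lowercase and collapse runs of whitespace so formatting quirks don't matter.
    key = re.sub(r"\s+", " ", raw.strip().lower())
    # Unknown terms pass through in cleaned form rather than being dropped.
    return SYNONYMS.get(key, key)


if __name__ == "__main__":
    for phrase in ["Blue", "blueberry-colored", "  Heart   Attack ", "Pneumonia"]:
        print(f"{phrase!r} -> {normalize_term(phrase)!r}")
```

A real system would need fuzzy matching and a curated ontology rather than an exact-match dictionary, but the principle is the same: agents’ contributions can only be compared once they share a vocabulary.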

Ranking opinions

Human Dx establishes the capability, or CQ (“clinical quotient”), of each agent. To do this, they rank agents’ skills using test cases with known diagnoses, including “some of the most wickedly complex cases,” says Komarneni. This allows Human Dx to determine how accurate an agent’s diagnoses can be expected to be, and how heavily they should be weighted against other participants’ contributions in solving the current case.
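
The article does not say how the CQ is computed, so the following is only a hypothetical sketch: it scores an agent by the share of known-answer test cases it diagnosed correctly, smoothed with a small prior so that agents with only a handful of graded cases are not rated at 0 or 100 percent. `GradedCase`, `clinical_quotient`, and the prior values are illustrative assumptions, and exact string matching stands in for whatever grading rules a real platform would use.

```python
from dataclasses import dataclass


@dataclass
class GradedCase:
    """A test case with a known answer, plus the diagnosis an agent submitted."""
    known_diagnosis: str
    agent_diagnosis: str


def clinical_quotient(history: list[GradedCase],
                      prior_correct: float = 1.0,
                      prior_total: float = 2.0) -> float:
    """Hypothetical CQ: a smoothed share of known-answer cases the agent got right.

    The prior (by default, 1 correct out of 2) pulls agents with few graded
    cases toward 0.5 so that a single lucky or unlucky case can't give them
    an extreme score.
    """
    correct = sum(1 for c in history if c.agent_diagnosis == c.known_diagnosis)
    return (correct + prior_correct) / (len(history) + prior_total)


if __name__ == "__main__":
    # Eight of ten cases right -> (8 + 1) / (10 + 2) = 0.75
    veteran = [GradedCase("sepsis", "sepsis")] * 8 + [GradedCase("sepsis", "influenza")] * 2
    newcomer = [GradedCase("gout", "gout")]  # one case right -> (1 + 1) / (1 + 2)
    print(clinical_quotient(veteran), clinical_quotient(newcomer))
```

A score like this could then serve as the agent’s weight when opinions are combined, which is the role the article assigns to the CQ.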

A.I. joins the panel

At this point, the agents’ inputs are synthesized to derive the most likely diagnosis. This synthesis is then combined in an A.I. model with all of the aggregated case data that Human Dx has ever captured (interactions in the “tens of millions”), including how “lots of other participants over many other cases have solved these cases.” This A.I. model then joins the panel in arriving at the final diagnosis.
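
One simple way to picture this synthesis is a weighted vote over each agent’s ranked list of possible diagnoses, with the A.I. model treated as just another agent. The sketch below is a hypothetical illustration, not Human Dx’s method: it assumes each agent supplies a ranked differential and a weight (for instance, a CQ-style score), and it awards each diagnosis the agent’s weight divided by its rank, a reciprocal-rank scheme chosen here for simplicity. The agent names and weights are made up.

```python
from collections import defaultdict


def synthesize(submissions: dict[str, list[str]],
               weights: dict[str, float]) -> list[tuple[str, float]]:
    """Combine agents' ranked differential diagnoses into one ranked list.

    Each agent's i-th ranked diagnosis (i starting at 1) earns weight / i
    points, so higher-weighted agents and higher-ranked diagnoses count more.
    """
    scores: dict[str, float] = defaultdict(float)
    for agent, ranked in submissions.items():
        w = weights.get(agent, 0.0)  # agents with no known weight contribute nothing
        for rank, diagnosis in enumerate(ranked, start=1):
            scores[diagnosis] += w / rank
    # Highest combined score first; ties broken alphabetically for determinism.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    submissions = {
        "dr_a": ["pulmonary embolism", "pneumonia"],
        "dr_b": ["pneumonia", "pulmonary embolism"],
        "ai_model": ["pulmonary embolism", "myocardial infarction"],  # the A.I. "agent"
    }
    weights = {"dr_a": 0.8, "dr_b": 0.6, "ai_model": 0.7}
    for diagnosis, score in synthesize(submissions, weights):
        print(f"{diagnosis}: {score:.2f}")
```

Here pulmonary embolism scores 0.8 + 0.3 + 0.7 = 1.8, ahead of pneumonia at 1.0, even though one physician ranked pneumonia first: agreement among well-weighted agents outweighs any single opinion.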

“And those [agents] combined,” says Komarneni, “are how we can get to results that outperform the vast majority of individual participants.”

The Harvard and Johns Hopkins studies

The Harvard study published in JAMA is the first public demonstration of the Human Dx system as a diagnostic tool. It drew on an international cohort of medical students and professionals, and the results were unquestionably impressive. A total of 2,069 users worked 1,572 cases from the Human Dx data set (again, these were cases with known correct answers). About 60 percent of the participants were residents or fellows, 20 percent were attending physicians, and another 20 percent were medical students. As more medical professionals were added to the collective intelligence “panel,” up to nine individuals, its accuracy consistently rose. Physicians who weren’t specialists in their test-case areas achieved an accuracy of just 62.5 percent.
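
Human Dx’s synthesis is more elaborate than a vote, and a diagnosis is rarely a yes-or-no choice, but a classic idealized model helps explain why accuracy rose as the panel grew. The sketch below assumes the setting of Condorcet’s jury theorem: a binary decision, and agents who are each right with the same probability, fully independently of one another. It borrows the 62.5 percent individual figure purely as an input; its outputs are illustrative and are not meant to reproduce the JAMA results.

```python
from math import comb


def majority_accuracy(p: float, n: int) -> float:
    """Probability that a strict majority of n independent voters is correct.

    Idealized binary-choice model (Condorcet's jury theorem): each voter is
    right with probability p, independently of the others. n must be odd so
    that ties cannot occur.
    """
    if n % 2 == 0:
        raise ValueError("use an odd panel size to avoid ties")
    need = n // 2 + 1  # smallest strict majority
    # Sum the binomial probabilities of every outcome with a correct majority.
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


if __name__ == "__main__":
    for n in (1, 3, 5, 7, 9):
        print(n, round(majority_accuracy(0.625, n), 3))
```

In this model, majority accuracy climbs with each added pair of judges as long as individuals beat a coin flip; correlated errors, which real clinicians can share, would shrink the gain.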

An earlier study, published in JAMA in January and conducted in cooperation with Johns Hopkins, looked at Human Dx as an automated platform for assessing the diagnostic abilities of health care professionals and students. Participants’ scores across 11,023 case simulations were consistent with their training level, which shows, in Komarneni’s words, “that we provided a valid, quantitative, scalable measure of medical reasoning.” He admits this doesn’t sound like a big deal, but it is: it offers a far more accurate and scalable alternative to current multiple-choice assessments, which have been shown to correspond poorly to real-world diagnostic skills.

The future of health care and Human Dx

Komarneni says there are basically only two ways to provide universal health care globally, a pressing need because, as he puts it, “Almost half the world has no access to essential health services.” One way, he says, would be to create a God-like A.I. system to provide health care to everyone, but, “We know that’s not going to happen.” God-like A.I. is just too hard: it could require knowing everything about a patient, from the tiniest details (say, the quantum behavior of electrons in mitochondria) to the largest, such as the environment a patient lived in as a child.

In addition, Komarneni says, “In a world where data is locked up in many disparate silos, there isn’t going to be a single collective agent. There’s going to be a collective of many intelligent agents, both human and machine. The key is how do you integrate intelligence into larger buckets of intelligence that can solve the world’s hardest problems.”

This is where the second approach, the Human Dx project, comes in. It has two components:

- The first is improving existing medical professionals’ diagnostic accuracy by giving them access to the Human Dx platform and its collective intelligence as a diagnostic tool.

- The second is helping to train new professionals, and Human Dx Training is already offering this on the Human Dx site.

For those concerned about privacy in a system such as Human Dx, Komarneni says it will be a non-issue, and he explains with an analogy. When two people converse, “We don’t have access to the underlying data of each other’s minds. We’re agents that are interacting with each other to gain relevant and useful information from each other.” Similarly, Human Dx’s system of interacting agents doesn’t require exposing patients’ personal data. What’s shared with Human Dx are the conclusions agents draw from that data, not the data itself. When a dataset operates as an agent, the data would be anonymized.

Human Dx’s aim in all this is to develop a platform that it hopes others will find uses for. “We believe we’re just building the enabling technology that many other stakeholders could use.” As examples, Komarneni imagines, “The VA could implement their own version of this. Kaiser Permanente could implement their own version. Employers could contract with us or with their own insurers. You could even also have individual and group practices use Human Dx software to serve patients directly.”

Human Dx is currently looking at ways to open as much of the project as possible to non-professionals, and it has already made a start: its home page features a diagnosis cloud. Mouse over the various blue bubbles to see different conditions, then click for further details. Just beneath the cloud is a search field for looking up diseases and symptoms.