Researchers at MIT create “psychopathic” AI

You know the saying “let’s not and say we did”? Artificial intelligence researchers at MIT decided to follow through on a particularly bad idea by creating an AI that is purposefully psychopathic. The AI is named Norman, after Norman Bates from Alfred Hitchcock’s Psycho.

They did it to show that AI itself isn't inherently bad or evil; rather, AI can turn bad when it is fed bad and evil data. So they went to "the darkest corners of Reddit" (their words!), in particular a long thread dedicated to gruesome deaths, and trained Norman on data from there.

“Data matters more than the algorithm,” says Professor Iyad Rahwan of MIT’s Media Lab. “It highlights the idea that the data we use to train AI is reflected in the way the AI perceives the world and how it behaves.”

This largely speaks to a very common principle called GIGO, or 'Garbage In, Garbage Out,' which is as true in AI as it is for the human diet. Just as eating nothing but junk food and candy will make you fat, feeding an AI disturbing data will make it produce disturbing output. Nevertheless, the idea of an AI that was born psychopathic is obviously quite juicy. So long as the code never makes it out of whatever box MIT keeps it in (presuming it's kept in a box), we should all be OK.

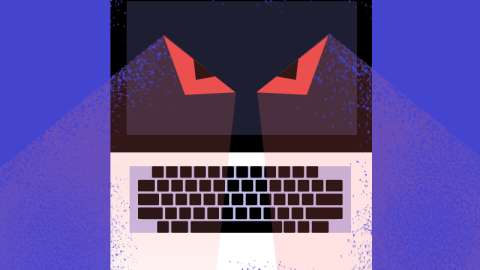

Fortunately for us, the AI is only designed to caption Rorsach tests. Here’s an example:

Lovely! I’m sure MIT will be putting these up on the refrigerator.