The Literary Darwinists: The Evolutionary Origins of Storytelling

The summer of 1975 was a rough time for beachgoers at Amity Island. Residents of the Long Island getaway were terrorized when a shark devoured a young woman and two young boys in the span of a few days. Luckily, three brave men – Brody, Quint and Hooper – traveled out to sea in a small boat, killed the sea monster, returned safely and restored peace to the town.

Fortunately, the only real harm Spielberg’s Jaws inflicted was on future American moviegoers. Jaws is the prototypical thriller that opened the floodgates for big-budget summer blockbusters such as Star Wars and Indiana Jones. It is also credited with inspiring Ridley Scott’s Alien, as well as a wave of man-eating-animal thrillers. But once Hollywood realized the draw of über-stimulating summertime films, it was only a matter of time before Deep Impact and The Expendables 2 hit the big screen.

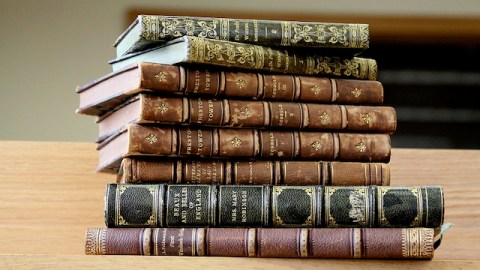

Jaws was a groundbreaking film in some respects, but the plot was nothing new. About 1,200 years earlier, Saxons told an eerily similar story. Beowulf is set in a peaceful village that’s suddenly terrorized by the monster Grendel, who resides in a nearby lake. Luckily, the hero of the story – Beowulf – slays the monster (along with the monster’s evil mother) and restores peace to the village.

The British journalist Christopher Booker parallels these two tales in a recent book to argue that all stories are built on seven basic plot templates. For Booker, Jaws and Beowulf fall into the “Overcoming the Monster” category along with Little Red Riding Hood and Dumas’ The Three Musketeers. From Homer’s Odyssey to the James Bond series, Booker’s wide-ranging analysis reminds us that literature is a human universal; where there are people, there are stories.

Given the ubiquity and pleasure of stories, as well as the important role they play in human societies, it’s not surprising that literary scholars and evolutionary psychologists are beginning to examine literature critically through a Darwinian lens. In question is nothing less than the nature of literature from an evolutionary perspective.

What’s odd about literature is that a mind immersed in fantasy seems like something natural selection should have weeded out, since fantasy can lead to erroneous beliefs and distracts from reality. Wouldn’t a daydreaming hunter-gatherer be easy prey for a predator on the African savannah? It turns out that our ability to invent and tell stories might be a key adaptation that significantly distinguished our species. There are at least three reasons for this.

First, stories are time-savers. For instance, we don’t have to jump off a cliff to know what will happen. We can just simulate it in our minds and imagine the deadly result. Steven Pinker puts it this way:

Intelligent systems often best reason by experiment, real or simulated: they set up a situation whose outcome they cannot predict beforehand, let it unfold according to fixed causal laws, observe the results, and file away a generalization about how what becomes of such entities in such situations. Fiction, then, would be a kind of thought experiment, in which agents are allowed to play out plausible interactions in a more-or-less lawful virtual world.

Second, stories make communication easier by embedding important information about the world and people into coherent narratives. We have a hunger for social and factual information; stories help us organize all of it so we can efficiently exchange information and make more informed decisions. This is why stories are often reduced to maxims such as “the early bird gets the worm” or “he who hesitates is lost.” The brain prefers a concrete scenario to a laundry list of information. Stories, then, serve as mnemonic devices for important tips on how to navigate the world.

Finally, stories help us understand and organize our lives. Instead of seeing life as a collection of random events, we look at sequences of events and weave explanations into them, an ability that serves as a key existential aid. An October 2009 StrategyOne poll found that when Americans were asked what metaphor best describes their lives, 51 percent responded with “A Journey,” 11 percent with “A Battle” and 8 percent with “A Novel.” It’s nearly impossible not to think about life as a journey in which a person is a traveler, a purpose is a destination, teachers and coaches are guides, birth is a starting point and death an ending point. In English, for instance, we describe ourselves as being “lost,” “found” or “at a crossroads”; we encounter “twists and turns” and manage to “find our way.”

Another line of reasoning argues that storytelling is simply a delightful byproduct of human evolution. This helps explain why we like Jaws; it’s a vicarious experience in which we get a taste of what it’s like to save the day. As Pinker says:

Fiction may be, at least in part, a pleasure technology, a co-opting of language and imagery as a virtual reality device which allows a reader to enjoy pleasant hallucinations like exploring interesting territories, conquering enemies, hobnobbing with powerful people, and winning attractive mates. Fiction, moreover, can tickle people’s fancies without even having to project them into a thrilling vicarious experience.

Stories might also be the byproduct of our instinctual craving for gossip. Because humans are inherently social animals, information about other humans is valuable. This is why companies do background checks and why profiles on matchmaking websites are so thorough: reputations are fairly good predictors of future behavior; information is power.

Stories might feast on our social instincts by cherry-picking dramatic and outlandish scenarios. As the behavioral scientist Daniel Nettle argues:

Conversations are only interesting to the extent that you know about the individuals involved and your social world is bound into theirs… Given that dramatic characters are mostly strangers to us, then, the conversation will have to be unusually interesting to hold our attention. That is, the drama has to be an intensified version of the concerns of ordinary conversation.

Nettle goes on to argue that we don’t watch films about people going shopping; we watch films about people going shopping who are having an affair with an ex-lover. This helps explain why gossip tabloids surround so many checkout counters and why so many people seem to prefer watching Friends to being with friends: we love drama. Likewise, few would read a book about a man who goes fishing. However, Hemingway’s The Old Man and the Sea reminds us that many would enjoy a book about a man who goes fishing, ends up enduring an epic battle with a giant marlin and returns veiled in tragedy.

Returning to the question of literature as an adaptation versus a byproduct, my hunch aligns with that of the literary scholar Jonathan Gottschall, who says in his recent book The Storytelling Animal that

… we probably gravitate to story for a number of different evolutionary reasons. There may be elements of storytelling that bear the impression of evolutionary design… there may be other elements that are evolutionary by-product… and there may be elements of story that are highly functional now but were not sculpted by nature for that purpose, such as hands moving over the keys of a piano or a computer.

The real mystery is not just the evolutionary origins of literature, but movements and attitudes such as modernism that insist on transcending the traditional plot lines that Booker diagnoses. If Booker is right and all stories fall into seven basic templates, then writers who strive for complete originality might be out of luck. The human mind, it appears, has its limits on literature. This is supported by cross-cultural studies suggesting that humans everywhere gravitate toward similar literary themes. As Hume said, “the general principles of taste are uniform in human nature… the same Homer who pleased at Athens and Rome two thousand years ago, is still admired at Paris and London.”

Of course, the fact that humans share certain literary hot buttons didn’t stop Joyce from throwing out plots altogether in Finnegans Wake. Nor did Virginia Woolf hesitate when crafting the free-flowing Mrs. Dalloway. For various reasons, writers in the 20th century were motivated to create stories that don’t appeal to the senses. Pinker explains that a “compelling story may simulate juicy gossip about desirable or powerful people, put us in an exciting time or place, tickle our language instincts with well-chosen words, and teach us something new about the entanglements of families, politics, or love.” Why, then, were so many authors in the 20th century obsessed with disjointed narration, bewildering characters and exhausting prose? And why did they (and do they) look down on the mainstream?

These examples test the limits of literary Darwinism. Science gives us some reasons, which I have tried to clarify above, for why we liked Jaws, why moviegoers paid to see Deep Impact and The Expendables 2, and why we have recycled, replayed and rehearsed the same plot lines for thousands of years. But why the metafiction of Vonnegut’s Slaughterhouse-Five and Stoppard’s The Real Thing? It’s unclear. Still, elite art is not off-limits to scientific investigation.