Extended senses & invisible fences

By Chris Arkenberg

“The intelligence of the city is on the streets.” – Manu Fernandez

Amidst the swirling maelstrom of technological progress so often heralded as the imminent salvation from all our ills, it can be necessary to remind ourselves that humanity sits at the center, not technology. And yet, we extrude these tools so effortlessly as if secreted by some glandular Technos expressed from deep within our genetic code. It’s difficult to separate us from our creations, but it’s imperative that we examine this odd relationship as we engineer more autonomy, sensitivity, and cognition into the machines we bring into this world. The social environment, typified by the contemporary urban landscape, is evolving to include non-human actors that routinely engage with us, examining our behaviors, mediating our relationships, and assigning or revoking our rights. It is this evolving human-machine socialization that I wish to consider.

The intelligence of the city is on the streets, as Manu Fernandez suggests. This is an important distinction reminding us that humanity is at the center of intelligent cities, and offering a counterpoint to the productized packages of technological solutions so popular in the techno-strain of contemporary urban development.

There are several fundamental drivers that set the frame for our relationship with machine intelligence. Computation is a deep current within human innovation & adaptation, and it’s only gained momentum on the inertia of civilization. However, nature is a deeper current engineered into our very cells. We’re spreading computation universally but this path is actually leading us to better understand and more effectively mimic biosystems. Biological patterns are at work, albeit on considerably longer timeframes, within everything we do as human animals. Like nature itself, the technosphere is expressed heterogeneously, organically and distributed across many scales. Although we seem compelled to create it, there is a tension between technology and humanity. We’re not entirely at ease with our creations, burdened by the recognition that the pace of technology is faster than our ability to understand its consequences. This is especially so as we yield more and more agency to machines & algorithms working at greater and greater scales. More often than not, regulatory structures emerge after things go wrong rather than before they have a chance to fail. The resulting dialectic is one where governance contains while innovation releases, leaving us in the middle to experience the consequences of collapsing our imagination into reality.

We know this, of course, but somewhere in the recent past we crossed a threshold from building tools dependent upon humans to those with enough autonomy and agency to make rudimentary decisions on our behalf. We create our tools and believe that we control them but the arc of our technos is yielding more and more control to the machines themselves. By making them more like us we give them more freedom, agency, and authority. This is the trade-off we find ourselves navigating here at the dawn of the 21st century.

If the frame is one of both progress and tension, what are the assumptions underlying this examination of our path forward? It is essentially a Growth model through which I consider this arc (this model being the easiest to bet on, if not the least resilient). Global GDP continues at a roughly linear pace with the bulk of growth shifting to Asia and, later, Africa, but driven and funded by the aging West. Cities continue to add population, yielding optimizations and degradations, boomtown build-outs and downturn data decay. Economic models evolve slowly without major revolutionary shifts. Capital will continue its steady re-distribution into younger markets seeking to capture the prosperity of the West. Energy constraints are managed through a combination of old & new inputs but there are many bumps along the way as the resource needs of the developing world begin to dominate the global stage. Environmentally, we’ll see more adaptation than mitigation of climate chaos, driving migrations, shifting food stocks, and impacting health and reproductive fecundity. In this scenario it will be some time before we’re able to effectively understand and manage complex natural systems. Politically, governance and sovereignty will continue to balkanize and governments will be more & more distracted contending with multinational corporations, NGOs, syndicates, super-empowered individuals, and the ongoing challenges of climate and resource instabilities. Such distraction of governors opens tremendous gaps & opportunities for innovations, both to good and bad ends. Small-town mayors and local tech collectives are as likely as gun traffickers and drug cartels to drive regional innovation.

Cities will evolve within this broader context, expressing the deeper currents organically. The living city is emergent and messy and is itself an organism composed of innumerable interstitial and ephemeral cellular structures. Driven by the will of its inhabitants, cities will continue forward as an accelerating patchwork of implementations, seeking greater efficiency & resiliency, flexing to absorb discontinuities, and continually extruding a rich skin of connected technologies and distributed services.

Given this environment and the assumptions underlying the discussion, there are six domains worth considering through which we engage the city and its inhabitants, both human and machine. Within this is a loose taxonomy of mediated interactions we have with the urban computational scaffolding.

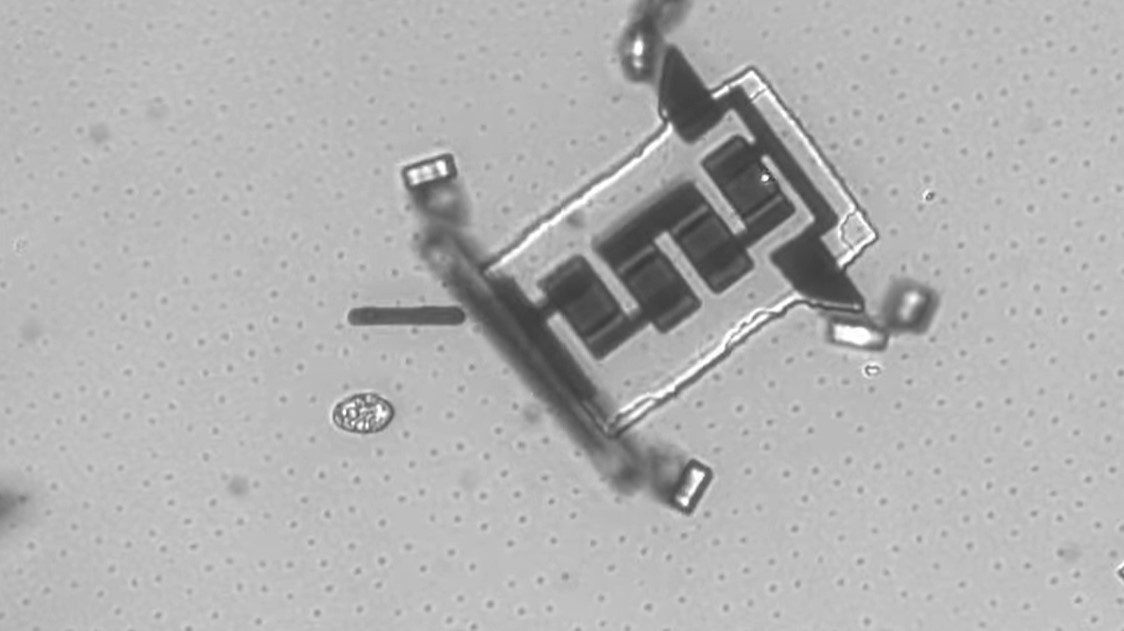

In the personal domain the individual is the reference point around which various experiences are arranged. With connected mobile devices we carry a network identifier that effectively labels every individual with an IP address. Our network ID authenticates and provisions us with access and can bar us from admittance to both virtual and physical relationships. The clothes we wear and the devices we carry begin to interact and register an online presence as part of our personal device mesh. Our identity is wrapped in and contextualized by our location, conferring spatial intelligence and invoking situated technologies around us. Through connected sensing & mediation our data profile accumulates personal information about memberships, affinity networks, paths, transactions, history, and more, pairing our network identification with our personal identity. This is the core information structure around which the urban interface assembles. In this manner, we are clothing ourselves in data-rich, sensing technologies.

Surrounding the personal domain is the local sphere. From identity & location we derive proximity & context. What are we near, can we interact with it in some way, is it interested in us, does it contain something to be discovered? Proximity reaches out to ambient information just as the local domain examines us. The pathways we traverse through the city contain information valuable to us and to others. Where have we been, what is our trajectory, where are we going, are there path optimizations available? How can we meet and assemble, disperse and evade…? The relationship between the personal and the local is the site of context. Identity and proximity enable context awareness and situated technologies around which assemble relevant services & solutions and authoritative structures adjudicating our rights. We understand physical boundaries through their viscerality but we also pass through layers of invisible edges. Across such boundary zones and perimeters an always-on network that understands identity, proximity, and context can provision or revoke services based on our location. Geofencing is a friendly welcome, a helpful agent, or an ankle bracelet under house arrest. In the local domain we have extended senses and invisible fences, anchoring the virtual in the actual and wiring the real to the transreal.
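The provisioning and revoking described above can be sketched in a few lines. This is only a minimal illustration, assuming a circular fence and the haversine great-circle distance; the `Geofence` class, the `welcome` and `restrict` policy labels, and the action names are hypothetical and not drawn from any real system.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


@dataclass
class Geofence:
    """A circular invisible fence (hypothetical structure for illustration)."""
    name: str
    lat: float
    lon: float
    radius_m: float
    policy: str  # assumed labels: "welcome" or "restrict"


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude points."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def inside(fence, lat, lon):
    """True when the point lies within the fence radius."""
    return haversine_m(fence.lat, fence.lon, lat, lon) <= fence.radius_m


def decide(fence, lat, lon):
    """Return the action an assumed service takes for a device at (lat, lon)."""
    within = inside(fence, lat, lon)
    if fence.policy == "welcome":
        # The friendly welcome: services appear when we step inside.
        return "provision" if within else "none"
    if fence.policy == "restrict":
        # The ankle bracelet: leaving the perimeter raises an alert.
        return "none" if within else "alert"
    raise ValueError(f"unknown policy: {fence.policy}")
```

The same distance test drives both outcomes. Only the policy attached to the boundary decides whether the fence greets us or confines us.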

Around the local rises the structural world. Ubicomp marches into this domain to instrument the built environment, conferring onto it sensation and communication. This vision begets the IBM brochure of the idealized Smart City alive with instrumented architecture and conversant infrastructure. Building information management systems define a broad data profile for structures. Embedded microprocessors and visualization dashboards reveal the runtime mirror of living architectural systems. The modern structure is aware of itself, its occupants, its environment, and its provisioned operators across the globe. Individual structures tie in to civic infrastructure as organs interface with the circulatory & nervous system. Energy, security, water, waste, roadways, rail, inflows, outflows, seismic, atmospheric… It’s all coming online communicating and correcting. New data instruments are necessary to comprehend the volume of flowing urban informatics as we presently find ourselves overwhelmed with voices from literally billions of microprocessors. Fundamental to these systems is communication infrastructure. Ubiquitous wireless coverage and unavoidable instrumentation express a civic nervous system wired by fiber to the urban brain. What doesn’t flow through conduit & plumbing travels through the city along transport lines. Instrumentation promises efficiencies in scheduling, way-finding, traffic management, and tracking of goods but will also create automation, remote management and control, as well as new systempunkts for disruptors to attack.

We are constructing a computational sensorium of urban informatics, waking the built environment through instrumentation and connectivity. Like a membrane around us, the physical environment expresses itself through a virtual layer of interaction. This interaction layer is implicit in all previous domains but it’s important to consider the parameters of access and interface. Visual interaction is the most common to current computational metaphors, and the most obvious to the emerging layer of augmented reality. With a computationally mediated visual interface like AR we enable a deeply personalized experience of the city and of each other. Tags and annotations, memorials and territorial markers, visible avatars and secret locations, and the challenge of occlusions and relentless bill-boarding by marketers all compete for our field of view. How will the shared construct of reality be forced to shift (or possibly fragment) when what I see is different from your annotated view of the augmented world? Add an auditory layer and the city begins to talk to us, personally, contextually, instructively, and artistically, like a poem embedded in a memorial bench spoken by an ancestor as we walk past. Touch it to feel the living city as haptics engage the tactile needs of our social species through handprint biometrics and sensing surfaces demanding our skin. How might the tactile be engaged in personal, social, and public contexts both local and remote? How might distant touch collapse the space between us? Between touch and sight, gestures employ a visible language of form and movement seen by machine eyes and relayed to networks, actuators, and servo arrays collating gait analysis and biometric profiling. Voice recognition and natural language processing deliver verbal commands to digital ears (and secrets to ambient listeners). Talk to your device, talk to the walls, speak “friend” and pass. These are some of the ways we interface with the awakening world.

Our nature is social. Relationships are interactive and transactional. We build technologies to enable new relationships, and it appears that some deep animism drives us to awaken the inanimate to engage with us more directly. Yet, in these relationships we’re not always willing participants.

At its core, cybernetics is a means to control information systems. The convergence of ubiquitous computation and network connectivity is, by design, a control system. In the living city, the regulatory domain is a good thing and a bad thing. Control is both optimization and oppression, depending on the circumstances. Connected identification, proximity and location awareness, remote access to embedded systems, and ubiquitous surveillance and sousveillance enable an array of solutions to a host of interested third parties. Malcolm McCullough suggested that “contexts remind people and their devices how to behave”, acknowledging the moral ambiguity within some conversations about ubicomp. This is an important point to consider as we bond more closely with machines and algorithms.

Cybernetic regulation begins with feedback. Implicit in feedback is knowledge of the system. Feedback is state & status. State and status become assessment and response. Examples include the auto-pilot in aircraft and the content recommendation algorithm in Facebook. One keeps us safe from change while the other guides us towards an ignorance of diversity. Guidance becomes governance of embedded systems and human behavior. Algorithmic guidance is the Prius dashboard showing you your fuel consumption. Embedded governance is a bottle of Valium that won’t open if you’re above your weekly allowance. Cybernetic control is greatly enabled by shared network computation mediating our interactions, regulating our structures, guiding our vehicles and devices, and, slowly, being invited into our bodies.
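The step from guidance to governance can be made concrete with a small sketch of the pill bottle. It assumes a rolling seven-day window and a simple count of doses; the `GovernedDispenser` class and its method names are hypothetical, invented here for illustration rather than describing any actual device.

```python
from datetime import datetime, timedelta


class GovernedDispenser:
    """A hypothetical pill bottle that tracks its own state and acts on it."""

    def __init__(self, weekly_allowance):
        self.weekly_allowance = weekly_allowance
        self.log = []  # timestamps of doses already released

    def doses_in_last_week(self, now):
        """Feedback: the state of the system over the assumed 7-day window."""
        cutoff = now - timedelta(days=7)
        return sum(1 for t in self.log if t > cutoff)

    def status(self, now):
        """Guidance: report (used, remaining) and leave the choice to the person."""
        used = self.doses_in_last_week(now)
        return used, max(self.weekly_allowance - used, 0)

    def request_dose(self, now):
        """Governance: the bottle itself refuses once the allowance is spent."""
        if self.doses_in_last_week(now) >= self.weekly_allowance:
            return False  # the cap stays locked
        self.log.append(now)
        return True
```

Here `status` plays the part of the dashboard and `request_dose` the part of the locked cap. Both read the same feedback, and the only difference is who gets to act on it.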

By developing algorithms to help us we enable them to contain us. This is a delicate path to tread, from unyielding embedded governance to the inevitable decay and obsolescence of technology, and beyond to the cognitive disruptions and psychic malaise born of intractable dependencies on virtual agents that may up and quit us or simply fall offline when we need them most. Such risks are not new for our tools but we must be wary of how tightly we choose to entangle ourselves with them as they are deputized to manage more sub-routines on our behalf.

The balance to cybernetic governance may lie in programmed serendipity, digital artistic license, or simply the freedom allowed by a sudden glitch in the algorithm. In articulating the New Aesthetic, Bruce Sterling considered the movement as arising from “an eruption of the digital into the physical”. The domain of aesthetics is the way we navigate and express our emotional engagement with this disruption. Blended realities emerge through the abundance of screens, annotations, and overlays, characterized in part by a growing inability to distinguish authentic from synthetic, or to clearly separate the self from the other. Polysocial reality, as articulated by Sally Applin and Michael Fischer, examines how society is modulated by these connective technologies and how multiplexed channels of experience reform group behaviors and their contexts. Spatial convergence is already challenging our ability to disambiguate between presence and distance. The brain evolved to handle one construct of reality yet we now overlay multiple local and remote experiences simultaneously. This is an entirely new cognitive map. The psychological exploration of this territory reveals itself, in part, through our artistic expressions. Telepresence, data compression, machine vision, reality capture, and glitch media inform a cyborg aesthetic to communicate the emotionality and fascination with this interface between humanity and technology. These become the artifacts of the New Aesthetic precipitating from the eruption of the digital into the physical, leaving the narrow domain of geekdom and painting itself across the walls of our world.

The great work of art & science is thus the communication of the centrality of humanity within these domains, and the hopeful accomplishment of more closely aligning us with each other and with the natural world in which we live. Yet, human perception, cognition, and expression are not constant but continually evolving under the modulating impact of this ingression of virtuality into our lives. The quickening emergence of ubiquitous computation, polysocial reality, and non-local cognition alters the way we experience the world around us, the way we connect with others, and the way we construct our sense of self. And while we must be very careful when we abdicate responsibility to mechanized objects and autonomous governance, the living city offers tremendous opportunities for novelty, innovation, empowerment, and a deep expression of humanity at play with the technosphere.

The French novelist, Alphonse Karr, is said to have quipped, “the more things change, the more they stay the same”. This is a necessary refrain to bear in mind. Underneath all the shiny new things we’re still playing the same game. The needs and goals of the human species have barely changed though they work through ever evolving forms. As we heave civilization forward into another churning millennium, dematerializing and virtualizing into greater sustained abstractions of information, it is still our humanity that frames the world more than our technology. How we act is still much more important than what we make.

Chris is a researcher at the Hybrid Reality Institute. He is an independent researcher, analyst, and innovation strategist in the San Francisco Bay Area. Follow him @chris23