Dinosaur Weather

I usually write optimistic posts. This is going to be a scary one. I apologize in advance.

While I was in Columbus last month, I mentioned the furnace-like heat. Well, it wasn’t just me. In what’s becoming an annual tradition, heat records are being broken all across the country. And it’s not just hot, it’s dry: more than 80% of the continental U.S. is experiencing abnormally dry or drought conditions, with no relief in sight. Corn and other crops are being decimated by the drought, the worst in over 50 years, which has led Agriculture Secretary Tom Vilsack to announce that he’s praying for rain.

Nor is it just crops that are suffering. Colorado is burning in the worst wildfire season in its history, with hot, dry weather fanning massive blazes that are incinerating rural communities and prompting mass evacuations. According to one report on the fires, experts are saying that this could be the future for Colorado: wildfires raging unchecked all summer, every summer, until they’re finally quenched by the autumn rains. And one more: It’s so hot that nuclear power plants in the Midwest are facing shutdowns because the water they usually draw from lakes and rivers to cool their cores is too warm.

The usual caution of climate scientists is that climate is a large-scale trend, whereas weather is a local phenomenon subject to all the random ups and downs of chance. One hot summer doesn’t prove the pattern, just as one cold winter doesn’t disprove it. Nevertheless, there comes a point where the anomalies begin to pile up; where the accumulating weight of evidence forces the objective observer toward a particular conclusion. The Earth is changing, it’s changing because of us, and we’re starting to feel the brunt of it.

I don’t think there’s a good term in popular use to describe what’s happening to our planet. “Climate change” is too sterile, too antiseptic. “Global warming” is misleading: it’s technically accurate but gives the impression that we can expect one uniform blanket of change, when the reality is that different parts of the world will be affected in different ways.

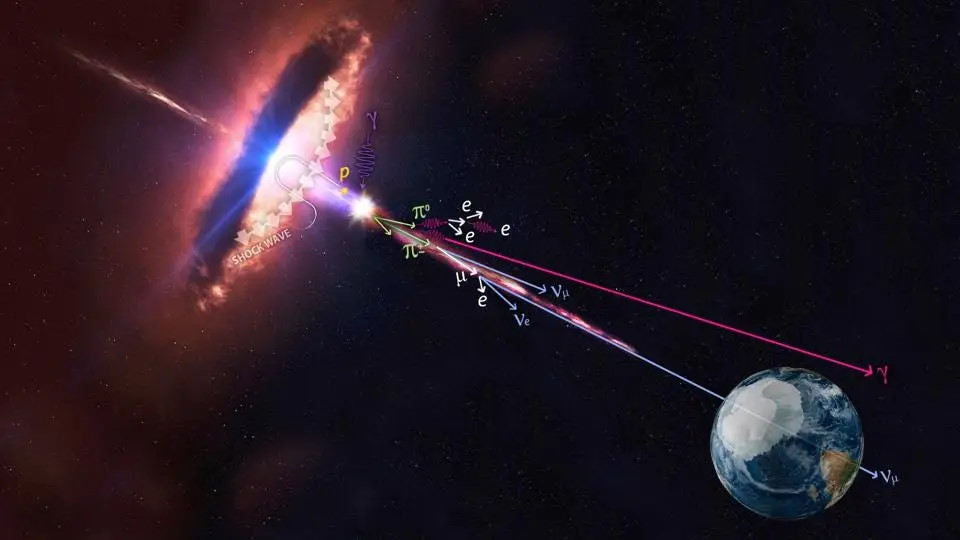

I have a suggestion. What we’re bringing back, by our heedless burning of hydrocarbons, is the kind of climate the planet last saw millions of years ago, when the dinosaurs reigned; when the carbon that’s now buried in coal banks and oil fields was aboveground, cloaking the Earth in sweltering jungle. How about we call it “dinosaur weather”?

We environmentalists have always prided ourselves on being clear-eyed realists, so we need to face facts: it’s too late to avert this. We’re beginning the shift to renewable, carbon-neutral power sources, but too much carbon is already in the air, and fossil fuels still have far too much momentum. This planet is going to change, in ways we can’t fully foresee. The only question is how much damage we’re going to cause – how much worse it’s going to get before we stop digging up and burning the buried forests of the dinosaurs.

To get a glimpse of one possible answer, consider an engrossing site called the World Dream Bank, whose author imagines whole planets and then illustrates them in meticulous, plausible detail. Some are totally alien; some are strange variations on our own planet, hypothetical Earths from parallel universes. (There’s also a lot of weird pseudoscience in other sections of the site, which I obviously don’t endorse.)

One of these parallel worlds is called Dubia (and you’ll have to click through and read to see the explanation of why it’s called that). The concept is simple: it’s what Earth would look like with carbon-dioxide levels of 700 parts per million, about double what we currently have. This leads to massive climatic changes, which lead in turn to geographical changes: with no more polar ice caps, the sea level rises by over 100 meters, and the world is inundated.

Scary as that sounds, the overall impression you get if you read all the way through is that Dubia isn’t a desert wasteland or a barren inferno. It’s a lush, perfectly livable, even hospitable world. The problem is that its livable regions aren’t where our planet’s livable regions are. And to get there from here would entail disruptions on an unimaginable scale: the migrations of billions of people, cities and nations drowned or abandoned to desert, agriculture completely uprooted and restarted from scratch in the new fertile areas. There would be mass extinctions, famines, wars, and probably much worse. The human species would survive; whether civilization would make it through, and in what form, is a different question.

Here’s what we’d have to give up, to get from Earth to Dubia. Here’s what would be underwater in Europe:

Scandinavia, Spain, Brittany and Normandy are now islands, and the northern plain is gone, from Belgium to Murmansk. So are Athens, Venice, London, Brussels, Amsterdam, Hamburg, Copenhagen, Helsinki, St. Petersburg.

And in southern Asia:

India’s heartland, the Ganges Plain, is gone. The sea’s crept up the Brahmaputra valley, too, nearly to the Chinese border. The new breadbaskets are the green Deccan and what’s left of the Indus Valley, where the rains have increased. But even the Thar Desert and Pakistan’s dry mountains are grasslands, while the Punjab, straddling the Indo-Paki border, is downright lush. The Rann of Kutch is now a great sound; the coastal hills are an island-chain stretching all the way to Bombay, now a modest island town. Calcutta has, of course, been obliterated.

The nation of Bangladesh is gone too.

So is half Burma. And Thailand. And southern Cambodia and Vietnam… Singapore is long abandoned–just another rusty reef.

In America, Florida is of course gone. Louisiana is gone. Alabama and Mississippi are partially swallowed by a new inland sea. And in the Northeast:

New England’s now an island, cut off by the St. Lawrence and the narrow Hudson Straits. I won’t dwell on the view from the Hudson Palisades, looking out at the great rust-red towers rising from the sea–it’s such a cliché, repeatable all the way from Toronto to Boston to Washington. Instead let’s admire Niagara Falls pouring into the sea. No, no, I exaggerate–it’s still a good five miles from the beaches of Ontario Sound.

Is this humanity’s future? In all likelihood, we’ll never know personally. We’ve caught the first rumblings of it, but we’re not going to live to see this new world. It will be our distant descendants who’ll have to live with the full measure of what we’ve done to our planet. But the fact that we’ll escape the worst consequences doesn’t make our selfishness any less deplorable.

We may yet avoid the worst of this. Perhaps our economy will reach a tipping point and decarbonize faster than anyone expected; perhaps we’ll invent some kind of geoengineering technology to suck all the carbon out of the atmosphere and restore our climate to its former state. But it would be foolishness to count on something like this happening, and I’m increasingly pessimistic. I still believe that great things lie ahead for humanity; that our future will be more peaceful, more free and more enlightened than our present. But this will be another giant hurdle we’ll have to surmount to reach that future state, and like many of the others, it’s one we created for ourselves.
