Robot Apocalypse

The technologies behind automation are likely to improve exponentially. That means more and more industries will start losing jobs at an astounding rate as machines become increasingly capable.

Let’s say automation could reduce the number of employees needed in the retail industry (17 million workers) by 80%. That would eliminate more than 13.5 million jobs. Automation’s effect on this one industry alone could double our nation’s current number of unemployed workers.

And here’s the punchline: automation won’t just eat one industry. It will eat many, and it will eat them at ever-growing speed.

Our society is having a hard enough time coping with 9% unemployment. What happens if automation claims 100 million jobs in a decade?

Proponents of technology are quick to point out that humanity has adapted to these changes in the past, and that these technologies will create new jobs. But the historical record isn’t enough guidance this time: the sheer increase in speed will disrupt our ability to cope.

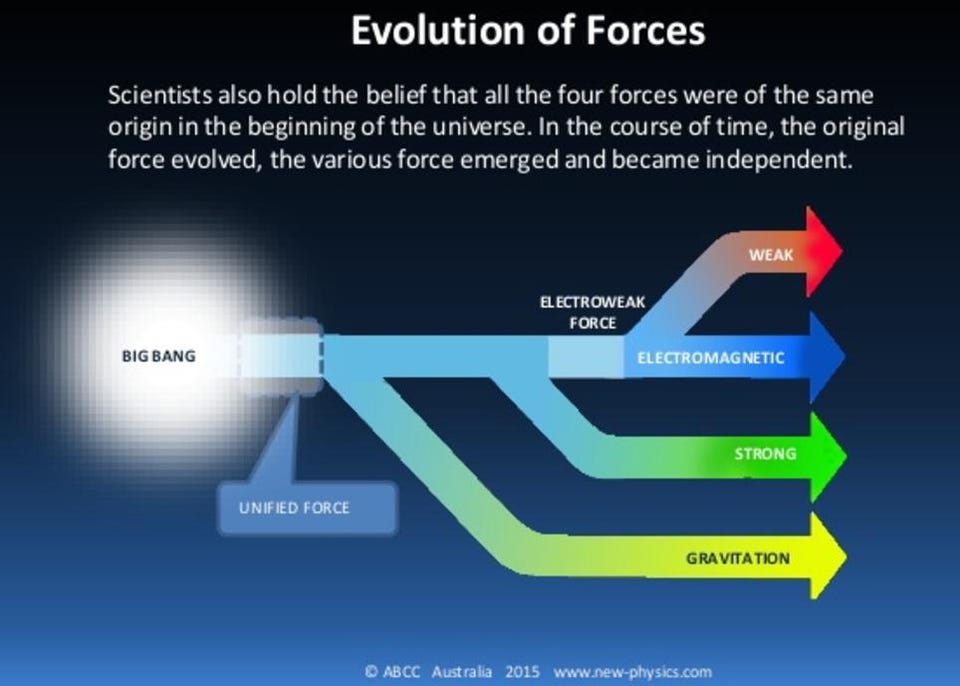

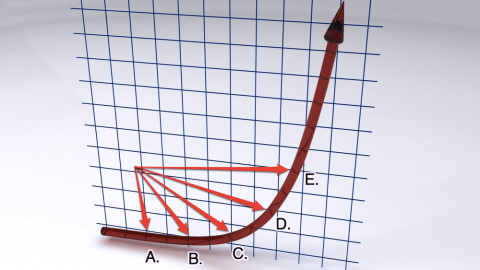

Exponential growth is a funny thing. It looks like slow growth for a long time, then it looks pretty manageable for a short period, and then it looks out of control.

For example, look at the chart in this post. The time between Point A and Point B is the same as the time between Point D and Point E.

Right now, I’d say our culture is right around Point C.

One disruptive automation technology between Point A and Point B (the printing press, for example) will be a lot easier to respond to than the huge number of disruptions that happen between Point D and Point E.
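To make the “same amount of time, very different change” idea concrete, here is a minimal sketch. It assumes, purely for illustration, that automation capability doubles once per time unit; the function names and the placement of Points A, B, D, and E at specific times are hypothetical stand-ins, not values read from the chart.

```python
# Hypothetical model: "capability" doubles once per time unit.
def capability(t: float, doubling_time: float = 1.0) -> float:
    """Capability at time t, starting from 1 at t = 0."""
    return 2 ** (t / doubling_time)


def growth_between(t_start: float, t_end: float) -> float:
    """How much capability is added during the window [t_start, t_end]."""
    return capability(t_end) - capability(t_start)


# Two windows of identical length (2 time units each).
early = growth_between(0, 2)   # stand-in for Point A -> Point B
late = growth_between(8, 10)   # stand-in for Point D -> Point E

print(f"Growth from A to B: {early:.0f}")
print(f"Growth from D to E: {late:.0f}")
print(f"The late window adds {late / early:.0f}x as much")
```

Under these assumptions, the later window adds 768 units of capability versus 3 for the earlier one, even though both windows last exactly as long. That gap is what makes the disruptions between D and E so much harder to absorb.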

This trend isn’t inherently a bad thing; it creates a lot of good. And even if we thought the costs outweighed the benefits, it would be near-impossible to stop automation’s progress.

But we should all realize that if we don’t have support systems set up for when automation starts displacing people too quickly, we’re going to have an angry mob chanting “kill technology.” If we reach that scenario, I see only two outcomes: massive war (possibly a nuclear holocaust) or our very own version of the Dark Ages.

Neither of those would be good for humanity, so let’s figure out how to avoid them.

This post was inspired by two intellectual efforts to “ring the alarm” on this concept. The first was Marshall Brain’s talk at the 2008 Singularity Summit, “Increasingly Automated Economy.” The second was this NYT article about the authors of the ebook “Race Against The Machine,” which I haven’t read yet but purchased this week for my Kindle.