Question: What challenges arise in doing research with young children?

Paul Bloom: I think there's tremendous insight to be gained by looking at babies and young children. It's basically a way of seeing human nature before it gets tainted and corrupted by culture and, if you test them young enough, before it even gets affected by language. So it's a way of seeing human nature in a very direct and sort of untainted way. But it's really difficult working with kids and with babies because they are not cooperative subjects, they are not socialized into the idea that they should cheerfully and cooperatively give you information. They're not like undergraduates, who you can bribe with beer money or course credit. And so you need to be somewhat clever in designing studies that tap their knowledge. You have to tap their knowledge in indirect and sometimes kind of interesting and subtle ways. And even when you do that, you know, some of them are just going to run away from you. Some babies are going to fall asleep or cry. Some kids are going to think it's hilarious to answer every question with the opposite of what, you know, they believe to be true.

And so you have to work around that in all sorts of ways. But as a developmental psychologist, I'm committed to the idea that the benefits outweigh the costs.

Question: How has your research affected your parenting and vice versa?

Paul Bloom: It's funny, I don't think, I'll be honest, I don't think anything I've ever learned as a developmental psychologist, as a scientist, has affected how I treat my kids. I think that at this stage of the game, there's a real disjunct between what the science tells us and actual practical applications, and I would distrust someone who told you otherwise.

But there's been a lot going the other way around. I mean, having kids has proven to be, for me, this amazing source of ideas, of anecdotes, of examples. I can test my own kids without human subject permission, so I pilot my ideas on them. And so it is a tremendous advantage to have kids if you're going to be a developmental psychologist.

Question: Why does religious belief exist?

Paul Bloom: Most humans are religious. If you ask most people, they'll tell you they belong to one or another religion. The most common religion on earth is Christianity, but Islam is coming a close second. And then there's just all sorts of religions. They differ in many ways, both in the beliefs that they have and in their practices, but they share certain common properties. So, all religions believe in some sort of supernatural entities, creatures without bodies but with minds, like gods and spirits and ghosts and angels. All religions believe that one or more of these supernatural entities created the earth and created animals and created us. And they all believe, in different forms, that we can survive the death of our body, that we are immortal.

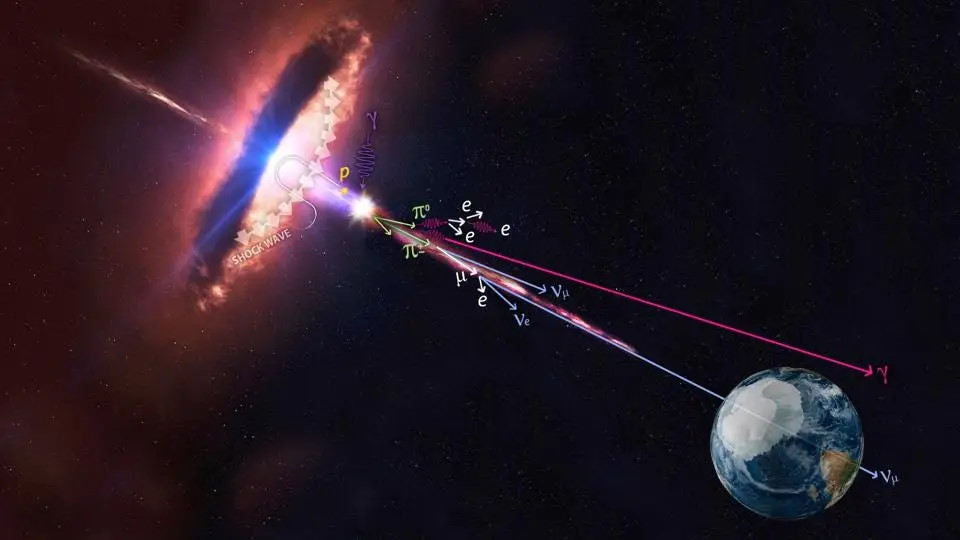

And I'm very interested in where these beliefs come from, why they exist at all. And you can imagine different extremes, so some scholars argue that they're social constructions, they're inventions of culture, and that's why they're universal. Others argue they're biological adaptations. They exist because of the selective advantage they gave to our ancestors.

I have a view which is different from both of those. I think they're accidents. I think they're accidental byproducts of cognitive systems that we've evolved for different reasons. More specifically, we've evolved a highly powerful social cognition. A highly powerful cognitive mechanism for thinking about the mental states of others and evaluating them and judging them. And I think that the system is so powerful that it sometimes leads to certain unpredicted byproducts. Things that we haven't evolved to do. So, for instance, we're highly animistic. We see consciousness and agency and humanity all over, even when, you know, a scientist would tell us it doesn't exist. We're natural creationists, in that when we see structure in the world, like a tree or a tiger, we think somebody must have made that; again, we're on the lookout for design. And I think we're natural-born dualists. And what I mean by that is that we see our minds as inherently separate from our bodies. And so this makes possible the idea that our mind could survive the destruction of our body, or that it could go to another body, as in reincarnation.

So, I think that these three habits of mind, animism, creationism, dualism, are present in all of us. They're not biological adaptations, they're accidents. But I think they're what make religious belief attractive and plausible and universal.

Question: What connection, if any, exists between religion and morality?

Paul Bloom: Many people, many religious people, many people in general, think that religion plays an important role in one's moral life. The very strong view, which I think nobody can take seriously, is that without religion we'd be monsters, we wouldn't care about other people. And the existence of atheists, who don't rape and murder and so on, seems to falsify that claim. But what about a more subtle claim, which is that religion on the whole makes you nicer? Well, this isn't a crazy theory, there's actually some statistical evidence in favor of it. So, for instance, religious people in the United States tend to give more to charity than atheists, even when you factor out religious charities, even when you think about things like donating blood or giving to the homeless.

And so you might think that religion is sort of ramping up your niceness in general, but I think there's another explanation, which is that religious people in the United States are part of the vast majority and they tend to be happier and they tend to be parts of communities to a greater extent than atheists. And it might be the happiness and the community nature that explains this difference in giving, not religion per se.

And the reason why I believe this to be correct is that you have now societies in Europe, like Scandinavian countries, that are quite a bit atheist, far more than the United States, and if religion makes you nice, you would expect these societies to be monstrous, with high rates of crime, of exploiting one another, and none of that's true. In fact, these are swell places to live. These atheist communities are filled with people who don't tend to murder each other, don't tend to rape each other, don't tend to have all sorts of social ills that the United States has.

So I think the relationship between religion and morality is going to be complicated and interesting. I think it won't be hard to find places where religion corrodes morality, where religious belief leads to people rejecting their gut and appropriate moral feelings and doing monstrous things. Religion is an ideology and all ideologies have that power. But for the most part, I think people can be religious and good and be atheists and good, and that's what the data shows us.

Question: How does your idea of “common-sense dualism” square with your idea of multiple inner selves?

Paul Bloom: The claim about dualism is that we naturally see the world as having physical bodies, things like chairs and tables, and our own bodies, and immaterial souls, things that have beliefs and desires and consciousness. And the fact that we're dualists makes all sorts of beliefs possible. It makes religious beliefs possible, because it makes it possible that we can survive the death of our body. It shapes how we think about morality, in that often when we judge whether something has moral status, when we're thinking about, like, a non-human animal, or a fetus, or an embryo, we might ask, does it or does it not have a soul? This is how people often frame moral issues. So that's my claim about dualism.

My claim about multiple selves is consistent with this, but it takes it in a bit of a different direction. The claim about multiple selves is that as a matter of fact, and this may not be common sense, but as a matter of fact, within each of us, there are different agents fighting it out for control and often these can clash. So a standard example that behavioral economists often use is that of someone who wants to diet. It's not, they would say, that you want cake, but you don't have the will to stop yourself from eating it. It's rather that you could think of it as two agents in your head. One is the cake eater, who, when there's no cake around, lies pretty dormant. The other one is the dieter, who doesn't want to have cake. But when there's cake around, the cake eater rises in power and can dominate the dieter. Now, what does it buy you to think in these terms? Well, it lets you make sense of the fact that one self can try to thwart the other self. So someone trying to diet may block the cake eater from access to cake by not buying cake, or by punishing himself for eating cake, and so on. And a lot of addictions and compulsions can be seen in terms of a clash. Can be seen on analogy with a clash between two people with different desires. So I think this is consistent with dualism, but it's not the same thing.

Dualism is a claim about our conception of the world. The multiple selves view is a claim about how things really work.

Question: How do these internal divisions inform our sense of moral reasoning?

Paul Bloom: It's an interesting question, how to reconcile what we know about people's moral actions, how morality works and why people choose to do good and evil, with our common sense notion. What I'm most interested in, in my own research about adults and kids, is the common sense notion. And the common sense notion very clearly involves notions of good and evil. So universally we see some people, everybody on earth sees some people around them as nice, decent, fair, honorable, and others as jerks, as bastards. And we believe the good people should be rewarded and the bad people should be punished. So you have that as a human universal.

What's interesting then is where does this universal come from? And I think we could be informed there by evolutionary thought. So a caricature view of Darwinian evolution says we've evolved to be entirely self-motivating, self-interested, perhaps interested as well in our family, but not beyond that. But over the last many years, there's been a more sort of subtle and complicated notion of evolution which grants the fact that some moral intuitions and moral actions might themselves be biological adaptations. We might have evolved them because they're useful things to have for creatures like us that live in small interconnected groups.

Now, if that's right, then you should expect moral intuitions and moral actions to show up early on in development, before you get exposed to or immersed in the culture. And this is some of the research I'm doing. I'm doing some of this in collaboration with my colleagues, and my wife, Karen Wynn, at Yale University, in their infant lab, as well as with Kiley Hamlin, who works on these projects, and what we do is we show babies different situations. So, for instance, we might show babies a situation where somebody's trying to get up a hill and one character helps it up the hill. Then the same thing, it's trying to get up a hill, and another character pushes it down the hill. And then we see who do babies like more? Do they like the one who helped it or the one who hindered it? We find that, to a tremendous extent, babies long before they reach their first birthday prefer the character that helped the guy up the hill and don't like the one that pushed him down the hill.

What about reward and punishment? Well, in some recent work that we just completed, we find that, again, one-year-olds, kids who've just turned one, want to reward the character who helped the other guy up the hill and want to punish the character who pushed the guy down the hill. It gets more sophisticated than that. What if you show babies these interactions and then show them someone else rewarding the good guy and punishing the bad guy? We find babies like such a person. But what if somebody punishes the good guy and rewards the bad guy? Babies hate that person and believe that that person should be punished, too, for his misapplication of reward and punishment.

Now, I don't think babies know all there is to know about morality. But I think this work, and a lot of other work that sort of coincides with this, suggests that some fundamental moral understanding is there from the get-go. Part of our evolved understanding, our evolved social capacity to deal with other people.

Question: How does disgust inform our moral reasoning?

Paul Bloom: There's a big debate in the field over how we make our moral judgments. And a lot of scientific debates are fairly abstract and don't connect to public policy, but this matters. Because this asks questions like, what underlies our intuitions about abortion? Or gay marriage? Or the war in Afghanistan? And a lot of psychologists push a very hard line here, arguing that our moral intuitions are driven by gut feelings, by gut emotional reactions. We might tell these elaborate stories, "Oh, I think gay marriage is fine because I've got a bunch of arguments," but the arguments really have nothing to do with why I've come to my opinion. I've come to my opinion because of my feelings of empathy or anger or disgust, because I want to affiliate with other people around me and they share that opinion, because of how I was raised. Many psychologists believe rationality is irrelevant for adult moral judgments. I think that's mistaken. I think that's way too strong. I think that there's demonstration after demonstration of how people's rational, deliberative thoughts can actually shift their moral views, lead to a moral decision, lead to moral action, cause people to change their mind and then change the minds of others. I think unless you allow for an important role for rationality, you're unable to explain moral progress or moral change. You're unable to explain the fact that right now, you and I have all sorts of different views about gay marriage, racism, slavery, than people 100 years ago, even 50 years ago. So I'm a big fan of rationality.

But a lot of my research does address the question of the role of the emotions in our moral judgment and our moral decision making and again, we typically use people's attitudes toward homosexuality and gay marriage as a case study. And this is some work I've done with David **** at Cornell University, as well as with other colleagues, and what we find is that the emotion of disgust plays a very strong role in one's feelings about gay marriage and about gay people more generally. So we find that people who are disgust-sensitive, so we all have varying sensitivity, we're all grossed out by some things and not others. Some of us are grossed out more easily than others. Those of us who are easily grossed out tend to be more morally disapproving of certain human activities, including homosexuality.

Also, you can do this in the lab. So following some work by Jonathan Haidt and his colleagues, what we did was, we put people in the lab, we'd ask them questions about all sorts of activities, including their attitudes about gay marriage. For half the people, we'd just put them in a lab. For the other half of people, we evoked disgust. We did that actually by spraying a fart spray in the room, so people get a bit grossed out. Being grossed out makes you meaner, it makes you less approving, it makes you sterner in certain ways.

So, my view on the interplay between reason and emotion in adult moral judgment is it's a complicated and very rich story. But it seems inescapable that our emotions play a role in our moral judgment, even in cases where we might not know this is happening. And even in cases where we'd rather it wasn't happening.

Question: What do young children understand about art?

Paul Bloom: If you read a developmental psychology textbook from 10 years ago, or if you just asked a psychologist, "What do kids know about art?" what they would say is, "Children start off as realists about art." So, you know, if they see a picture of a bunny that looks like a bunny, they'll say, "bunny," because it looks like a bunny. When they draw pictures and name them, they'll name them based on what they look like. Kids start off realists.

Now, adults can do all sorts of crazy things. Adults can have abstract art, we can understand that a scribble can be a person, we can understand caricature and nonrepresentational art. But that's adult stuff. Kids start off simple and representational. And I believed that, until I had kids of my own. And so the case I remembered that got me interested in art in the first place is my son, Max, did a painting and it was just, he's a horrible artist, just like me, it was just a scribble of colored paints, and he held it up to me and I said, "What is it?" And he said, "It's an airplane." It didn't look at all like an airplane. And then I got to thinking and I realized children draw all the time and they name their artwork, but their artwork doesn't look like anything until they get old enough to get more proficient. So I wondered what was going on.

And the idea which I explored, and this is with a then-student of mine, Lori Markson, we explored the question of whether children actually, when they're naming pictures, are doing something extremely smart. When they're naming pictures, they're not looking at what the picture looks like, rather, they're trying to figure out what the picture was intended to be. So for their own pictures, it's easy. Max was intending to draw an airplane, so it was an airplane, it didn't matter what it looked like. We did experiments finding this is true even for their understanding of pictures made by adults. This is like two-year-olds. You look at an object, you make a picture, but it's just a scribble, you show it to the kid and say, "What's this?" Kid will say, "It's that thing over there." They're smart enough to realize that pictures are of what the artist wants them to be.

And I think we should take the common-sense, traditional notion of what kids know about art and flip it around. I think kids start off with a very abstract understanding of art, linked up with notions like artistic intention. And it's only later that they understand the conventions of realism. It's only later that they might get the idea that for something to be a picture of a bunny, it has to look like a bunny.

Question: Do adults take the same approach as children to understanding abstract art?

Paul Bloom: The motivation for a lot of this work on children comes from philosophical analysis of what adults do when appreciating art. So when adults try to make sense of an artwork, we often ask ourselves, even though critics sometimes say we shouldn't, but we often ask ourselves, "What was going on in the artist's mind? What was he intending to do? What did she want to depict?" And this is just a core part of how we name artwork, how we categorize artwork, and how we appreciate artwork. Philosophers like Arthur Danto point out that the very notion of what art is, as opposed to other things, take Duchamp’s Fountain, which looks a lot like a urinal, but is viewed as an artwork, the very notion of what art is, is based on artistic intent. Something can get transformed from an everyday object to an artwork under the right circumstances of what people want it to be.

And in fact, in some other research I've done with children, we find that you show children a canvas with paint splashed on it, and in one group you tell the kids, "Somebody spilled paint on this, what is it?" And they'll say, "It's a mess, it's whatever." But if you tell the same kids, "Somebody worked very hard on this for hours and hours," what they might say is, "It's a painting." Their notion of what a painting is doesn't rest just on what something looks like, rather it rests on their belief about how it was created.

Question: Why do humans enjoy fiction?

Paul Bloom: Fiction is such a puzzle. You know, you'd expect, as good Darwinian creatures, we would evolve to be fascinated with how the world really is, and we would use language to convey real-world information, we'd be obsessed with knowing the way things are, and we would entirely reject stories that aren't true. They're useless. But that's not the way we work. We love stories. Most average humans spend, I think, most of their day-to-day life reading, watching TV, going to the movies, or daydreaming. We spend all or most of our lives in imagination, and imagination is our favorite leisure activity. That's a real puzzle.

So the question is, why do we get pleasure from doing this? And I think there's two main reasons. One reason is that our system that scans reality and gets pleasure from reality is not perfect and it can be deceived. And what fiction often is, is a form of purposeful deception. If I really would enjoy winning the World Series of Poker, then what I can do to give myself pleasure is to imagine winning the World Series of Poker and that will press the same pleasure buttons that really winning it would. Not to the same extent, it's always more pallid to imagine something than to experience it, but to some extent. If somebody enjoys sexual intercourse, they would probably prefer to have sexual intercourse, but failing that, they could appeal to pornography, that would stimulate their mind in some way as if it was the sort of thing they're aspiring to.

And one reason why fiction is pleasurable is, it's reality-like. Often we seek out in fiction pleasurable experiences that we want to have in the real world and it's close enough to be enjoyable.

But that only explains some of fiction. Some of the stories we like, and this is a real puzzle, are unpleasant. People are drawn to tragedies and are drawn to horror movies. Now, it's not hard to explain why I might like to see a movie where a professor wins a million dollars and everything, "Oh, that's great, I can imagine myself in that situation." Why would I want to see a movie where a professor is captured by a sadistic axe murderer and chopped to bits? Why would I seek out something so unpleasant? I think some of what goes on in fiction is that we also use it as a way to imagine alternative worlds and we imagine certain sorts of alternative worlds, we imagine worst-case scenarios, and we do this because there's an adaptive value to exploring in the imagination possibilities that might occur, as a way to prepare for them, to plan for them. Any athlete thinks ahead to, well, what's going to happen if this happens? What's going to happen if that happens? And these alternatives aren't always pleasant ones. Anybody preparing for a **** or preparing for combat always imagines situations that are bad situations and does this because it helps prepare for them when they really occur. And I think that's what fiction is. I think what a lot of fiction is, is the imagining of the worst so as to prepare ourselves.

Now, developmentally, I'm interested in the origin of this appreciation of fiction. We know very young children like stories. We know, and this is some work I've done with Deena Weisberg, who's now **** directors, Deena and I looked at children's understanding of stories, again, they're very sophisticated. So they know that their best friend is real and Batman is make-believe. They also know that Batman thinks that SpongeBob SquarePants is make-believe, those are in different story worlds, but Batman thinks Robin is real, because they're in the same story world. Incredibly sophisticated knowledge. And a lot of my work has explored what children know about fiction.

What I'm also interested in, more and more recently, is why children enjoy fiction. And I think in part, it is they enjoy fiction that simulates real-world pleasures. But I think they also enjoy fiction that exposes them to worst-case scenarios. I think that even young children are drawn to some extent to tragedy and horror. And we're doing some experiments in my lab to see whether children will actually choose an unpleasant fiction over a pleasant fiction under the right circumstances. And our prediction is that they will.

Question: Do stories help reconcile what you’ve called our multiple inner selves?

Paul Bloom: So a different function of fiction that people have been interested in is as a way to give voice to different parts of ourselves. To simulate, not just situations, but different personalities and different characters. And this shows up in its sharpest form in situations, the more modern situations, where people can create alternative selves. As when we become avatars in a computer game. Or when we do various forms of role playing online. And what people will often do in these situations, like Second Life, for instance, is they'll create a persona and explore it. If I'm a man, I might explore what it would be like to be a woman. If I'm passive, I might explore what it's like to be aggressive. And this serves, I think, a useful function in that it's a way of, to put it perhaps metaphorically, giving voice to the multiple parts of ourselves. And that can help us later figure out how to deal with this, how to cope with these things.

So you see this all the time in psychodynamic and psychoanalytic exercises, where you speak in different voices. But I think any kid who goes onto Second Life or World of Warcraft or even just, you know, any blogger, gets a chance to explore different facets of him or herself.

Question: What learning capacities do we lose after childhood?

Paul Bloom: So one interesting question is, in what ways are children superior to adults? What gifts and capacities do children have that adults lack? And I think that there's two ways of answering that question that give intersecting answers. One is evolutionary, which is what would you expect a child to be good at, given that what childhood is, is a period before maturity where you get everything up to speed. The second one is developmental psychology and observation. What do we see that children do that's really good and that's better than adults? And I think one answer, for instance, is language. So children are better at learning language than adults, because that's the main task of childhood. By the time you're an adult, you had better know a language and there's not much evolutionary pressure to wire up your brain to learn more, you're done, you should know it by then.

Children are, I think, better learners regarding motor skills, possibly regarding certain aspects of social interaction. We'd be really screwed if we had to start our life over again as children with our brains right now, because I think we lose the plasticity and flexibility.

One claim, which I'm not sure about, is whether children are better pretenders and better players than adults. And that I'm not sure, but there's a romantic notion that children know how to play and they know how to pretend and we lose this as adults. But I look around at my own life and the life of my friends and life of other people, and all I see is play and pretend. I see people, you know, playing video games, going to movies, reading books, doing all sorts of things. So I'm not sure that the play part ever goes away, I think that might be just as strong in adults as it is with kids.

Question: What is the concept behind “How Pleasure Works”?

Paul Bloom: “How Pleasure Works” explores the pleasures of everyday life. So it starts off with seemingly simple pleasures, like food and sex and love, and then it goes on to pleasures like the pleasure we get from owning certain objects, the pleasure we get from reading books and going to movies, the pleasure we get from art, even the pleasure of religious ritual. And I focus on these pleasures in all sorts of ways and I say different things about them. But the main argument of the book is that pleasure is deep. What I mean by that is that when we get pleasure from something, we don't just resonate to its surface, superficial appearance, we respond instead to what we think that thing really is, where it came from, what its history is, what's inside it. So for sex, it critically matters for sexual arousal and sexual interest who you think the person is. Do you think it's a man or a woman, is it a relative or a stranger, how old is that person, what's that person's sexual history? All of those factors, invisible to the eye, have a deep effect on sexual interest. For food, it matters enormously what you think you're eating. Not just in sort of the abstract consciousness of what you choose to buy or what you choose to put in your mouth, but in how it tastes. The price of food, how natural it is, how healthy it is, are all considerations that affect your taste experience of it. Maybe the most practical application of this is wine. So there's now several studies showing that the more expensive you believe a bottle of wine to be, the better it will taste to you.

In some of my own work, I've looked at the pleasure of consumer products. So people will pay more for something and will enjoy it more if they believe it was owned by a celebrity. If they believe it was touched by a celebrity. In fact, a way to make such an object lose value is to wash it thoroughly. They don't want it washed because it's as if it washes off the essence of the person who touched it.

I got into this work because I'm interested in a claim that cognitive psychologists and philosophers have made about the human mind, which is that we're common-sense essentialists. When we understand something, like when we name it or categorize it, or choose what to do with it, we don't just respond to what it looks like, we respond to what we believe its deeper essence is. Now, this has commonly been applied to more cold-blooded tasks, like naming and categorization. I wrote this book because I was interested in the idea that essentialism applies more generally and that essentialism can help explain some mysteries of our everyday pleasures.

Question: Why can our pleasure in something change when its essence doesn’t?

Paul Bloom: I think essentialism is an important part of the story and it's something which common theories of pleasure tend to miss. But I wouldn't argue for a minute against the idea that other factors affect pleasure. So one factor that affects pleasure is simple experience. Faces, for instance, will look more attractive the longer you look at them. Something psychologists have called the mere exposure effect. When it comes to music and art, you often get what looks like a sort of inverted U-shaped curve. So you start off, when you hear a new song, you might not like it that much, then the more you hear it, the more you like it, the more you like it, until it reaches a certain point where boredom kicks in, and now you don't like it any more. And this sort of inverted U-shaped curve shows up for all sorts of things, from the foods we eat to the books we read, to the people we encounter.

So I don't doubt for a minute that there are low-level processes like mere exposure and like simple sensory pleasures that exist. But the argument I make throughout the book is that we tend to overestimate how much of our pleasure is determined by these simple processes. People believe, for instance, that when they taste wine, the pleasure they get from wine is due to the chemical composition of what they're drinking. It's due to what's hitting their tongue and their nose. And when you tell them that's not true, that their pleasure is easily manipulated by telling them different things about the wine, like where it came from, how much it cost, and so on, people balk at this, they say, "That might work for other people, but not for me, I taste the wine." But one of the great surprises, I think, from psychology, is the extent to which our everyday experiences are shaped by our beliefs. Even in cases where we're unconscious that we have these beliefs and that these beliefs are playing that role.

Question: To what extent are our likes and dislikes innate?

Paul Bloom: It's a very hard question, looking at human differences, to try to explain their origin. So some people like cheese, I don't like it at all. Some people are gay, others are straight. Some people have radically different tastes in movies and books, in artwork, in ritual. And I'll admit, for the most part, these are mysterious. There's not much evidence that these tastes can be substantially shaped by your upbringing. There's also not much evidence that these tastes are genetically determined. So I think what you have is a picture that goes like this. We have innate universals of taste. Everybody likes a melody of some sort, everybody responds in some way to sexual stimuli, every baby likes the taste of sweet milk, everybody likes art. But then you get these differences, these differences across cultures, different cultures have different music, and these differences within cultures. Your taste in music isn't going to be the same as my taste in music. And music is largely mysterious. One of the great puzzles of modern psychology is to explain these individual differences and I think we're largely at a loss.

Question: What’s the most unusual or exciting project you’re working on now?

Paul Bloom: There's a lot going on in my lab that I'm very, very excited about. I'll tell you about one study that's kind of cool, and it's with a graduate student named ****. We're interested in whether children will punish other people. Now that much we actually know, that they do. But the further question is, will they punish other people and suffer to do it? So the design that we came up with is, we put children in a room, we're testing four- and five-year-olds, and we show them somebody in another room who behaves horribly, she knocks over someone else's blocks, she laughs, she's terrible. And that person says, "I can't wait for my broccoli, I have a big plate of broccoli coming, I can't wait for it." And then the experimenter goes over to the child with a big plate of broccoli and says, "I'm going to bring this over to that person, you want to eat some of it?" And it's set up so that children know that if they eat it, that person won't get their broccoli. And we also know that the kids hate the broccoli, for the most part, we also test them to see if they hate the broccoli. The question we're interested in is will they force broccoli down their mouths to punish a stranger? Are they that instinctively spiteful? And right now, the data looks promising. We have some lovely film clips of children cramming broccoli into their mouth, almost weeping, because they hate the stuff, so as to stop this other person from getting it.

And I think this is just part of a line of studies that show how powerful our moral impulses are, how deep the desire to reward the good and punish the bad runs in all of us, including young children.

Recorded on November 20, 2009

Interviewed by Austin Allen