The Downside of Loyalty, from Afghanistan to Pennsylvania

Whatever the facts of the crimes in this week’s pair of institutional scandals (and it bears saying that trials in the Afghanistan “kill team” case are ongoing, while Jerry Sandusky hasn’t yet been convicted of anything), the facts about the institutional responses by the Army and Penn State are not in dispute. And they’re yet another reminder that, as Frank Bruni remarked the other day, institutions are bad at policing themselves. Which is putting it very mildly: So… you’re pretty sure you see one of your superiors sodomizing a 10-year-old and you … go home and call your Dad? You get a phone call saying soldiers are killing people for sport and you … say there’s nothing you can do? Say what, now? We outsiders are astounded, and we can only ask: How could it come to this? Why aren’t institutions good at policing themselves?

Perhaps, though, that’s the wrong question. It’s premised on a rationalist model of moral behavior, which holds that an evil act must evoke the same response in all people at all times. Because child abuse and the murder of innocents are horrible, we’re shocked that an Army sergeant shrugged off a phone call about the “kill team” from the father of a troubled soldier. We’re amazed that Penn State’s collective response to a report that Sandusky raped a boy on its property was to tell Sandusky to stop bringing boys to campus.

It shocks the conscience, when you think about it in the abstract. But of course no one lives through these cases in the abstract, and there is no such thing as “the” conscience. There are only individual consciences, embedded in individual minds, and those minds are bound together into collective entities like families, nations, religions, football fan groups and countless other tribal-feeling forms of affiliation. And when minds are so bound into collectives, the bonds have powerful effects.

Specifically, it is immensely difficult to think ill of someone who brings glory and honor to your group. This is, of course, monumentally unfair: If you think Steve Jobs was a bully and a crank, it should not make any difference that he brought forth so many beautiful products that made you an Apple guy. But it does. Would students at Penn State have rioted for Joe Paterno if he happened to be just some 84-year-old neighbor who failed to investigate what he’d heard about Sandusky? Would Calvin Gibbs, this week convicted of organizing the murder-for-the-hell-of-it of harmless Afghan civilians, have had much influence over his comrades if he hadn’t been good at his job? (“What you want a soldier to look like, act like, speak like,” one of them told Luke Mogelson. “He’s like the epitome of soldier.”)

It’s also difficult, once you’ve developed loyalty to an institution, group or profession, to ignore its needs. French has a useful term for the effects of often-emotional, often-rigorous training: déformation professionnelle. It refers to the difference between the way you or I might see a bloody wound and the way it would look to a doctor, or a soldier, or a war reporter. Part of that difference is desirable (who needs a doctor who freaks out at the sight of a broken shin?). But part is undesirable, a kind of unfortunate corollary: The tendency to excuse, explain away and defend; the tendency not to see at all.

I would bet a lot that this effect doesn’t manifest itself in conscious thoughts like “I’d better not go to the police, it will make us all look bad.” I suspect it’s an unconscious influence on thought, emotion and even perception (“that can’t be what I think it is”), arising from a loyalty formed and maintained outside of awareness.

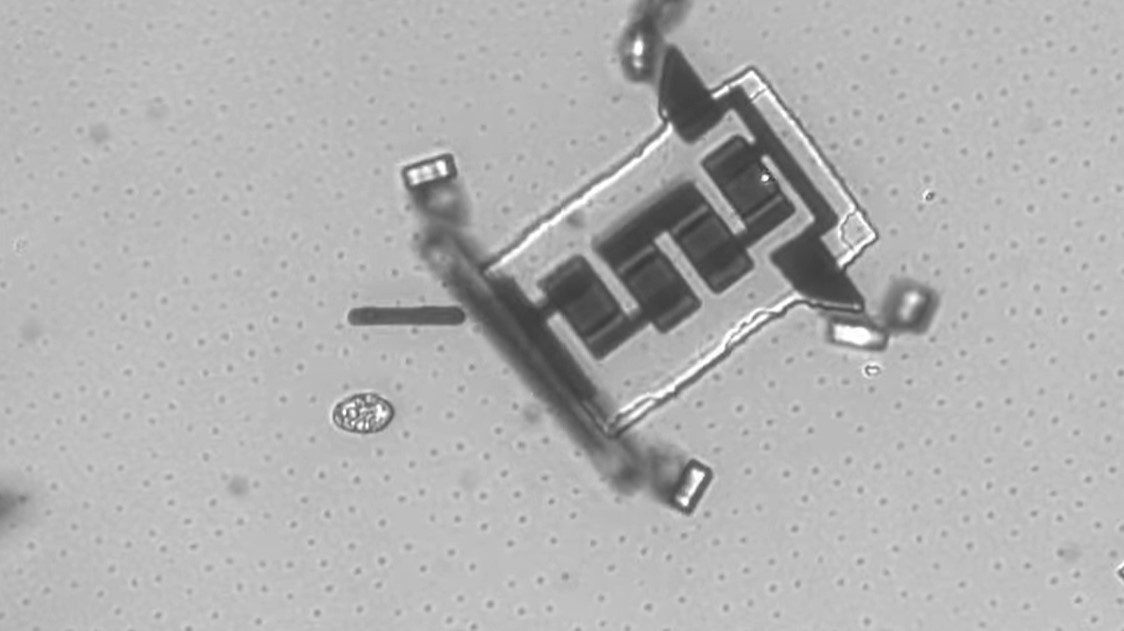

How might that work? Well, perhaps we’re wired to get a jolt of pleasure from fitting in. In this study, published last year, Daniel K. Campbell-Meiklejohn and his colleagues put 28 volunteers into a functional MRI scanner and had them rate songs and then see how their opinions compared to those of two supposed expert reviewers. When a volunteer found that both “experts” agreed with his or her rating of a song, activity increased in a region of the brain, the ventral striatum, that’s been found to spark when people receive a reward. (The ventral striatum is considered to be heavily involved in learning.) Ventral striatum activity during agreement with the supposed experts looked quite a bit like what happened when a volunteer received a tangible reward (a token that would permit download of a well-liked song).

Lying in a brain scanner is a weird experience (you’re immobilized in a metal tube, amidst a lot of unearthly clanging and banging). So if the experimenters saw this effect there, after presenting two fake experts moments before, I think it’s possible that the real-world pleasure of agreeing with authority figures might be quite a bit stronger.

In this groping for explanation, I don’t mean in any way to excuse institutional indifference in the Army or at Penn State. Nor do I mean to suggest that there should be any “you had to be there” defense for child abuse or murder.

I’m not even saying institutional loyalty is a bad thing. After all, allegiance to collective identity can also make people shun vice and shame, and do the right thing. In fact, the most successful way to fight perverse loyalty is with better loyalty—a fact that politicians seem to understand instinctively. Governor Tom Corbett of Pennsylvania, for example, didn’t tell rioting students to remember football is just a game. Instead, he said, “please behave and demonstrate your pride in Penn State.” Similarly, one of the Gibbs prosecutors told the court: “He betrayed his unit, and with the flag of his nation blazoned across his chest thousands of miles from home, he betrayed his nation.” Institutional loyalty can lead to good ends as well as bad. Let’s not talk, though, as if it’s irrelevant.

Campbell-Meiklejohn, D., Bach, D., Roepstorff, A., Dolan, R., & Frith, C. (2010). How the Opinion of Others Affects Our Valuation of Objects. Current Biology, 20(13), 1165-1170. DOI: 10.1016/j.cub.2010.04.055