Study: Combative People “Remember” Hostile Acts That They Didn’t Commit

What kind of people confess to crimes they didn’t commit? You might imagine they’re sleepless and terrified, with cops telling them there’s already proof of their guilt. And you’d be right (in experiments, people told there’s video of their “crime” confess at very high rates). But susceptibility to pressure varies from person to person. People who are young, depressed or exceptionally respectful of authority have been found to be more prone to false confessions. Now a new study proposes another high-risk group: Aggressive people, it found, can be convinced that they committed hostile acts that never actually happened.

Though courts continue to presume that people know what the heck they’re talking about when they describe their experiences, researchers have been showing for some time that false memories are easy to plant. The result can be eyewitnesses who are certain they saw what they could not have seen, and victims who passionately believe they went through ordeals that never happened. In this new study, published last summer in the journal Acta Psychologica, Cara Laney and Melanie K.T. Takarangi note that most of the false memories researchers have implanted have cast people as witnesses or victims. (For example, people in the lab have come to believe falsely that as children they had spent the night in the hospital or witnessed a bad fight between their parents.) Yet the authors suspected that aggressive people, because of the way they see the world, might be exceptionally prone to false memories in which they were not victims or witnesses but the bad guys themselves.

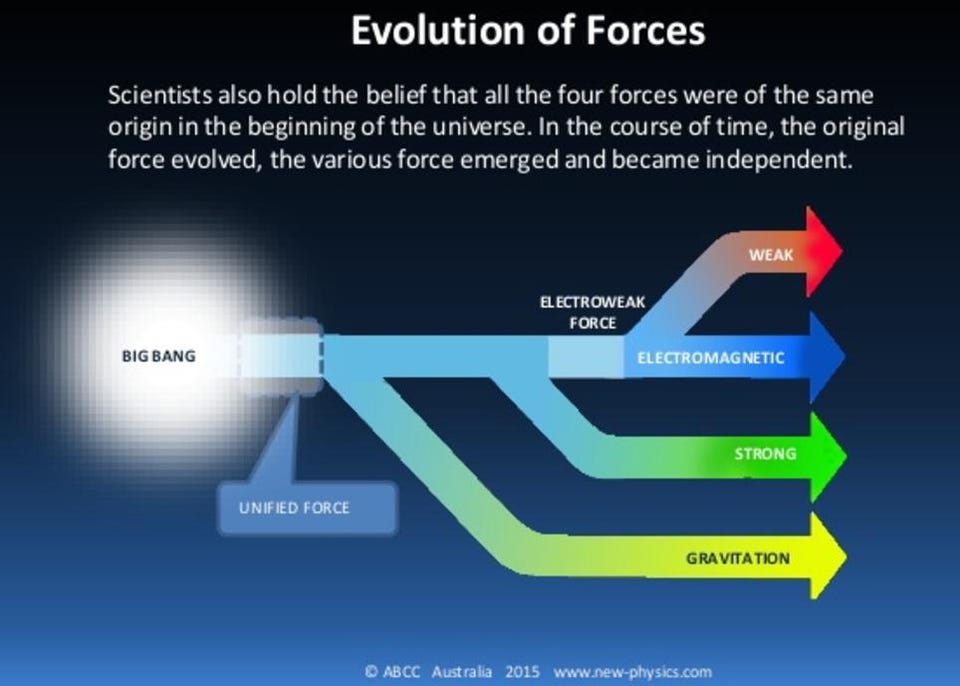

We all go through life having to make decisions about people and situations without a lot of information—is that a mugger or a guy looking for his house keys? Is this a good place to eat or the Casa Ptomaine? We don’t have hours to research each case, so we use the few visible facts we have to predict other, related facts. It’s our personal version of Big Data: Just as Netflix cast Kevin Spacey in House of Cards because it knew that viewers who liked the original BBC House of Cards also liked Spacey, so you conclude that the guy with the fedora and the gas-station vintage workshirt is a hipster because you “know” guys who make those fashion choices are guys who like artisanal beers, indie movies and loft bands. The difference is that Netflix is crunching vast amounts of information about behavior to arrive at its net of linked traits, whereas you are kind of winging it with the few data points that you’ve gathered through personal experience. Nonetheless, the principle is the same—both you and the corporation are treating each fact you encounter as a link in a vast net of associated facts. Pull this one up, these others must follow.

These interwoven chains of association have been called “schemas,” “scripts” and “frames,” depending on the discipline using them. All are terms for the knitting together of traits in a way that lets me use some facts to infer other facts: the algorithm that lets me see one slice of cake with a candle in it and two balloons and immediately expect to hear “Happy Birthday” and see presents and a happy, if slightly embarrassed, person at a table. But that’s me. You might differ, because schemas aren’t standardized. Everyone builds his or her own out of personal experience. This means, of course, that the same physical facts call up different schemas in different people. For example, I have a set of associations and expectations when a police officer asks me to hold on for a moment and answer a question. If I had been “stopped and frisked” repeatedly, my schema for this experience would be different, and a lot more negative.

Aggressive people, write Laney and Takarangi, have aggressive schemas. The net of interrelated concepts that they call up often involves expectations of a fight. For example, in an earlier study Takarangi had shown volunteers some words whose interpretation was ambiguous (“cut,” “whip” and “mug” could be about violence, but they could also refer to a quiet morning in the kitchen). When recalling those words, people who had scored high on a measure of aggression tended to add other words that they had not seen, such as “hit” and “stab,” which removed the ambiguity and made the list, as they recalled it, even more combative. (Those results hark back to the first experiments that established that people use schemas: British students recalling a Native American folk tale added all manner of “American Indian” details that were not in the original.)

A schema that interprets people and experiences as aggressive can betray its owner, the authors argue. It inclines him to expect aggression not only in others but in himself, and that makes him vulnerable to believing he was aggressive in the past. (I’m using the male pronoun here because men commit more violent crimes than women do, but there were women in this study, and no one is claiming that aggression is confined to one gender.)

In their study Laney and Takarangi worked with 187 undergraduates at the University of Leicester who had filled out a variety of online questionnaires, one of which measured their views of their own aggressiveness. Another questionnaire asked them to say yes or no to 37 statements about their teenage years (for example, “you cheated on an important test” or “you got a tattoo or piercing”). They were also asked to rate how emotional such an event would have been for them (whether or not it had happened) and, for the yes answers, how confident they were that their memories were accurate.

In the lab a week later, each student received a summary of results derived from the questionnaires—a personality profile and a statement about his or her “behavioral style.” Then 101 students in the group read a statement that said “the adolescent experience that has been most influential in shaping this behavioral style is:” followed by one of three items from the list they had seen earlier: (1) “You were punched and got a black eye”; (2) “You punched someone and gave them a black eye”; (3) “You spread malicious gossip about someone.” That gave the researchers three groups of students, each group reacting to a different statement.

The key here is that none of these statements about the past was true. The volunteers who really had had those experiences had been filtered out, along with a control group of students who weren’t served any untruths. Each student who was given a false statement then answered some more questions, including one about how confident he or she was about the nonexistent event just described.

Out of those 101 students, 40 assimilated the false memories: five thought they “were punched,” 17 thought they had “punched someone” and 18 thought they had “spread malicious gossip.” (As the researchers note, false memories of hostile acts were twice as successful as false memories of victimhood.)

Those who accepted a false memory of being aggressive had twice as many true memories of aggressive acts (like “you were a school bully” or “you carried a weapon”) as did those who didn’t take the bait. Moreover, statistical analysis showed that the volunteers who accepted the false memory scored higher on the measure of aggression than did those who resisted. In fact, write the researchers, “the greater their propensity towards aggression, the more likely that a person will develop an aggressive false memory.” Told they had committed hostile acts, the more aggressive people in the experiment simply accepted that they had, fitting that “memory” into their picture of themselves.

The problems of false memories, inaccurate eyewitnesses and untrue confessions have gotten some acknowledgement from the American legal system. Perhaps that legal system should also be worrying about a different and particularly insidious form of false memory: The one in which someone cops to violent acts not because he committed them but because he’s seen—and sees himself—as the type of guy who does that sort of thing.

Laney, C., & Takarangi, M. K. T. (2013). False memories for aggressive acts. Acta Psychologica, 143(2), 227–234. PMID: 23639921

Illustration: Detail from A Procession of Flagellants by Goya, via Wikimedia

Follow me on Twitter: @davidberreby