During a conversation at a cocktail party, you have to make one of two choices: actually listen to the person speaking to you, or nod your head in assent while you focus on a more interesting conversation.

Question: What is attention on a neurological level?

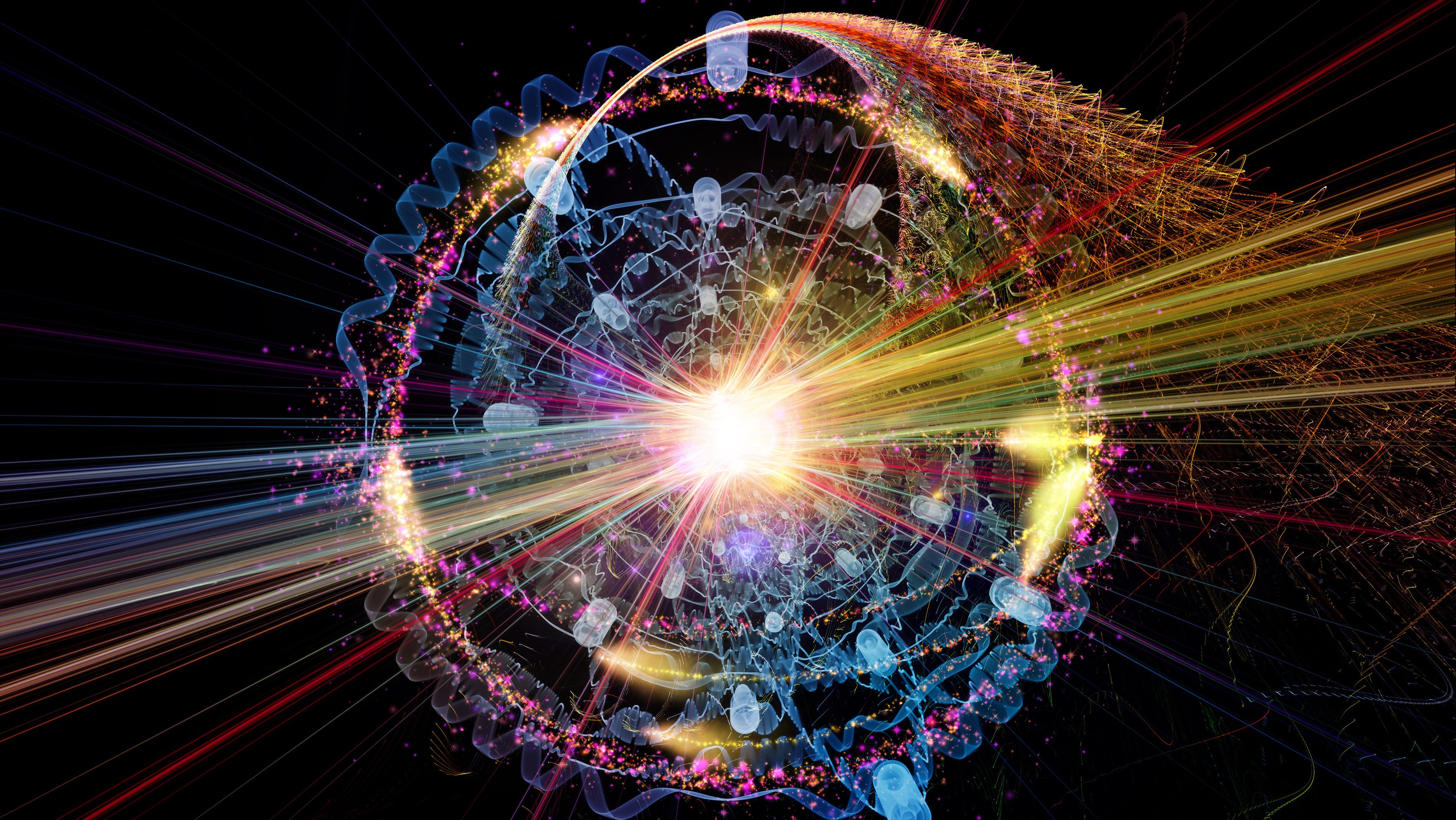

Tony Zador: Yeah, the way I think about attention is really as a problem of routing, routing signals. So actually we know a lot about how signals come in through your sensory apparatus, through your ears and your eyes. We know an awful lot about how the retina works, how the cochlea works. We understand how the signals travel up and eventually percolate up to the cortex. In the case of sound they percolate up to the auditory cortex, and we know a fair amount about how they look once they get there. After that we kind of lose track of them, and we can’t really pick up the signals until they’re coming out the other end. We know a lot about movement. We understand an awful lot about what happens when a nerve impulse gets to a muscle and how muscles contract. That’s been pretty well understood for 50 years or something, more than that.

So where we really lose track of them is when they enter the early parts of the sensory cortex, and we pick them up again sort of on the other side. And so attention is one of the ways in which signals can find their destination, if you like. So think about it in terms of a really specific problem. Imagine that I ask you to raise your right hand when I say "go." Okay, so I say "go" and you raise your right hand. "Go," raise your right hand. Now let’s change it, and I say "go" and you raise your left hand. "Go," raise your left hand. So somehow the same signal coming in through your ear is producing different activity at the other end. Somehow the signal on the sensory side had to be routed to either the muscles of your right hand or the muscles of your left hand.

Now attention is a special case of that kind of general routing problem that the brain faces all the time. When you attend to, let’s say, sounds rather than your visual input, or when you attend to one particular auditory input out of many, what you’re doing is selecting some subset of the inputs (in this case, the ones coming into your ears) and subjecting them to further processing. You’re taking those signals and routing them downstream and doing stuff with them. How that routing happens, that’s the aspect of attention that my lab focuses on.

So the basic setup for the problem that I’m interested in is this: Imagine that you’re at a cocktail party. There are a bunch of conversations going on and you’re talking to someone, but there is all this distracting stuff going on on the sidelines. You can make a choice. You can either focus on the person you’re talking to and ignore the rest, or, as often happens, you find that the conversation you’re engaged in is not as interesting, so it’s "uh-huh, uh-huh, oh really, yeah, summer," okay, and you start focusing in on this conversation on the right. Your ability to focus in on this conversation while ignoring that conversation, understanding how we do that is the challenge of my research.

And that problem actually has two separate aspects. There is one aspect of that problem that is really a problem of computation. It’s a problem that is a challenge to basically any computer: we have a whole bunch of different sounds from a bunch of different sources, and they are superimposed at the level of the ears. Somehow they’re added together, and it’s typically pretty effortless for us to separate out those different threads of the conversation, but actually that’s a surprisingly difficult task. Computers maybe 10 years ago were already getting pretty good at speech recognition, and in controlled situations and quiet rooms computers were actually pretty good at recognizing even random speakers. But when people actually started deploying these in real-world settings, where there is traffic noise and whatnot, the computer algorithms broke down completely. It was sort of surprising at first, because that is the aspect that we as humans seem to have not much trouble with at all. The ability to take apart the different components of an auditory scene, that’s what we’ve evolved for hundreds of millions of years to do really well. So that is one aspect of attention. There is this aspect of it that happens almost before we’re even aware of it, where the auditory scene is broken down into its components.

The other aspect, which is the one that we’re sort of more consciously aware of, is the one where out of the many components of this auditory scene we select one, and that is the one we focus in on, right? So at this hypothetical cocktail party we have the choice of focusing on either the conversation we’re engaged in or this one or that one.

Recorded August 20, 2010

Interviewed by Max Miller